How fundamentally difficult is a problem? Answering that question is the basic task of computer scientists who hope to sort problems into what are called complexity classes. These are groups that contain all the computational problems that require less than some fixed amount of a computational resource, something like time or memory. Take a toy example featuring a large number such as 123,456,789,001. One might ask: Is this number prime, divisible only by 1 and itself? Computer scientists can solve this using fast algorithms, meaning algorithms that don’t bog down as the number gets arbitrarily large. In our case, 123,456,789,001 is not a prime number. Then we might ask: What are its prime factors? Here no such fast algorithm is known, not unless you use a quantum computer. For that reason, computer scientists believe that the two problems are in different complexity classes.

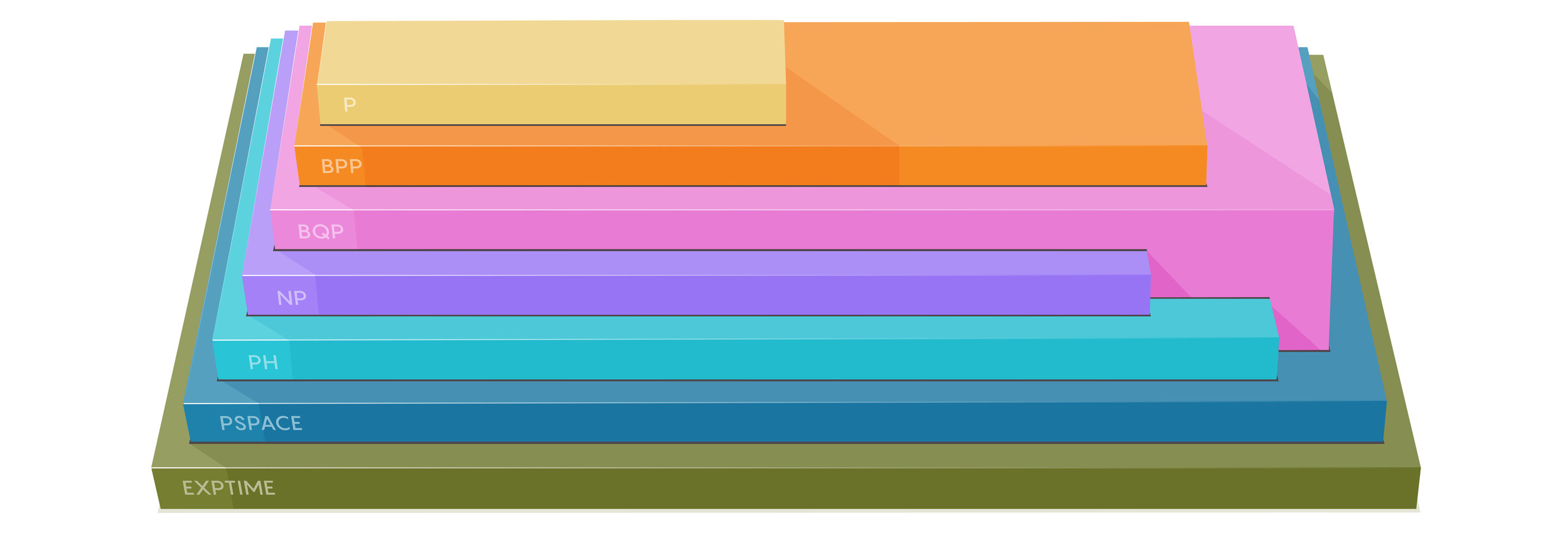

Many different complexity classes exist, though in most cases researchers haven’t been able to prove one class is categorically distinct from the others. Proving those types of categorical distinctions is among the hardest and most important open problems in the field. That’s why the new result I wrote about last month in Quanta was considered such a big deal: In a paper published at the end of May, two computer scientists proved (with a caveat) that the two complexity classes that represent quantum and classical computers really are different.

The differences between complexity classes can be subtle or stark, and keeping the classes straight is a challenge. For that reason, Quanta has put together this primer on seven of the most fundamental complexity classes. May you never confuse BPP and BQP again.

P

Stands for: Polynomial time

Short version: All the problems that are easy for a classical (meaning nonquantum) computer to solve.

Precise version: A problem is in P if some algorithm solves it, stopping with the right answer in at most n^c time, where n is the length of the input and c is some constant.

Typical problems:

• Is a number prime?

• What’s the shortest path between two points?
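
To make "easy for a classical computer" concrete, here is a minimal sketch of the shortest-path problem solved with Dijkstra's algorithm, whose running time grows only polynomially with the size of the map. The function name `shortest_path_length`, the adjacency-list format, and the toy `roads` map are assumptions made for illustration, and the sketch assumes that no distance is negative.

```python
import heapq

def shortest_path_length(graph, source, target):
    """Dijkstra's algorithm on a graph given as {node: [(neighbor, weight), ...]}.

    Assumes every weight is non-negative. Returns None if target is unreachable.
    """
    dist = {source: 0}
    heap = [(0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == target:
            return d
        if d > dist.get(node, float("inf")):
            continue  # stale queue entry; a shorter route was already found
        for neighbor, weight in graph.get(node, []):
            new_d = d + weight
            if new_d < dist.get(neighbor, float("inf")):
                dist[neighbor] = new_d
                heapq.heappush(heap, (new_d, neighbor))
    return None

# Hypothetical toy map: one-way roads with their lengths.
roads = {
    "A": [("B", 4), ("C", 1)],
    "B": [("D", 3)],
    "C": [("B", 2), ("D", 7)],
    "D": [],
}

print(shortest_path_length(roads, "A", "D"))
```

On this map the best route from A to D goes through C and then B.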

What researchers want to know: Is P the same thing as NP? If so, it would upend computer science and render most cryptography ineffective overnight. (Almost no one thinks this is the case.)

NP

Stands for: Nondeterministic Polynomial time

Short version: All problems that can be quickly verified by a classical computer once a solution is given.

Precise version: A problem is in NP if, given a “yes” answer, there is a short proof that establishes the answer is correct. If the input is a string, X, and you need to decide if the answer is “yes,” then a short proof would be another string, Y, that can be used to verify in polynomial time that the answer is indeed “yes.” (Y is sometimes referred to as a “short witness”—all problems in NP have “short witnesses” that allow them to be verified quickly.)

Typical problems:

• The clique problem. Imagine a graph with edges and nodes—for example, a graph where nodes are individuals on Facebook and two nodes are connected by an edge if they’re “friends.” A clique is a subset of this graph where all the people are friends with all the others. One might ask of such a graph: Is there a clique of 20 people? 50 people? 100? Finding such a clique is an “NP-complete” problem, meaning that it has the highest complexity of any problem in NP. But if given a potential answer—a subset of 50 nodes that may or may not form a clique—it’s easy to check.

• The traveling salesman problem. Given a list of cities with distances between each pair of cities, is there a way to travel through all the cities in less than a certain number of miles? For example, can a traveling salesman pass through every U.S. state capital in less than 11,000 miles?
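
The "easy to check" half of the clique problem can be sketched in a few lines. The verifier below takes a proposed group (the short witness) and confirms that every pair in it is connected. The name `verify_clique` and the toy `friendships` list are illustrative assumptions; the point is only that the check examines each pair once, so it runs in polynomial time even though finding the group may not.

```python
from itertools import combinations

def verify_clique(edges, candidate, k):
    """Return True if `candidate` names k distinct nodes that are all pairwise adjacent."""
    nodes = set(candidate)
    if len(nodes) != k:
        return False
    edge_set = {frozenset(edge) for edge in edges}
    # Roughly k*k/2 pair lookups: polynomial in the size of the input.
    return all(frozenset(pair) in edge_set for pair in combinations(nodes, 2))

# Hypothetical friendship graph.
friendships = [("ana", "bo"), ("ana", "cy"), ("bo", "cy"), ("cy", "dee")]

print(verify_clique(friendships, ["ana", "bo", "cy"], 3))   # everyone knows everyone
print(verify_clique(friendships, ["ana", "bo", "dee"], 3))  # ana and dee are not friends
```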

What researchers want to know: Does P = NP? Computer scientists are nowhere near a solution to this problem.

PH

Stands for: Polynomial Hierarchy

Short version: PH is a generalization of NP—it contains all the problems you get if you start with a problem in NP and add additional layers of complexity.

Precise version: PH contains problems with some number of alternating “quantifiers” that make the problems more complex. Here’s an example of a problem with alternating quantifiers: Given X, does there exist Y such that for every Z there exists W such that R happens? The more quantifiers a problem contains, the more complex it is and the higher up it is in the polynomial hierarchy.

Typical problem:

• Determine if there exists a clique of size 50 but no clique of size 51.
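
The quantifier structure of this problem can be spelled out in code: there exists a group of size k that is a clique, and every group of size k + 1 fails to be one. The brute-force sketch below is only meant to show that structure. It tries every group, so its running time explodes as the graph grows, and it is not a claim about how such problems are solved in practice. The function names and the toy graph are assumptions for illustration.

```python
from itertools import combinations

def has_clique(edges, nodes, k):
    """'There exists' step: is some group of k nodes pairwise adjacent?"""
    edge_set = {frozenset(edge) for edge in edges}
    return any(
        all(frozenset(pair) in edge_set for pair in combinations(group, 2))
        for group in combinations(nodes, k)
    )

def largest_clique_is(edges, nodes, k):
    """A clique of size k exists, and ('for every') no group of size k + 1 is a clique."""
    return has_clique(edges, nodes, k) and not has_clique(edges, nodes, k + 1)

# Hypothetical friendship graph.
people = ["ana", "bo", "cy", "dee"]
friendships = [("ana", "bo"), ("ana", "cy"), ("bo", "cy"), ("cy", "dee")]

print(largest_clique_is(friendships, people, 3))
```

A "yes" answer here has no obvious short witness: showing a clique of size k is easy, but certifying that no larger clique exists seems to require ruling out every bigger group.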

What researchers want to know: Computer scientists have not been able to prove that PH is different from P. This problem is equivalent to the P versus NP problem. PH contains NP, so if P differs from NP it also differs from PH. And if (unexpectedly) P = NP, then all of PH collapses to P (that is, P = PH).

PSPACE

Stands for: Polynomial Space

Short version: PSPACE contains all the problems that can be solved with a reasonable amount of memory.

Precise version: In PSPACE you don’t care about time, you care only about the amount of memory required to run an algorithm. Computer scientists have proven that PSPACE contains PH, which contains NP, which contains P.

Typical problem:

• Every problem in P, NP, and PH is in PSPACE.

What researchers want to know: Is PSPACE different from P?

BQP

Stands for: Bounded-error Quantum Polynomial time

Short version: All problems that are easy for a quantum computer to solve.

Precise version: All problems that a quantum computer can solve in polynomial time while giving the right answer with high probability (conventionally at least 2/3).

Typical problems:

• Identify the prime factors of an integer.

What researchers want to know: Computer scientists have proven that BQP is contained in PSPACE and that BQP contains P. They don’t know whether BQP is in NP, but they believe the two classes are incomparable: There are problems that are in NP and not BQP and vice versa.

EXPTIME

Stands for: Exponential Time

Short version: All the problems that can be solved in an exponential amount of time by a classical computer.

Precise version: EXPTIME (often abbreviated EXP) contains all the previous classes: P, NP, PH, PSPACE, and BQP. Researchers have proven that it’s different from P; they have found problems in EXP that are not in P.

Typical problem:

• Generalizations of games like chess and checkers are in EXP. If a chess board can be any size, it becomes a problem in EXP to determine which player has the advantage in a given board position.

What researchers want to know: Computer scientists would like to be able to prove that PSPACE does not contain EXP. They believe there are problems that are in EXP that are not in PSPACE, because sometimes in EXP you need a lot of memory to solve the problems. What they can already do is separate EXP from P.

BPP

Stands for: Bounded-error Probabilistic Polynomial time

Short version: Problems that can be quickly solved by algorithms that include an element of randomness.

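Precise version: BPP is defined just like P, except that the algorithm is allowed to include steps where its decision-making is randomized. Algorithms for problems in BPP are required only to give the right answer with a probability comfortably above one-half (conventionally at least 2/3), which can be pushed close to 1 by running the algorithm repeatedly and taking the majority answer.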

Typical problem:

• You’re handed two different formulas that each produce a polynomial that has many variables. Do the formulas compute the exact same polynomial? This is called the polynomial identity testing problem.
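
A standard randomized approach to this problem is to plug the same random numbers into both formulas and compare the results. If the outputs ever differ, the polynomials are certainly different. If they agree at many random points, they are almost certainly the same (this is the idea behind the Schwartz-Zippel lemma). The sketch below is a minimal illustration under stated assumptions: the formulas are ordinary Python functions with integer coefficients much smaller than the chosen prime, and the names `probably_same_polynomial`, `trials`, and `prime` are made up for this example.

```python
import random

def probably_same_polynomial(f, g, num_vars, trials=20, prime=2**61 - 1):
    """Randomized identity test: compare f and g at random points modulo a prime."""
    for _ in range(trials):
        point = [random.randrange(prime) for _ in range(num_vars)]
        if f(*point) % prime != g(*point) % prime:
            return False  # a single disagreement proves the polynomials differ
    return True  # agreement on every sample: identical with high probability

# Two formulas for the same polynomial, and one that is different.
f = lambda x, y: (x + y) ** 2
g = lambda x, y: x * x + 2 * x * y + y * y
h = lambda x, y: x * x + y * y

print(probably_same_polynomial(f, g, 2))
print(probably_same_polynomial(f, h, 2))
```

The test can err in only one direction: it may call two different polynomials "the same" if every random point happens to hide the difference, but with a large prime and repeated trials that chance is tiny.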

What researchers want to know: Computer scientists would like to know whether BPP = P. If that is true, it would mean that every randomized algorithm can be de-randomized. They believe this is the case—that there is an efficient deterministic algorithm for every problem for which there exists an efficient randomized algorithm—but they have not been able to prove it.

Lead image: The various “complexity classes” sort problems into something like a hierarchy: One class might contain all the problems of another, plus problems that require additional computational resources. Credit: Lucy Reading-Ikkanda/Quanta Magazine