Today’s most successful artificial intelligence algorithms, artificial neural networks, are loosely based on the intricate webs of real neural networks in our brains. But unlike our highly efficient brains, running these algorithms on computers guzzles shocking amounts of energy: The biggest models consume nearly as much energy as five cars over their lifetimes.

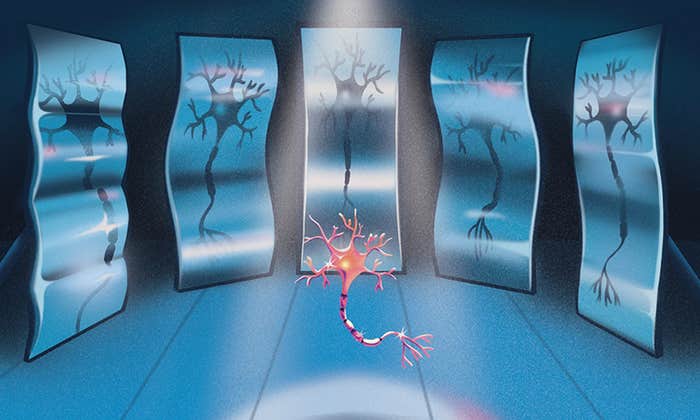

Enter neuromorphic computing, a closer match to the design principles and physics of our brains that could become the energy-saving future of AI. Instead of shuttling data over long distances between a central processing unit and memory chips, neuromorphic designs imitate the architecture of the jelly-like mass in our heads, with computing units (neurons) placed next to memory (stored in the synapses that connect neurons). To make them even more brain-like, researchers combine neuromorphic chips with analog computing, which can process continuous signals, just like real neurons. The resulting chips are vastly different from the current architecture and computing mode of digital-only computers that rely on binary signal processing of 0s and 1s.

With the brain as their guide, neuromorphic chips promise to one day demolish the energy consumption of data-heavy computing tasks like AI. Unfortunately, AI algorithms haven’t played well with the analog versions of these chips because of a problem known as device mismatch: On the chip, tiny components within the analog neurons are mismatched in size due to the manufacturing process. Because individual chips aren’t sophisticated enough to run the latest training procedures, the algorithms must first be trained digitally on computers. But then, when the algorithms are transferred to the chip, their performance breaks down once they encounter the mismatch on the analog hardware.

Now, a paper published in January 2022 in the Proceedings of the National Academy of Sciences has finally revealed a way to bypass this problem. A team of researchers led by Friedemann Zenke at the Friedrich Miescher Institute for Biomedical Research and Johannes Schemmel at Heidelberg University showed that an AI algorithm known as a spiking neural network—which uses the distinctive communication signal of the brain, known as a spike—could work with the chip to learn how to compensate for device mismatch. The paper is a significant step toward analog neuromorphic computing with AI.

“The amazing thing is that it worked so well,” said Sander Bohte, a spiking neural network expert at CWI, the national research institute for mathematics and computer science in the Netherlands. “It is quite an achievement and likely a blueprint for more with analog neuromorphic systems.”

The importance of analog computing to brain-based computing is subtle. Digital computing can effectively represent one binary aspect of the brain’s spike signal, an electrical impulse that shoots through a neuron like a lightning bolt. As with a binary digital signal, either the spike is sent out, or it’s not. But spikes are sent over time continuously—it’s an analog signal—and how our neurons decide to send out a spike in the first place is also continuous, based on a voltage inside the cell that changes over time. (When the voltage reaches a specific threshold compared to the voltage outside the cell, the neuron sends a spike.)
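The threshold rule in that parenthetical can be sketched in a few lines of Python. This is a minimal, discrete-time "leaky integrate-and-fire" toy, not the circuitry of any particular chip. The function name `simulate_lif` and the `threshold`, `leak` and `v_reset` values are assumptions chosen for illustration.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, v_reset=0.0):
    """Simulate one leaky integrate-and-fire neuron in discrete time steps.

    Returns the voltage trace and the step indices at which the neuron spiked.
    All parameter values are illustrative, not measured from real neurons.
    """
    voltage = v_reset
    trace, spike_times = [], []
    for step, current in enumerate(input_current):
        # The voltage leaks away a little each step and is pushed up by input.
        voltage = leak * voltage + current
        if voltage >= threshold:
            # Crossing the threshold is the all-or-nothing event: a spike.
            spike_times.append(step)
            voltage = v_reset
        trace.append(voltage)
    return trace, spike_times


# A steady input drives the voltage up until the neuron fires, again and again.
trace, spikes = simulate_lif([0.3] * 20)
print(spikes)  # [3, 7, 11, 15, 19]
```

The spike itself is binary, but when it happens depends on a voltage that changes continuously. A weaker input such as `[0.05] * 20` never reaches the threshold in this toy, so the neuron stays silent.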

“In analog lies the beauty of the brain’s core computations. Emulating this key aspect of the brain is one of the main drivers of neuromorphic computing,” said Charlotte Frenkel, a neuromorphic engineering researcher at the University of Zurich and ETH Zurich.

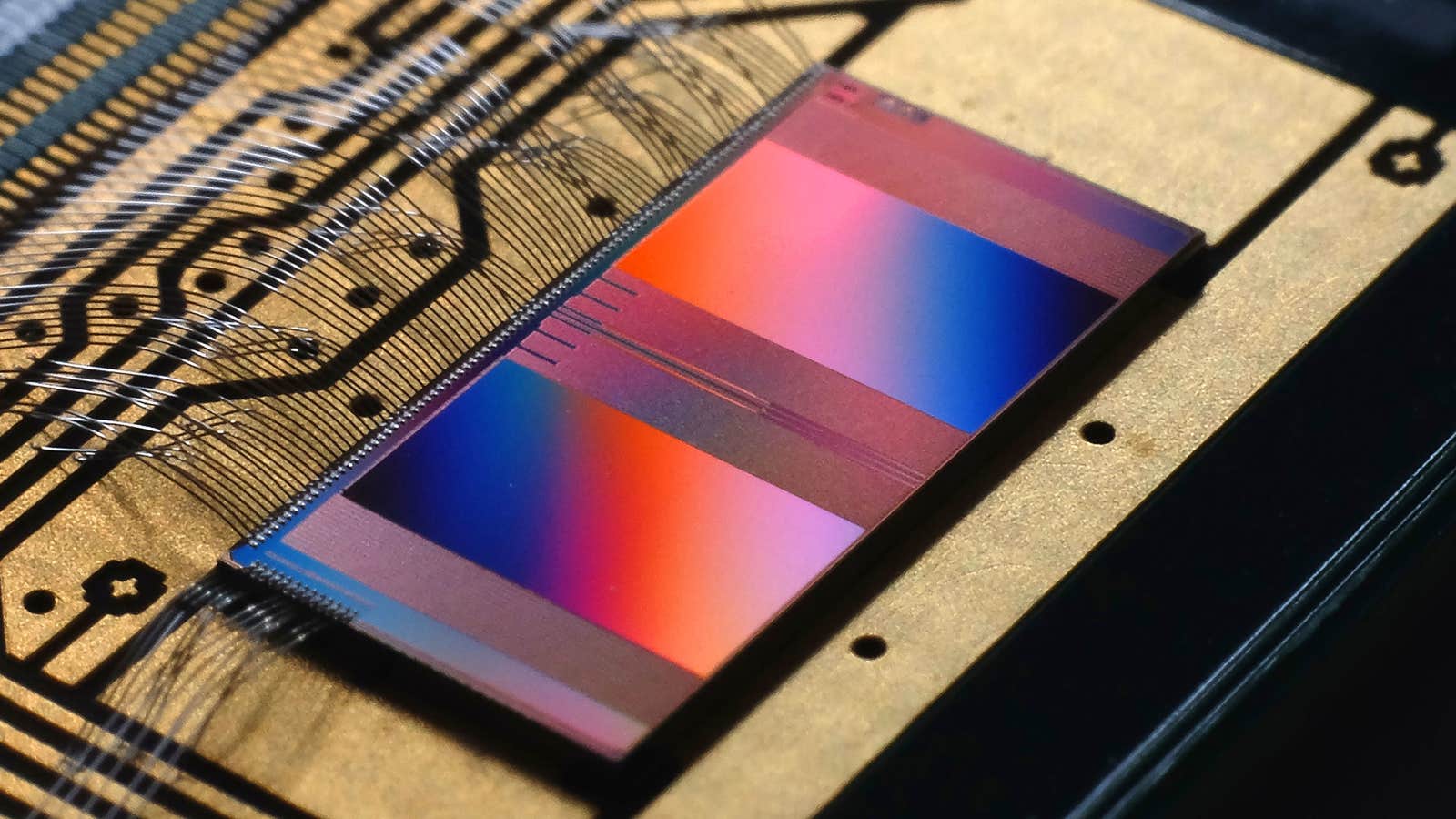

In 2011, a group of researchers at Heidelberg University began developing a neuromorphic chip with both analog and digital aspects to closely model the brain for neuroscience experiments. Now led by Schemmel, the team has unveiled the latest version of the chip, dubbed BrainScaleS-2. Every analog neuron on the chip mimics a brain cell’s incoming and outgoing currents and voltage changes.

“You really have a dynamical system that is continuously exchanging information,” said Schemmel. And because the chip’s materials have different electrical properties than brain tissue, the chip transfers information 1,000 times faster than our brains.

But since the analog neurons’ properties vary ever so slightly (the device mismatch problem), the voltages and levels of current also vary across neurons. The algorithms can’t handle this, since they were trained on computers with perfectly identical digital neurons, and suddenly their on-chip performance plummets.

The work shows a way forward. By including the chip in the training process, the authors showed that spiking neural networks could learn how to correct for the varying voltages on the BrainScaleS-2 chip. “This training setup is one of the first convincing proofs that variability can not only be compensated [for], but also likely exploited,” Frenkel said.

To deal with device mismatch, the team combined an approach that allows the chip to talk to the computer with a new learning method called surrogate gradients, co-developed by Zenke specifically for spiking neural networks. It works by changing the connections between neurons to minimize how many errors a neural network makes in a task. (This is similar to the method used by non-spiking neural networks, called backpropagation.)
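Why spiking networks need a special learning method can be shown with a toy example. A spike is all-or-nothing, so the slope of the spike function is zero almost everywhere, and an error signal has nothing to grab onto. The surrogate gradient idea keeps the hard spike in the forward pass but substitutes a smooth slope when computing updates. The sketch below uses a "fast sigmoid" shape, one common choice in the surrogate gradient literature. It is not necessarily the function used in the paper, and the single-weight setup, `beta` and `lr` are assumptions for illustration.

```python
def spike(voltage, threshold=1.0):
    """Forward pass: an all-or-nothing spike (a step function)."""
    return 1.0 if voltage >= threshold else 0.0


def surrogate_grad(voltage, threshold=1.0, beta=10.0):
    """Backward pass: a smooth stand-in for the step's slope.

    Largest at the threshold and fading with distance from it.
    """
    return 1.0 / (1.0 + beta * abs(voltage - threshold)) ** 2


def train_weight(weight, x=1.0, target=1.0, lr=1.0, steps=50):
    """Nudge one connection weight until the neuron produces the target output."""
    for _ in range(steps):
        voltage = weight * x
        error = spike(voltage) - target
        # Chain rule, with the surrogate in place of the step's true slope.
        # The true slope is zero here, so the weight would never move.
        grad = error * surrogate_grad(voltage) * x
        weight -= lr * grad
    return weight


learned = train_weight(0.5)
print(spike(0.5), spike(learned))  # 0.0 1.0
```

The neuron starts out silent and ends up firing, even though the output it is trained on only ever takes the values 0 and 1.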

Effectively, the surrogate gradient method was able to correct for the chip’s imperfections during training on the computer. First, the spiking neural network performs a simple task using the varying voltages of the analog neurons on the chip, sending recordings of the voltages back to the computer. There, the algorithm automatically learns how best to alter the connections between its neurons so that it still plays nicely with the analog neurons, continuously updating them on the chip while it learns. Then, when the training is complete, the spiking neural network performs the task on the chip. The researchers report that their network reached the same level of accuracy on a speech task and a vision task as the top spiking neural networks performing those tasks on computers. In other words, the algorithm learned exactly what changes it would need to make to overcome the device mismatch problem.
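The loop described above can be imitated with a deliberately simplified toy. Here `MockAnalogChip` is a hypothetical stand-in, not the BrainScaleS-2 interface: each of its neurons multiplies its input by a slightly different gain that the computer never gets to see. To keep the sketch short, the neurons are non-spiking and the task is simply to hit a target voltage. The gains, targets and learning rate are all assumptions.

```python
import random


class MockAnalogChip:
    """Hypothetical analog hardware: every neuron has its own fixed, hidden gain."""

    def __init__(self, n_neurons, mismatch=0.2, seed=0):
        rng = random.Random(seed)
        self.gains = [1.0 + rng.uniform(-mismatch, mismatch) for _ in range(n_neurons)]

    def run(self, weights, x=1.0):
        """Run the 'network' on the chip and return the recorded voltages."""
        return [g * w * x for g, w in zip(self.gains, weights)]


def ideal_run(weights, x=1.0):
    """The computer's idealized model: perfectly identical neurons."""
    return [w * x for w in weights]


def train(forward, targets, x=1.0, lr=0.5, steps=200):
    """Update weights on the computer using voltages returned by `forward`."""
    weights = [0.0] * len(targets)
    for _ in range(steps):
        voltages = forward(weights, x)
        # The update uses the idealized slope (x); only the voltages are real.
        weights = [w - lr * (v - t) * x for w, v, t in zip(weights, voltages, targets)]
    return weights


def worst_error(voltages, targets):
    return max(abs(v - t) for v, t in zip(voltages, targets))


targets = [1.0, 0.5, 0.8, 0.3]
chip = MockAnalogChip(len(targets))

# Train entirely on the computer, then transfer the weights to the chip.
offline_weights = train(ideal_run, targets)
offline_error = worst_error(chip.run(offline_weights), targets)

# Train with the chip in the loop: voltages are recorded on the chip each step.
in_loop_weights = train(chip.run, targets)
in_loop_error = worst_error(chip.run(in_loop_weights), targets)

print(offline_error > in_loop_error)  # True
```

In this toy, the weights trained offline miss their targets once they meet the mismatched gains, while the weights trained with the chip in the loop quietly absorb each neuron’s quirk. The computer never needs to know the gains themselves, only what the chip actually did.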

“The performance they achieved to do a real problem with this system is a big accomplishment,” said Thomas Nowotny, a computational neuroscientist at the University of Sussex. And, as expected, they do so with impressive energy efficiency; the authors say that running their algorithm on the chip consumed about 1,000 times less energy than a standard processor would require.

However, Frenkel points out that while the energy consumption is good news so far, neuromorphic chips will still need to prove themselves against hardware that has been optimized for similar speech and vision recognition tasks, rather than standard processors. And Nowotny cautions that the approach may have trouble scaling up to large practical tasks, since it still requires shuttling the data back and forth between computer and chip.

The long-term goal is for spiking neural networks to train and run on neuromorphic chips from start to finish, without any need for a computer at all. But that would require building a new chip generation, which takes years, Nowotny said.

For now, Zenke and Schemmel’s team is happy to show that spiking neural network algorithms can handle the minuscule variations between analog neurons on neuromorphic hardware. “You can rely on 60 or 70 years of experience and software history for digital computing,” Schemmel said. “For this analog computing, we have to do everything on our own.”

Lead image: The BrainScaleS-2 neuromorphic chip, developed by neuromorphic engineers at Heidelberg University, uses tiny circuits that mimic the analog computing of the real neurons in our brains. Credit: Heidelberg University

This article was originally published on the Quanta Abstractions blog.