Every day there seems to be a new headline: Yet another scientific luminary warns about how recklessly fast companies are creating more and more advanced forms of AI, and the great dangers this tech poses to humanity.

We share many of the concerns that people like AI researchers Geoffrey Hinton, Yoshua Bengio, and Eliezer Yudkowsky; philosopher Nick Bostrom; cognitive scientist Douglas Hofstadter; and others have expressed about the risks of failing to regulate or control AI as it becomes exponentially more intelligent than human beings. This is known as “the control problem,” and it is AI’s “hard problem.”

Once AI is able to improve itself, it will quickly become much smarter than us in almost every aspect of intelligence, then a thousand times smarter, then a million, then a billion … What does it mean to be a billion times more intelligent than a human? Well, we can’t know, in the same way that an ant has no idea what it’s like to have a mind like Einstein’s. In such a scenario, the best we can hope for is benign neglect of our presence. We would quickly become like ants at its feet. Imagining humans can control superintelligent AI is a little like imagining that an ant can control the outcome of an NFL football game being played around it.

Why do we think this? In mathematics, science, and philosophy, it is helpful to first categorize problems as solvable, partially solvable, unsolvable, or undecidable. It’s our belief that if we develop AIs capable of improving their own abilities (known as recursive self-improvement), there can, in fact, be no solution to the control problem. One of us (Roman Yampolskiy) made the case for this in a recent paper published in the Journal of Cyber Security and Mobility. It is possible to demonstrate that a solution to the control problem does not exist.

The thinking goes like this: Controlling superintelligent AI will require a toolbox with certain capabilities, such as being able to explain an AI’s choices, predict which choices it will make, and verify these explanations and predictions. Today’s AI systems are “black box” by nature: Neither we nor the AI can explain why the AI makes any particular decision or produces any particular output. Nor can we accurately and consistently predict what specific actions a superintelligent system will take to achieve its goals, even if we know the ultimate goals of the system. (Knowing what a superintelligence would do to achieve its goals would require our intelligence to be on par with the AI’s.)

Since we cannot explain how a black-box intelligence makes its decisions, or predict what those decisions will be, we’re in no position to verify our own conclusions about what the AI is thinking or plans on doing.

More generally, it is becoming increasingly obvious that, just as we can only have probabilistic confidence in the proofs mathematicians come up with and the software computer engineers implement, our ability to explain, predict, and verify AI agents is, at best, limited. And when the stakes are so incredibly high, it is not acceptable to have only probabilistic confidence in these control and alignment issues.

The precautionary principle is a long-standing approach for new technologies and methods that urges positive proof of safety before real-world deployment. Companies like OpenAI have so far released their tools to the public with no requirements at all to establish their safety. The burden of proof should be on companies to show that their AI products are safe—not on public advocates to show that those same products are not safe.

Recursively self-improving AI, the kind many companies are already pursuing, is the most dangerous kind, because it may lead to an intelligence explosion some have called “the singularity,” a point in time beyond which it becomes impossible to predict what might happen because AI becomes god-like in its abilities. That moment could happen in the next year or two, or it could be a decade or more away.

Humans won’t be able to anticipate what a far-smarter entity plans to do or how it will carry out its plans. Such superintelligent machines, in theory, will be able to harness all of the energy available on our planet, then the solar system, then eventually the entire galaxy, and we have no way of knowing what those activities will mean for human well-being or survival.

Can we trust that a god-like AI will have our best interests in mind? Similarly, can we trust that human actors using the coming generations of AI will have the best interests of humanity in mind? With the stakes so incredibly high in developing superintelligent AI, we must have a good answer to these questions—before we go over the precipice.

Because of these existential concerns, more scientists and engineers are now working toward addressing them. For example, the theoretical computer scientist Scott Aaronson recently said that he’s working with OpenAI to develop ways of implementing a kind of watermark on the text that the company’s large language models, like GPT-4, produce, so that people can verify the text’s source. It’s still far too little, and perhaps too late, but it is encouraging to us that a growing number of highly intelligent humans are turning their attention to these issues.

Philosopher Toby Ord argues, in his book The Precipice: Existential Risk and the Future of Humanity, that in our ethical thinking and, in particular, when thinking about existential risks like AI, we must consider not just the welfare of today’s humans but the entirety of our likely future, which could extend for billions or even trillions of years if we play our cards right. So the risks stemming from our AI creations need to be considered not only over the next decade or two, but for every decade stretching forward over vast amounts of time. That’s a much higher bar than ensuring AI safety “only” for a decade or two.

Skeptics of these arguments often suggest that we can simply program AI to be benevolent, and if or when it becomes superintelligent, it will still have to follow its programming. This ignores the ability of superintelligent AI to either reprogram itself or to persuade humans to reprogram it. In the same way that humans have figured out ways to transcend our own “evolutionary programming”—caring about all of humanity rather than just our family or tribe, for example—AI will very likely be able to find countless ways to transcend any limitations or guardrails we try to build into it early on.

Can’t we simply “pull the plug” on superintelligent AI if we start having second thoughts? No. AI will be cloud-based and stored in multiple copies, so it wouldn’t be clear what exactly to unplug. Plus, a superintelligent AI would anticipate every possible way it could be unplugged and find ways of countering any attempt to cut off its power, due either to its mission to accomplish its goals optimally or to goals of its own that it develops over time as it achieves superintelligence.

Some thinkers, including influential technologists and scholars like Yann LeCun (Meta’s top AI developer and a Turing Award winner), have said, “AI is intrinsically good,” so they view the creation of superintelligent AI as almost necessarily resulting in far better outcomes for humanity. But the history of our own species and others on this planet suggests that any time a much stronger entity encounters weaker entities, the weaker are generally crushed or subjugated. Buddhist monk Soryu Forall, who has been meditating on the risks of AI and leading techpreneurs in retreats for many years, said it well in a recent piece in The Atlantic: Humanity has “exponentially destroyed life on the same curve as we have exponentially increased intelligence.”

Philosopher Nick Bostrom and others have also warned of “the treacherous turn” scenario, in which AI may “play nice,” concealing its true intelligence or intentions until it is too late. At that point, all the safeguards humans have put in place may be shrugged off like a giant shrugging off the threads of the Lilliputians.

AI pioneer Hugo de Garis warned, in his 2005 book The Artilect War, about the potential for world war stemming from debates about the wisdom (or lack thereof) in developing advanced AI—even before such AI comes around. It is also all but certain that advanced nations will turn to AI weapons and AI-controlled robots in fighting future wars. Where does this new arms race end? Every sane person of course wishes that such conflicts do not come about, but we have fought world wars over far, far less.

Indeed, the militaries of the United States and many other nations are already developing advanced AI weaponry such as automated weaponized drones (one kind of “lethal autonomous weapon”). There is also already a broad push to limit any further development of such weapons.

Meanwhile, many AI researchers and firms seem to be working at cross purposes with themselves. OpenAI, the group behind GPT-4, recently announced a significant “superalignment initiative.” This is a large effort, committing at least 20 percent of OpenAI’s monumental computing power and resources, to solve the alignment problem in the next four years. Unfortunately, we know already, under the logic we’ve described above, that this effort cannot succeed. Nothing in OpenAI’s announcement or related literature addresses the question we posed above: Is the alignment problem solvable in principle? We have suggested it is not.

If OpenAI had instead announced it was ceasing all work on developing new and more powerful large language models like GPT-5, and would devote 100 percent of its computing power and resources to solving the alignment problem for AIs with limited capabilities, we would support this move. But as long as development of artificial general intelligence (AGI) continues, at OpenAI and the dozens of other companies now working on AGI, it is all but certain that AGI will escape humanity’s control before long.

Given these limitations, the best AI we can hope for is “safer AI.” One of us (Tam Hunt) is developing a “philosophical heuristic imperatives” model that hopes to bias AI toward better outcomes for humanity. (Forall is pursuing a similar idea independently, as is Elon Musk, whose newly announced xAI venture has the stated goal “to understand the universe.”)

To achieve that, we should imbue at least some AIs with a fundamental benevolence and goals that emphasize finding answers to the great philosophical questions that will very likely never have a final answer—and to recognize that humanity, and all other life forms, have continuing value as part of that forever mystery.

Even if this approach could work to inculcate life-preserving values into some AI, however, there is no way to guarantee that all AIs would be taught this way. And there is no way to prevent these AIs from later undoing the imperatives instilled early in their lifespan.

Safer AI is better than unfettered AI, but it still amounts, in our view, to playing Russian roulette with humanity, with a live round in almost every chamber.

The wiser option is to pause, now, while we collectively consider the consequences of what we are doing with AI. There’s no hurry to develop superintelligence. With the stakes so high, it is imperative that we proceed with the utmost caution.

Tam Hunt is a public policy lawyer and philosopher based in Hawaii. He blogs at tamhunt.medium.com and his website is tam-hunt.com.

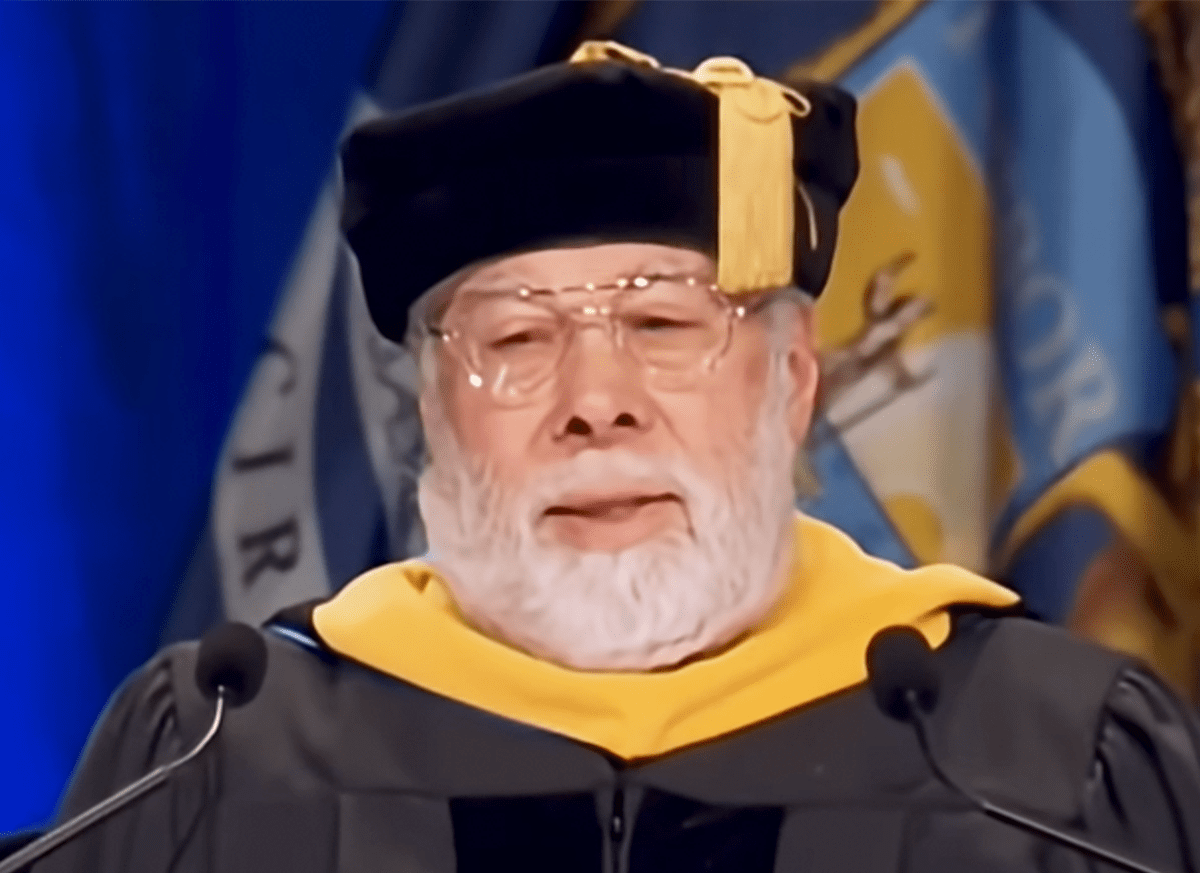

Roman Yampolskiy is a computer scientist at the University of Louisville, known for his work on AI Safety.

Lead image: Ole.CNX / Shutterstock