How did we humans manage to build a global civilization on the cusp of colonizing other planets? It seems like such an unlikely outcome. After all, for millennia we were prone to cycles of war and famine, and we still have a meager capacity for society-wide planning and coordination, among other problems.

Maybe it’s our unique capacity for complex language and storytelling, which allows us to learn in groups; or our ability to extend our capabilities through technology; or the political and religious institutions we have created. However, perhaps the most significant answer is something else entirely: code. Humanity has survived, and thrived, by developing productive activities that evolve into regular routines and standardized platforms. That is to say, we have survived, and thrived, by creating and advancing code.

The word “code” derives from the Latin codex, meaning “a system of laws.” Today “code” is used in various distinct contexts, including computer code, genetic code, cryptologic code (such as Morse code), ethical code, and building code, all of which share a common feature: they contain instructions that describe a process. Computer code requires the action of a compiler (or interpreter), energy, and (usually) inputs in order to become a useful program. Genetic code requires expression through the selective action of enzymes to produce proteins or RNA, ultimately producing a unique phenotype. Cryptologic code requires decryption. Ethical codes, legal codes, and building codes all require processes of interpretation in order to be converted into action.

“Code” as I intend it incorporates elements of computer code, genetic code, cryptologic code, and other forms as well. But, as I describe in my book The Code Economy: A Forty-Thousand-Year History, published this year, it also stands as its own concept: the algorithms that guide production in the economy, for which no adequate word yet exists. Code can include instructions we follow consciously and purposively, and those we follow unconsciously and intuitively. Code can be understood tacitly, it can be written, or it can be embedded in hardware. Code can be stored, transmitted, received, and modified. Code captures the algorithmic nature of instructions as well as their evolutionary character.

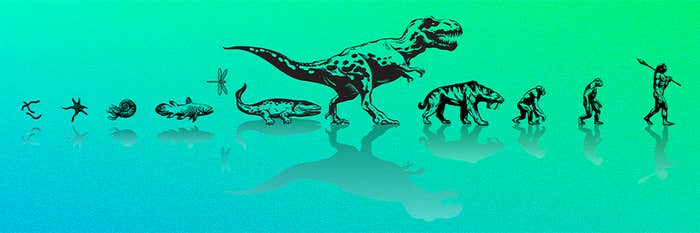

The word that comes closest to “code” in this economic context is “recipe.” There has been code in production literally since the first time a human being prepared food. How important has this capacity been to human advance? Substantial anthropological research suggests that culinary recipes were the earliest and among the most transformative technologies employed by humans. We have understood for some time that cooking accelerated human evolution by substantially increasing the nutrients absorbed in the stomach and small intestine. However, recent research suggests that human ancestors were using recipes to prepare food to dramatic effect as early as 2 million years ago—even before we learned to control fire and cooking became common, which occurred about 500,000 years ago. Simply slicing meats and pounding tubers (such as yams), as was done by our earliest ancestors, turns out to yield digestive advantages that are comparable to those realized by cooking. Cooked or raw, increased nutrient intake enabled us to evolve smaller teeth and chewing muscles and even a smaller gut than our ancestors or primate cousins. These evolutionary adaptations in turn supported the development of humans’ larger, energy-hungry brain.

The first recipes—code at work—literally made humans what we are today.

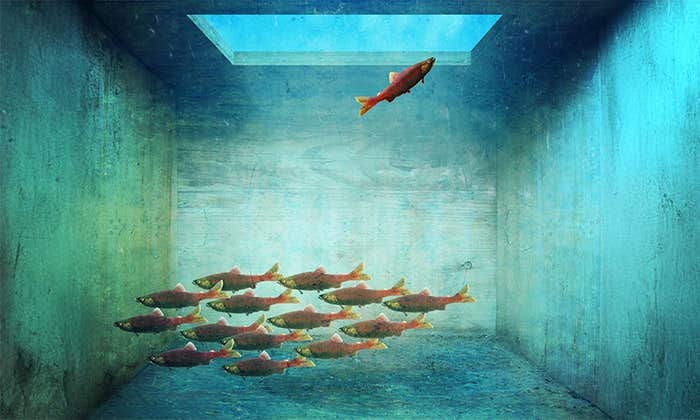

In the economy, raw materials are like diatoms, amoebas, or plankton in the biological food chain, whereas standardized platforms are like complex multicellular organisms. As code advances, higher-level technologies feed on more fundamental technologies in much the same way more complex organisms feed on simpler organisms in the food chain. Platforms provide essential structures for the code economy: The infrastructure that underlies a city is a standardized platform. Written language is a standardized platform. The Internet is a standardized platform. Human civilization has thus advanced through the creation and improvement of code, which is built on layers of platforms that accumulate like the pipes and tunnels that lie below a great city.

In the past 200 years, the complexity of code has increased by orders of magnitude. Death rates began to fall rapidly in the middle of the 19th century, due to a combination of increased agricultural output, improved hygiene, and the beginning of better medical practices—all different dimensions of the advance of code. Accordingly, the population grew. As newly invented machines and improved practices (again, read “code”) reduced the need for manual labor in agriculture, urbanization rapidly intensified and humanity’s cognitive surplus increased. Greater numbers of people living in greater density than ever before accelerated the advance of code.

By the 20th century, the continued advance of code seemed to necessitate the creation of government bureaucracies and large corporations that employed vast numbers of people. These organizations executed code of sufficient complexity that it was beyond the capacity of any single individual to master. To structure work within such large, complex organizations, humans began to define occupations in terms of specific task-defined roles rather than by artisanal trades, as had been the case throughout human history. We came to call these task-defined roles “jobs.” Jobs were very different from the trades, in that they were designed to optimize institutional operations rather than to perpetuate and advance inherited, mostly unwritten production practices. In this way, the artisans, serfs, and merchants who defined the medieval agrarian economy were replaced by an industrial economic order dominated by workers who executed the subroutines of complex algorithms performed by large corporate entities.

Two broad categories of epochal change occurred as a result of this evolution of the economy from simplicity to complexity. One is that our capabilities grew, individually and collectively. For instance, we can now fly. (I am encoding these very words while moving far above the clouds at a speed many times faster than the fastest chariot, employing a highly evolved abacus known as a computer.) We can carry on conversations with people anywhere around the world. By consuming small quantities of a serum made from mold we can defeat microscopic “armies” that attack our bodies. We can use eggs to make a delicious sauce known as mayonnaise. But that’s not all.

The second epochal change related to the advance of code is that we have, to an increasing degree, ceded to other people—and to code itself—authority and autonomy, which for millennia we had kept unto ourselves and our immediate tribal groups as uncodified cultural norms. We now obey written laws and rules. We follow instructions. We respect elected officials (in our actions if not always our thoughts) and the elected officials respect electoral processes (in their words if not always their actions). We do our jobs. We no longer have our own wells or, in most cases, our own gardens. Most of us (myself included) have forgotten how to hunt. We depend for our survival on an ever-growing array of services provided by others, who in turn are ceding an increasing amount of their authority to code. As Nick Diakopoulos, the director of the Computational Journalism Lab at the University of Maryland, has pointed out, this has spurred a new kind of journalism, what he calls “algorithmic accountability reporting.” He says it “seeks to articulate the power structures, biases, and influences that computational artifacts play in society.”

Code is at once a means of liberation and a means of constraint. Its advance is perhaps as integral to the unfolding of human history as the actions of all heads of state combined. We cannot understand the dynamics of the economy, past or future, without understanding code.

Philip Auerswald is the author of The Code Economy: A Forty-Thousand-Year History, from which this post is adapted. He is also an Associate Professor of Public Policy at George Mason University and the Co-founder and Co-editor of Innovations, a quarterly journal about entrepreneurial solutions to global challenges.

Image credit: Donnie Ray Jones / Flickr