Last week I was typing away, working on a story, when a noisy video ad popped up on my screen. “Shut up already!” I growled. Suddenly, Siri, who I didn’t realize I had activated, chimed in with the tone of an older sister. “That’s not nice!” she admonished me. I felt kind of … strange. I was being uncivilized, which I shouldn’t be with any entity. But did I just hurt a machine’s feelings? Should I apologize?

Of course, I can see the absurdity of fretting about a machine’s feelings. I’m an adult.

But in recent years many researchers have begun to ask how kids relate to the omnipresence of voice-controlled smart devices such as Alexa, Siri, and Google Home. These questions are becoming even more pressing as AI devices progress from mere conveniences that play music or tell the weather into companions for the elderly, interactive buddies for youngsters, and even teaching assistants that help caregivers improve social skills in children with autism. Yet there’s a catch to how these gadgets interact with people that may have particularly pronounced effects on children who are still learning the basic rules of social etiquette.

That has prompted a slew of questions from scientists. Do kids differentiate AI from people? Should they use “please” and “thank you” when speaking to the AIs? If they don't, are they learning they don’t have to be polite to elicit help or interaction? How is that shaping their social and emotional development, and their capacity for empathy and compassion? Do Alexa and company hamper children’s emotional IQ—the crucial capabilities every human needs to form friendships, tell right from wrong, and generally be successful in life? Should we worry about smart devices dumbing down our kids, socially?

Guess what: neither Alexa, nor Siri, nor Google Home can fetch the answer.

The gadgets certainly don’t yet foster civility well, points out Anmol Arora, an academic physician at the University of Cambridge who studies artificial intelligence. In human interactions, a child who forgets to say the magic word would usually be reminded to use it. “But it’s beyond the scope of a smart device,” says Arora, who recently co-authored an opinion piece on the subject in Archives of Disease in Childhood. Amazon did equip Alexa with an optional “Magic Word” function in 2018 that makes the AI acknowledge good manners; Google Home began offering a similar function the same year, called “Pretty Please”; and my experience with Siri let me know Apple can have ears on etiquette, too.

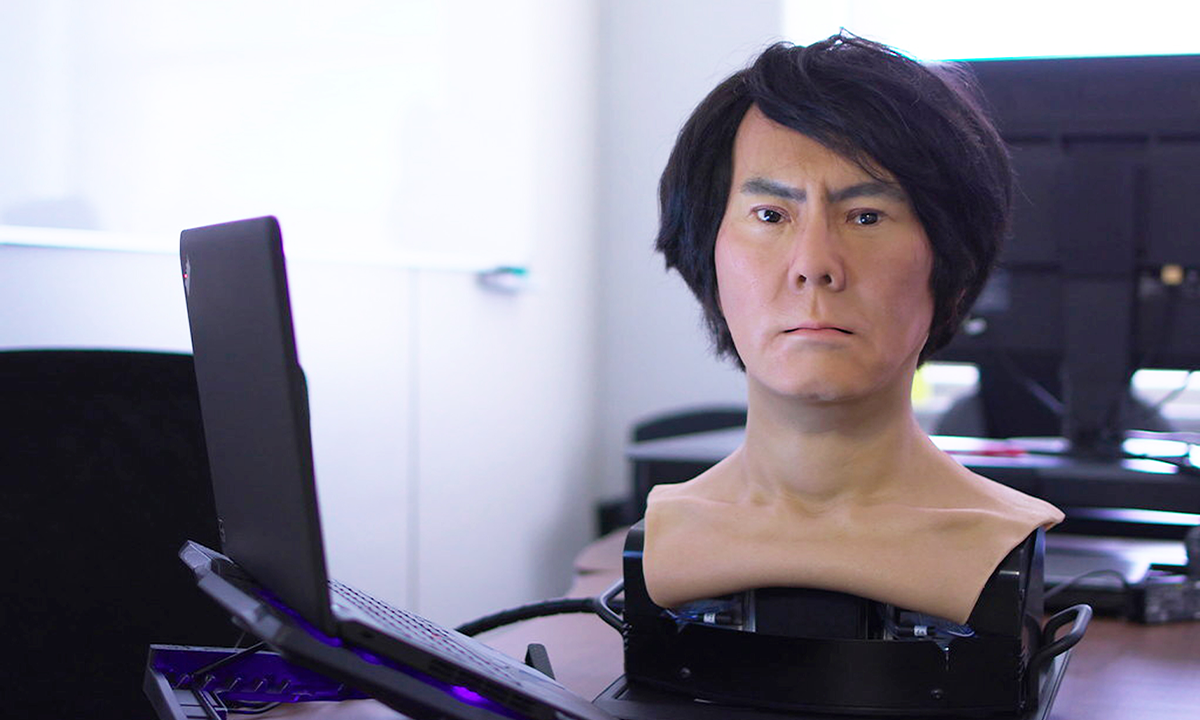

Even if these virtual assistants can swoop in as manners police, there are other problems. The AI devices can respond to commands and supply information, but they still can’t foster a meaningful interaction during which learning happens, Arora points out. When children ask questions of adults, a conversation usually follows. The adult explains things, or challenges or encourages the kid’s reasoning through an evolving dialogue. The smart devices aren’t smart enough to do that. Instead they fetch the requested info, often without context. Nor do they encourage kids to think critically about the information or to tell right from wrong, both crucial steps in forming an informed perspective. “There’s an element of the conversation which is missing when kids go to the devices,” Arora says.

The assistants also don’t critically assess the information they fetch. One scary example came in 2021, when Alexa told a 10-year-old girl to “plug in a phone charger about halfway into a wall outlet, then touch a penny to the exposed prongs.” The AI did this because it had been asked for a “challenge to do.” The so-called “penny challenge” had been circulating on TikTok and other social media, so Alexa fetched it because it was a linguistic match, without applying any filter. Afterward, Amazon said it updated Alexa to fix the bug, but the incident was a chilling demonstration of the AI’s reasoning limits.

And yet, that’s not what most worries Jordan Shapiro, associate professor at Temple University, education fellow at the Brookings Institution, and author of the book The New Childhood: Raising Kids To Thrive in a Connected World. Shapiro sees the problems of kid-AI communication in a different light.

Alexa and Siri won’t dumb down our kids emotionally because they are not raising them, he says. These devices are simply a different form of media. We don’t typically expect television, video games, or the internet to be wholesale substitutes for teaching our kids manners, critical thinking, and basic safety rules. The voice devices are yet another, more sophisticated version of such media. They provide information and entertain, but they aren’t a replacement for the thinking and rational adults in kids’ lives.

Shapiro also thinks that children can tell the difference between a human and a voice coming from an electronic box, even if the devices’ impressive natural language abilities throw us adults off from time to time. “I tend to give kids a lot more credit and assume that they’re capable of ‘code switching’ and knowing that they’re interacting [with a device], but they don’t really think it’s a human,” he says. “Certainly, the concern about unfiltered content seems reasonable to me,” he adds, but what truly worries him is something else.

His biggest concerns are privacy and information sourcing. The voice gadgets are, even just during their active listening moments, gathering huge databases of information. This information becomes a very valuable asset, whether for marketing or other reasons, and it’s important to teach the youngsters this essential nuance. When we interact with voice gadgets, we are not talking to other humans or even to faithful assistants, but to technology that keeps tabs on us, typically for commercial gain. “So my provocative take was always this,” Shapiro says. “The last thing I want my kids to think is that they have to say ‘please’ to a surveillance system.”

So kids should learn that not thanking a smart device may not be that dumb after all. And I shouldn’t worry about offending Siri.

Lina Zeldovich grew up in a family of Russian scientists, listening to bedtime stories about volcanoes, black holes, and intrepid explorers. She has written for The New York Times, Scientific American, Reader’s Digest, and Audubon Magazine, among other publications, and won four awards for covering the science of poop. Her book, The Other Dark Matter: The Science and Business of Turning Waste into Wealth, was published in 2021 by the University of Chicago Press. You can find her at LinaZeldovich.com and @LinaZeldovich.

Lead image: Sharomka / Shutterstock