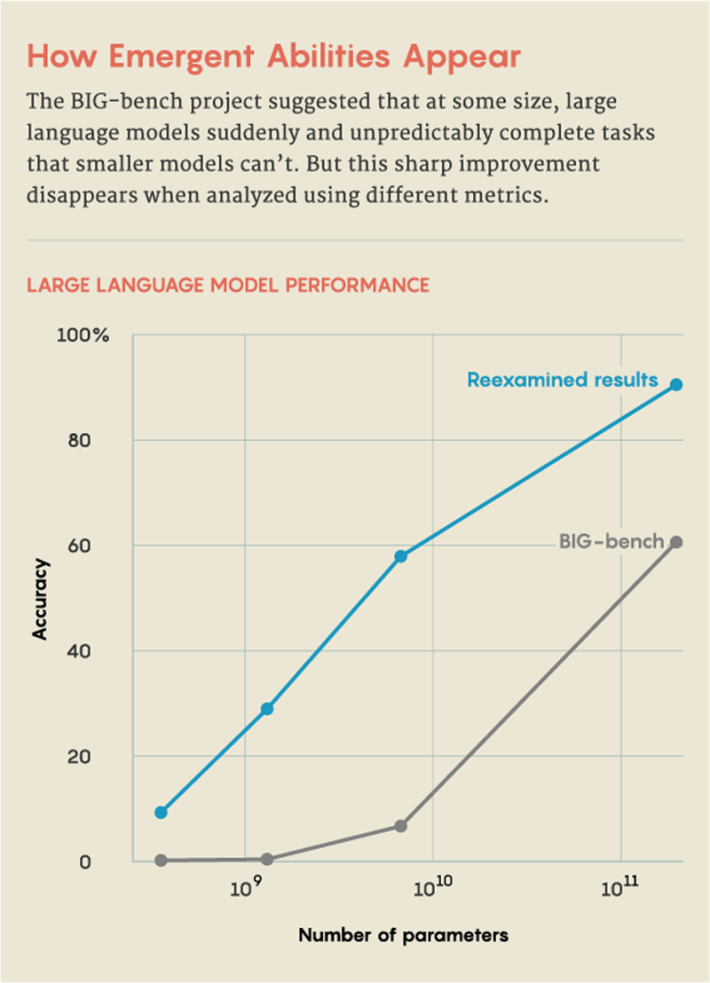

Two years ago, in a project called the Beyond the Imitation Game benchmark, or BIG-bench, 450 researchers compiled a list of 204 tasks designed to test the capabilities of large language models, which power chatbots like ChatGPT. On most tasks, performance improved predictably and smoothly as the models scaled up: the larger the model, the better it got. But on other tasks, the jump in ability wasn’t smooth. Performance remained near zero for a while, then suddenly jumped. Other studies found similar leaps in ability.

The authors described this as “breakthrough” behavior; other researchers have likened it to a phase transition in physics, like when liquid water freezes into ice. In a paper published in August 2022, researchers noted that these behaviors are not only surprising but unpredictable, and that they should inform the evolving conversations around AI safety, potential, and risk. They called the abilities “emergent,” a word that describes collective behaviors that only appear once a system reaches a high level of complexity.

But things may not be so simple. A new paper by a trio of researchers at Stanford University posits that the sudden appearance of these abilities is just a consequence of the way researchers measure an LLM’s performance. The abilities, they argue, are neither unpredictable nor sudden. “The transition is much more predictable than people give it credit for,” said Sanmi Koyejo, a computer scientist at Stanford and the paper’s senior author. “Strong claims of emergence have as much to do with the way we choose to measure as they do with what the models are doing.”

We’re only now seeing and studying this behavior because of how large these models have become. Large language models train by analyzing enormous datasets of text—words from online sources including books, web searches, and Wikipedia—and finding links between words that often appear together. The size is measured in terms of parameters, roughly analogous to all the ways that words can be connected. The more parameters, the more connections an LLM can find. GPT-2 had 1.5 billion parameters, while GPT-3.5, the LLM that powers ChatGPT, uses 350 billion. GPT-4, which debuted in March 2023 and now underlies Microsoft Copilot, reportedly uses 1.75 trillion.

That rapid growth has brought an astonishing surge in performance and efficacy, and no one is disputing that large enough LLMs can complete tasks that smaller models can’t, including ones for which they weren’t trained. The trio at Stanford who cast emergence as a “mirage” recognize that LLMs become more effective as they scale up; in fact, the added complexity of larger models should make it possible to get better at more difficult and diverse problems. But they argue that whether this improvement looks smooth and predictable or jagged and sharp depends on the choice of metric, or even on a paucity of test examples, rather than on the model’s inner workings.
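
To see how a shortage of test examples alone can make a weak model look as if it scores exactly zero, consider a toy calculation. It assumes each test question is answered correctly, independently, with a fixed probability; the 1 percent success rate, the test-set sizes, and the helper names below are hypothetical choices made for this sketch, not numbers or code from either paper.

```python
def prob_observed_zero(p_correct: float, n_examples: int) -> float:
    """Chance that a model which answers each question correctly with
    independent probability p_correct gets all n_examples wrong, so its
    measured accuracy is exactly zero."""
    return (1.0 - p_correct) ** n_examples


def accuracy_resolution(n_examples: int) -> float:
    """Smallest nonzero accuracy a test set of this size can report:
    one correct answer out of n_examples."""
    return 1.0 / n_examples


if __name__ == "__main__":
    p = 0.01  # hypothetical model that solves 1% of problems
    for n in (50, 500, 5000):
        print(f"{n:>5} examples: resolution {accuracy_resolution(n):.4f}, "
              f"P(measured accuracy = 0) = {prob_observed_zero(p, n):.3f}")
```

With only 50 problems, this hypothetical model would be scored at exactly zero about 60 percent of the time, even though its true accuracy is 1 percent; with 5,000 problems, a zero score becomes vanishingly unlikely.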

Three-digit addition offers an example. In the 2022 BIG-bench study, researchers reported that with fewer parameters, both GPT-3 and another LLM named LaMDA failed to accurately complete addition problems. However, once GPT-3 reached 13 billion parameters, its ability changed as if with the flip of a switch. Suddenly it could add, and LaMDA could too, at 68 billion parameters. This suggests that the ability to add emerges at a certain threshold.
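
A toy model shows how an all-or-nothing score can produce a threshold like this even when the underlying skill improves steadily. Suppose, purely as an assumption for this sketch, that a model’s chance of getting each digit right rises by the same amount for every tenfold increase in parameters, and that errors on different digits are independent. Exact-match accuracy on a three-digit answer is then the per-digit accuracy cubed. The curve and the function names are invented for illustration and are not fitted to GPT-3, LaMDA, or any real model.

```python
import math


def per_digit_accuracy(n_params: float) -> float:
    """Hypothetical smooth scaling curve: per-digit accuracy starts at 0.10
    at 10 million parameters and rises by 0.15 per tenfold increase,
    clipped to [0, 1]. Chosen for illustration only."""
    p = 0.10 + 0.15 * (math.log10(n_params) - 7)
    return min(max(p, 0.0), 1.0)


def exact_match_accuracy(p_digit: float, answer_len: int = 3) -> float:
    """All-or-nothing score: assuming independent digit errors, the answer
    counts only if every one of its answer_len digits is right."""
    return p_digit ** answer_len


if __name__ == "__main__":
    print(f"{'params':>8}  {'per-digit':>9}  {'exact match':>11}")
    for exponent in range(7, 14):
        p = per_digit_accuracy(10.0 ** exponent)
        print(f"{'1e' + str(exponent):>8}  {p:9.3f}  {exact_match_accuracy(p):11.3f}")
```

Under these made-up numbers, per-digit accuracy climbs in equal steps, while exact match stays below about 0.07 through 10^9 parameters and then rises steeply. Longer answers (a larger `answer_len`) make the all-or-nothing curve look even more like a switch.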

But the Stanford researchers point out that the LLMs were judged only on accuracy: Either they could do it perfectly, or they couldn’t. So even if an LLM predicted most of the digits correctly, it failed. That didn’t seem right. If you’re calculating 100 plus 278, then 376 seems like a much more accurate answer than, say, −9.34, even though neither matches the correct sum, 378.

So instead, Koyejo and his collaborators tested the same task using a metric that awards partial credit. “We can ask: How well does it predict the first digit? Then the second? Then the third?” he said.
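
A minimal sketch of the difference between the two scoring rules, using the 100 plus 278 example. The per-digit rule here, which compares the answer with the correct one position by position from the left, is one simple way to award partial credit and is an assumption of this sketch; it is not necessarily the exact metric the Stanford team used, and the function names are hypothetical.

```python
def exact_match(predicted: str, target: str) -> float:
    """All-or-nothing scoring: 1.0 only if the answer is exactly right."""
    return 1.0 if predicted.strip() == target.strip() else 0.0


def per_digit_credit(predicted: str, target: str) -> float:
    """Partial credit: the fraction of the target's characters, compared
    position by position from the left, that the prediction gets right.
    Missing characters earn nothing; extra characters are ignored."""
    predicted, target = predicted.strip(), target.strip()
    if not target:
        return 0.0
    correct = sum(
        1 for i, ch in enumerate(target) if i < len(predicted) and predicted[i] == ch
    )
    return correct / len(target)


if __name__ == "__main__":
    target = str(100 + 278)  # "378"
    for guess in ("378", "376", "-9.34"):
        print(guess, exact_match(guess, target), round(per_digit_credit(guess, target), 3))
    # 378 1.0 1.0
    # 376 0.0 0.667
    # -9.34 0.0 0.0
```

Under exact match, 376 and −9.34 are equally wrong; under per-digit credit, 376 earns two-thirds. One design caveat: comparing from the left misaligns answers that drop or add a leading digit, so a real evaluation might align digits from the right or use an edit distance instead.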

Koyejo credits the idea for the new work to his graduate student Rylan Schaeffer, who, he said, noticed that an LLM’s performance seems to change with how its ability is measured. Together with Brando Miranda, another Stanford graduate student, they chose new metrics showing that as parameters increased, the LLMs predicted an increasingly correct sequence of digits in addition problems. This suggests that the ability to add isn’t emergent, in the sense of undergoing a sudden, unpredictable jump, but is gradual and predictable. With a different measuring stick, they found, emergence vanishes.

But other scientists point out that the work doesn’t fully dispel the notion of emergence. For example, the trio’s paper doesn’t explain how to predict when, or on which metrics, an LLM will show abrupt improvement, said Tianshi Li, a computer scientist at Northeastern University. “So in that sense, these abilities are still unpredictable,” she said. Others, such as Jason Wei, a computer scientist now at OpenAI who has compiled a list of emergent abilities and was an author on the BIG-bench paper, have argued that the earlier reports of emergence were sound because for abilities like arithmetic, the right answer really is all that matters.

“There’s definitely an interesting conversation to be had here,” said Alex Tamkin, a research scientist at the AI startup Anthropic. The new paper deftly breaks down multistep tasks to recognize the contributions of individual components, he said. “But this is not the full story. We can’t say that all of these jumps are a mirage. I still think the literature shows that even when you have one-step predictions or use continuous metrics, you still have discontinuities, and as you increase the size of your model, you can still see it getting better in a jump-like fashion.”

And even if emergence in today’s LLMs can be explained away by different measuring tools, that likely won’t be the case for tomorrow’s larger, more complicated LLMs. “When we grow LLMs to the next level, inevitably they will borrow knowledge from other tasks and other models,” said Xia “Ben” Hu, a computer scientist at Rice University.

This evolving debate over emergence isn’t just an abstract question for researchers. For Tamkin, it speaks directly to ongoing efforts to predict how LLMs will behave. “These technologies are so broad and so applicable,” he said. “I would hope that the community uses this as a jumping-off point as a continued emphasis on how important it is to build a science of prediction for these things. How do we not get surprised by the next generation of models?”

This article was originally published on the Quanta Abstractions blog.

Lead image: Kristina Armitage/Quanta Magazine