In April of 2000, the journal Anesthesia & Analgesia published a letter to its editor from Peter Kranke and two colleagues that was fairly dripping with sarcasm. The trio of academic anesthesiologists took aim at an article published by a Japanese colleague named Yoshitaka Fujii, whose data on a drug to prevent nausea and vomiting after surgery were, they wrote, “incredibly nice.”

In the language of science, calling results “incredibly nice” is not a compliment—it’s tantamount to accusing a researcher of being cavalier, or even of fabricating findings. But rather than heed the warning, the journal, Anesthesia & Analgesia, punted. It published the letter to the editor, together with an explanation from Fujii, which asked, among other things, “how much evidence is required to provide adequate proof?” In other words, “Don’t believe me? Tough.” Anesthesia & Analgesia went on to publish 11 more of Fujii’s papers. One of the co-authors of the letter, Christian Apfel, then of the University of Würzburg, in Germany, went to the United States Food and Drug Administration to alert them to the issues he and his colleagues had raised. He never heard back.

Fujii, perhaps recognizing his good luck at being spared more scrutiny, mostly stopped publishing in the anesthesia literature in the mid-2000s. Instead, he focused on ophthalmology and otolaryngology, fields in which his near miss would be less likely to draw attention. By 2011, he had published more than 200 studies in total, a very healthy output for someone in his field. In December of that year, he published a paper in the Journal of Anesthesia. It was to be his last.

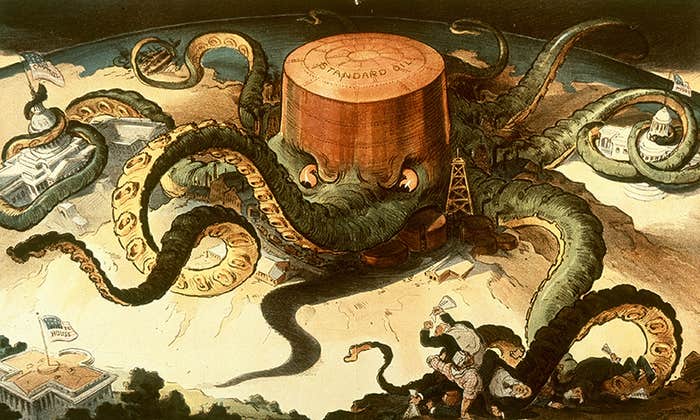

Over the next two years, it became clear that he had fabricated much of his research—most of it, in fact. Today he stands alone as the record-holder for most retractions by a single author, at a breathtaking 183, representing roughly 7 percent of all retracted papers between 1980 and 2011. His story represents a dramatic fall from grace, but also the arrival of a new dimension to scholarly publishing: Statistical tools that can sniff out fraud, and the “cops” that are willing to use them.

Steve Yentis already had a fairly strong background in research ethics when he became editor in chief of the journal Anaesthesia in 2009. He’d sat on and then chaired an academic committee on the subject and even gone back to school part-time for a master’s degree in medical ethics. He’d also been serving on the Committee on Publication Ethics, or COPE, an international group based in the United Kingdom devoted to improving standards in scholarly publishing.

Still, he said, he had “no real inkling that the ceiling was about to fall in” so soon after he assumed the journal’s top post. Like Anesthesia & Analgesia a decade earlier, Anaesthesia published an editorial, in 2010, about Fujii’s corpus of work. Its authors did not believe his findings, and they called on the field to conduct reviews of the literature to weed out bogus results.

As Yentis detailed in a later article, titled “Lies, damn lies, and statistics,” the editorial—which he himself had commissioned—prompted a flood of letters, including one by a reader “who bemoaned the fact that the evidence base remained distorted by that researcher’s work” and challenged anaesthetic journal editors to do something about it. The author of that letter was John Carlisle, a U.K. anesthetist.

The timing was fortuitous in a way. The field of anesthesiology was still reeling from an epic one-two punch of misconduct. The first hit involved Scott Reuben, a Massachusetts pain specialist who’d fabricated data in clinical trials—and wound up in federal prison for his crimes. The second, barely a year later, came in the form of Joachim Boldt, a prolific German researcher who was found to have doctored studies and committed other ethics violations that led to the retraction of nearly 90 papers.

Anaesthesia had published six of Boldt’s articles, and Yentis felt a bit chastened. So when he read Carlisle’s letter he saw an opportunity. He effectively told Carlisle to put his money where his keyboard was: “I counter-challenged the correspondent to perform an analysis of Fujii’s work,” Yentis says. Carlisle admits that he had no special expertise in statistics at the time, nor was he a particularly well-known anesthesiologist whose word carried weight among his colleagues. But his conclusion was simple, and impossible to ignore—it was extremely unlikely that a real set of experiments could have produced Fujii’s data.

Top-tier evidence in clinical medicine comes from randomized controlled trials, which are essentially statistical egg sorters that separate chance from the legitimate effects of a drug or other treatment. “The measurements that are usually analyzed are those subsequent to one group having a treatment and the other group having a placebo,” Carlisle explains. “The two unusual things that I did were to analyze differences between groups of variables that exist before the groups are given a treatment or a placebo (for instance weight), and to calculate the probability that differences would be less than observed, not more than observed.”
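The second of those ideas can be made concrete with a minimal sketch in Python. It is an illustration under stated assumptions, not Carlisle's published procedure: it assumes a baseline variable such as weight is reported as a mean and standard deviation for each group, it treats the difference between group means as approximately normal under random allocation, and the function name `prob_difference_smaller` is hypothetical. The numbers in the example are invented.

```python
import math


def prob_difference_smaller(mean_a, mean_b, sd_a, sd_b, n_a, n_b):
    """Probability that random allocation alone would produce a baseline
    difference in means no larger (in absolute value) than the one reported.

    Assumption: the difference in group means is approximately normal
    with mean zero when patients are truly randomized.
    """
    # Standard error of the difference between the two group means.
    se = math.sqrt(sd_a ** 2 / n_a + sd_b ** 2 / n_b)
    z = abs(mean_a - mean_b) / se
    # P(|Z| <= z) for a standard normal Z equals erf(z / sqrt(2)).
    return math.erf(z / math.sqrt(2))


# Invented example: two groups of 30 patients whose mean weights
# differ by only 0.1 kg, with a standard deviation of 8 kg in each.
p_too_close = prob_difference_smaller(62.0, 62.1, 8.0, 8.0, 30, 30)
print(f"Chance of groups this similar or more so: {p_too_close:.3f}")
```

A single small value proves nothing, since randomized groups do sometimes match closely by luck. The signal comes from seeing groups that are too well matched again and again across many variables and many trials.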

Carlisle compared the findings from 168 of Fujii’s “gold standard” clinical trials from 1991 to 2011 (an eyebrow-raising average of about eight papers a year) with what other investigators had previously reported, and what would be expected to occur by chance. He looked at factors ranging from patient height and blood pressure at the start of a study to rates of side effects associated with the drugs Fujii was reportedly testing.

Using these techniques, Carlisle concluded in a paper he published in Anaesthesia in 2012 that the odds of some of Fujii’s findings being experimentally derived were on the order of 10⁻³³, a hideously small number. As Carlisle dryly explained, there were “unnatural patterns” that “would support the conclusion that these data depart from those that would be expected from random sampling to a sufficient degree that they should not contribute to the evidence base.” In other words: If it seems too good to be true, math will tell you it probably is.
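How do probabilities get that small? One way to see it is to combine many individually modest probabilities into one. The sketch below uses Fisher's method, a standard textbook technique swapped in here for illustration; the article does not say this is how Carlisle combined his results. It assumes each input is an independent probability that would be uniformly distributed if the data came from genuine random sampling, and the function name `fisher_combined_p` and the example values are hypothetical.

```python
import math


def fisher_combined_p(p_values):
    """Combine independent p-values with Fisher's method.

    The statistic -2 * sum(ln p) follows a chi-square distribution with
    2k degrees of freedom under the null hypothesis. For even degrees of
    freedom the upper tail has a closed form, so no library is needed:
        P = exp(-t) * sum_{i=0}^{k-1} t**i / i!,  where t = -sum(ln p).
    """
    if not p_values or any(not (0.0 < p <= 1.0) for p in p_values):
        raise ValueError("p-values must be a non-empty list in (0, 1]")
    k = len(p_values)
    t = -sum(math.log(p) for p in p_values)
    term = 1.0   # running value of t**i / i!
    total = 1.0  # the i = 0 term
    for i in range(1, k):
        term *= t / i
        total += term
    return math.exp(-t) * total


# Invented example: twenty baseline comparisons, each only mildly
# suspicious on its own, versus twenty unremarkable ones.
print(fisher_combined_p([0.04] * 20))  # very small
print(fisher_combined_p([0.5] * 20))   # unremarkable
```

The design point is that no single comparison has to look damning. Twenty results that are each a one-in-25 coincidence add up to something that random sampling would almost never produce.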

Carlisle’s conclusion was much like that of the anesthesiologists who called out Fujii in 2000—only this time, people paid attention. Shortly after Carlisle’s findings appeared, a Japanese investigation concluded that only three of 212 published papers by Fujii contained clearly reliable data. For 38 others, evidence of fraud was inconclusive. Eventually 171 papers were deemed to have been wholly fabricated. As the Japanese report concluded: “It is as if someone sat at a desk and wrote a novel about a research idea.”

Carlisle’s statistical analysis technique isn’t limited to anesthesia, or even to science about people. “The method I used could be applied to anything, plant, animal, or mineral,” he says. “It requires only that the people, plants, or objects have been randomly allocated to different groups.” It would also be “fairly simple” for other scholarly journals to implement it.

At least one journal editor agrees. “It is still evolving, but John Carlisle’s basic approach is being generalized as a tool to detect research fraud,” says Steven Shafer, a Stanford anesthesiologist and current editor in chief of Anesthesia & Analgesia. Shafer, Yentis, and others are involved in that effort, and Carlisle plans to publish a revised methodology soon. One of the goals, Shafer says, is to automate the process. (Disclaimer: Shafer is on the board of directors of the Center for Scientific Integrity, home to Retraction Watch, which the authors of this article co-founded.)

Shafer says he personally used Carlisle’s method to identify a paper about insertion of breathing tubes, submitted to his journal in 2012, as fraudulent. He rejected it, then learned that it had been submitted to another journal. “Same paper, different data!” says Shafer. “The authors invented new numbers, since I implied in my rejection letters it was a fraudulent paper.” Shafer and his fellow editor sent a note to the authors’ department chair, who responded, “These people won’t be doing research anymore.”

Nor is the technique limited to catching fraudsters. Carlisle says groups like the Cochrane Collaboration—a U.K. body that conducts reviews and meta-analyses of the medical literature—could potentially use it to check the reliability of any pooled result. These meta-analyses, which pool findings from numerous studies of a particular intervention, such as drugs or surgery, are considered powerful evidence-based ways to inform treatment guidelines. If the data in the studies on which they’re based is flawed, however, you end up with “garbage in, garbage out,” making them useless.

But this is an approach that requires journal editors to be on board—and many of them are not. Some find reasons not to fix the literature. Authors, for their part, have taken to claiming that they are victims of “witch hunts.” It often takes a chorus of critiques on sites such as PubPeer.com, which allows anonymous comments on published papers, followed by press coverage, to generate any movement.

In 2009, for example, Bruce Ames, made famous by the tests for cancer-causing agents that bear his name, performed an analysis similar to Carlisle’s together with his colleagues. The target was a group of three papers authored by a team led by Palaninathan Varalakshmi. In marked contrast to what later resulted from Carlisle’s work, the three researchers fought back, calling Ames’ approach “unfair” and a conflation of causation and correlation. Varalakshmi’s editors sided with him. To this day, not a single one of the journals in which the accused researchers have published their work has done anything about the papers in question.

Sadly, this is the typical conclusion to a scholarly fraud investigation. The difficulty in pursuing fraudsters is partly the result of the process of scholarly publishing itself. It “has always been reliant on people rather than systems; the peer review process has its pros and cons but the ability to detect fraud isn’t really one of its strengths,” Yentis says.

Publishing is built on trust, and peer reviewers are often too rushed to look at original data even when it is made available. Nature, for example, asks authors “to justify the appropriateness of statistical tests and in particular to state whether the data meet the assumption of the tests,” according to executive editor Veronique Kiermer. Editors, she notes, “take this statement into account when evaluating the paper but do not systematically examine the distribution of all underlying datasets.” Similarly, peer reviewers are not required to examine dataset statistics.

When Nature went through a painful stem cell paper retraction last year, which led to the suicide of one of the key researchers, the journal maintained that “we and the referees could not have detected the problems that fatally undermined the papers.” It argued that it took post-publication peer review and an institutional investigation to uncover them. And pushing too hard can create real problems, Nature wrote in another editorial. Journals “might find themselves threatened with a lawsuit for the proposed retraction itself, let alone a retraction whose statement includes any reference to misconduct.”

Nature might be content to leave the heavy-duty police work to institutions, but Yentis has learned his lesson. While he commissioned the 2010 editorial that brought the red flags in Fujii’s work to the attention of his readers, he also all but ignored the editorial’s message. It took a brace of letters—including one from his eventual sleuth, Carlisle—to goad him into action, and as a result he didn’t publish the definitive analysis until 2012. “Were such an accusation to appear in an editorial now,” he says, “I wouldn’t let it go by me.”

Adam Marcus, the managing editor of Gastroenterology & Endoscopy News, and Ivan Oransky, global editorial director of MedPage Today, are the co-founders of Retraction Watch, generously supported by the John D. and Catherine T. MacArthur Foundation.