Optimization problems can be tricky, but they make the world work better. These kinds of questions, which strive for the best way of doing something, are absolutely everywhere. Your phone’s GPS calculates the shortest route to your destination. Travel websites search for the cheapest combination of flights that matches your itinerary. And machine learning applications, which learn by analyzing patterns in data, try to present the most accurate and humanlike answers to any given question.

For simple optimization problems, finding the best solution is just a matter of arithmetic. But the real-world questions that interest mathematicians and scientists are rarely simple. In 1847, the French mathematician Augustin-Louis Cauchy was working on a suitably complicated example—astronomical calculations—when he pioneered a common method of optimization now known as gradient descent. Most machine learning programs today rely heavily on the technique, and other fields also use it to analyze data and solve engineering problems.

Mathematicians have been perfecting gradient descent for over 150 years, but last month, a study showed that a basic assumption about the technique may be wrong. “There were just several times where I was surprised, [like] my intuition is broken,” said Ben Grimmer, an applied mathematician at Johns Hopkins University and the study’s sole author. His counterintuitive results showed that gradient descent can work nearly three times faster if it breaks a long-accepted rule for how to find the best answer for a given question. While the theoretical advance likely does not apply to the gnarlier problems tackled by machine learning, it has caused researchers to reconsider what they know about the technique.

“It turns out that we did not have full understanding” of the theory behind gradient descent, said Shuvomoy Das Gupta, an optimization researcher at the Massachusetts Institute of Technology. Now, he said, we’re “closer to understanding what gradient descent is doing.”

The technique itself is deceptively simple. It uses something called a cost function, which looks like a smooth, curved line meandering up and down across a graph. For any point on that line, the height represents cost in some way—how much time, energy, or error the operation will incur when tuned to a specific setting. The higher the point, the farther from ideal the system is. Naturally, you want to find the lowest point on this line, where the cost is smallest.

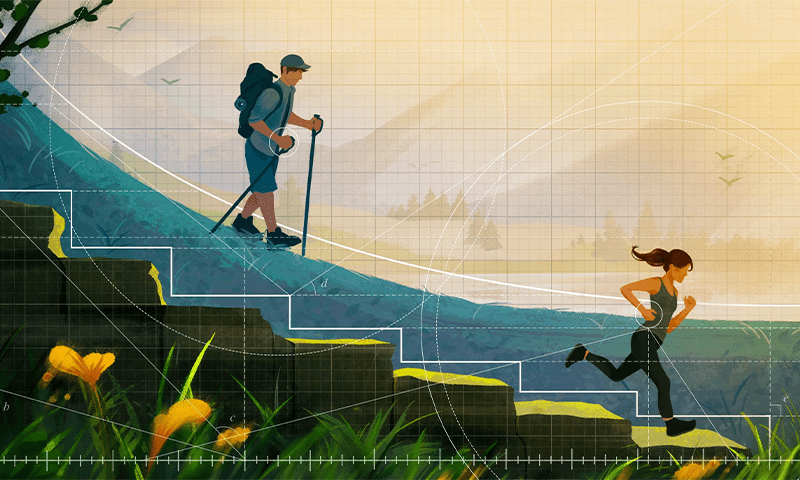

Gradient descent algorithms feel their way to the bottom by picking a point and calculating the slope (or gradient) of the curve around it, then moving downhill in the direction where the slope is steepest. Imagine this as feeling your way down a mountain in the dark. You may not know exactly where to move, how long you’ll have to hike, or how close to sea level you will ultimately get, but if you head down the sharpest descent, you should eventually arrive at the lowest point in the area.
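To make the downhill walk concrete, here is a minimal Python sketch of plain gradient descent. The cost function `(x - 3)**2`, the starting point, and the step size are all hypothetical choices made for illustration; none of them comes from Cauchy's or Grimmer's work.

```python
def gradient_descent(grad, x0, step_size, num_steps):
    """Repeatedly step against the slope, starting from x0."""
    x = x0
    for _ in range(num_steps):
        # Move downhill: subtract the slope scaled by the step size.
        x = x - step_size * grad(x)
    return x


def grad(x):
    """Slope of the hypothetical cost f(x) = (x - 3)**2."""
    return 2.0 * (x - 3.0)


# Start at x = 0 and take 100 small steps toward the bottom of the bowl.
x_min = gradient_descent(grad, x0=0.0, step_size=0.1, num_steps=100)
print(x_min)
```

Each step here shrinks the distance to the lowest point (at `x = 3`) by a fixed fraction, so the printed value ends up very close to 3.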

Unlike the metaphorical mountaineer, optimization researchers can program their gradient descent algorithms to take steps of any size. Giant leaps are tempting but also risky, as they could overshoot the answer. Instead, the field’s conventional wisdom for decades has been to take baby steps. In gradient descent equations, this means a step size no bigger than 2, though no one could prove that smaller step sizes were always better.
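The risk of overshooting can be seen on the simplest possible bowl. The sketch below assumes the cost `0.5 * x**2`, whose slope at `x` is just `x`, so that step sizes are measured against a curvature of 1. That normalization is an assumption about how the cap of 2 is meant, and the three step sizes tried are arbitrary illustrative values.

```python
def distance_after(step_size, num_steps=50, x0=1.0):
    """Run gradient descent on f(x) = 0.5 * x**2 and report |x| at the end."""
    x = x0
    for _ in range(num_steps):
        # The gradient of 0.5 * x**2 is x, so each step multiplies x by (1 - step_size).
        x = x - step_size * x
    return abs(x)


for step_size in (0.5, 1.9, 2.1):
    print(step_size, distance_after(step_size))
```

With a constant step below 2, every step multiplies the distance from the bottom by a number smaller than 1 in magnitude, so the walk closes in. With a constant step above 2, each step lands farther from the bottom than the last, and the walk runs away.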

With advances in computer-aided proof techniques, optimization theorists have begun testing more extreme techniques. In one study, first posted in 2022 and recently published in Mathematical Programming, Das Gupta and others tasked a computer with finding the best step lengths for an algorithm restricted to running only 50 steps, a sort of meta-optimization problem, since it was trying to optimize optimization. They found that the optimal 50 steps varied significantly in length, with one step in the middle of the sequence reaching a length of nearly 37, far above the typical cap of 2.

The findings suggested that optimization researchers had missed something. Intrigued, Grimmer sought to turn Das Gupta’s numerical results into a more general theorem. To get past an arbitrary cap of 50 steps, Grimmer explored what the optimal step lengths would be for a sequence that could repeat, getting closer to the optimal answer with each repetition. He ran the computer through millions of permutations of step length sequences, helping to find those that converged on the answer the fastest.

Grimmer found that the fastest sequences always had one thing in common: The middle step was always a big one. Its size depended on the number of steps in the repeating sequence. For a three-step sequence, the big step had length 4.9. For a 15-step sequence, the algorithm recommended one step of length 29.7. And for a 127-step sequence, the longest one tested, the big central leap was a whopping 370. At first that sounds like an absurdly large number, Grimmer said, but there were enough total steps to make up for that giant leap, so even if you blew past the bottom, you could still make it back quickly. His paper showed that this sequence can arrive at the optimal point nearly three times faster than it would by taking constant baby steps. “Sometimes, you should really overcommit,” he said.
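A toy calculation hints at why one oversized step can be absorbed by its neighbors. The sketch below is not Grimmer's proof technique. It assumes a simple quadratic cost, on which a step of size `h` multiplies the coordinate with curvature `c` by `1 - h * c`. The middle step of 4.9 is the figure quoted above; the flanking steps of 1.5 and the constant baseline of 1.0 are assumptions chosen for illustration.

```python
from math import prod


def cycle_factor(curvature, steps):
    """Factor by which one coordinate of a quadratic changes over one cycle.

    For f(x) = 0.5 * curvature * x**2, a gradient step of size h maps
    x to (1 - h * curvature) * x, so a full cycle multiplies x by the product.
    """
    return prod(1.0 - h * curvature for h in steps)


LONG = (1.5, 4.9, 1.5)   # 4.9 is from the article; the 1.5 steps are assumed
SHORT = (1.0, 1.0, 1.0)  # constant "baby step" baseline, also an assumption

for curvature in (1.0, 0.1, 0.01):
    print(curvature, cycle_factor(curvature, LONG), cycle_factor(curvature, SHORT))
```

Taken alone, the step of 4.9 in the steepest direction (curvature 1.0) multiplies the distance from the bottom by 3.9 in magnitude, a clear overshoot. Over the whole three-step cycle the factor is about -0.975, so the sequence still ends up closer than it started. In the flat direction (curvature 0.01), the long-step cycle shrinks the distance more than three constant steps of 1.0 do. This only describes a toy quadratic, and the comparison can go either way on other problems; the article's speedup refers to the guarantees proved in Grimmer's paper.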

This cyclical approach represents a different way of thinking of gradient descent, said Aymeric Dieuleveut, an optimization researcher at the École Polytechnique in Palaiseau, France. “This intuition, that I should think not step by step, but as a number of steps consecutively—I think this is something that many people ignore,” he said. “It’s not the way it’s taught.” (Grimmer notes that this reframing was also proposed for a similar class of problems in a 2018 master’s thesis by Jason Altschuler, an optimization researcher now at the University of Pennsylvania.)

However, while these insights may change how researchers think about gradient descent, they likely won’t change how the technique is currently used. Grimmer’s paper focused only on smooth functions, which have no sharp kinks, and convex functions, which are shaped like a bowl and only have one optimal value at the bottom. These kinds of functions are fundamental to theory but less relevant in practice; the optimization programs machine learning researchers use are usually much more complicated. These require versions of gradient descent that have “so many bells and whistles, and so many nuances,” Grimmer said.

Some of these souped-up techniques can go faster than Grimmer’s big-step approach, said Gauthier Gidel, an optimization and machine learning researcher at the University of Montreal. But these techniques come at an additional operational cost, so the hope has been that regular gradient descent could win out with the right combination of step sizes. Unfortunately, the new study’s threefold speedup isn’t enough.

“It shows a marginal improvement,” Gidel said. “But I guess the real question is: Can we really close this gap?”

The results also raise an additional theoretical mystery that has kept Grimmer up at night. Why did the ideal patterns of step sizes all have such a symmetric shape? Not only is the biggest step always smack in the center, but the same pattern appears on either side of it: Keep zooming in and subdividing the sequence, he said, and you get an “almost fractal pattern” of bigger steps surrounded by smaller steps. The repetition suggests an underlying structure governing the best solutions that no one has yet managed to explain. But Grimmer, at least, is hopeful.

“If I can’t crack it, someone else will,” he said.

This article was originally published on the Quanta Abstractions blog.

Lead image: Allison Li/Quanta Magazine