The poet John Donne immortalized the idea that “no man is an island,” but neither, it turns out, are most other species. Many natural and artificial systems are characterized by the collective, from neurons firing in sync and immune cells banding together, to schools of fish and flocks of birds moving in harmony, to new business models and robot designs operating in the absence of a single leader. “Collective systems are more the rule than the exception,” said Albert Kao, a researcher at the Santa Fe Institute who models decision-making in such systems.

Whether biological, technological, economic, or social, collective systems are often considered to be “decentralized,” meaning that they lack a main control hub for coordinating their individual components. Instead, control is distributed among the components, which make their own decisions based on local information; complex behaviors arise through their interactions. That kind of setup can be advantageous, in part because it is resilient: If one part isn’t working properly, the system can continue to function—in stark contrast to when a central brain or leader stops doing its job.

Decentralization has ridden a wave of hype, particularly among those hoping to revolutionize marketplaces with blockchain technology and societies with more dispersed governments. “Some of this stems from political ideology having to do with a preference for bottom-up governing styles and systems with natural checks on the emergence of inequality,” Jessica Flack, an evolutionary biologist and complexity scientist at the Santa Fe Institute, wrote in an email. “And some of it stems from engineering biases … that are based on the assumption these types of structures are more robust, less exploitable.”

But “most of this discussion,” she added, “is naive.” The line between centralization and decentralization is often blurry, and deep questions about the flow and aggregation of information in these networks persist. Even the most basic and intuitive assumptions about them need more scrutiny, because emerging evidence suggests that making networks bigger and making their parts more sophisticated doesn’t always translate to better overall performance.

In a paper published earlier this month in Science Advances, for instance, a team led by Neil Johnson, now a physicist at George Washington University, demonstrated that a decentralized model performed best under Goldilocks conditions, when its parts were neither too simple nor too capable. That finding echoes other results from complexity research about the optimal use of information and the tradeoff between independence and correlation. The new insights could help to point out the strengths and limitations of decentralized designs for robots, self-driving vehicles, medical treatments, and corporate structures—and might even help to explain aspects of natural evolution.

From the Marketplace to the Lab

Johnson’s investigation began as an attempt to understand the feedback loops in financial systems: Traders, each trying to maximize their profits while obeying certain rules, make decisions that contribute to a general outcome—say, some change in stock price—which in turn influences the traders’ subsequent decisions.

Then one day, while he was still on the faculty at the University of Miami, Johnson’s attention was caught by the work of a colleague toiling over something seemingly unrelated to Wall Street: the movements of fly larvae. A larva crawls automatically to positions that are neither too hot nor too cold, but it doesn’t rely on its brain to guide this journey. Instead, each of its body segments responds to signals from temperature-sensing neurons by compressing on one side or the other. The collective movement of all the segments causes the larva to turn. The resulting trajectories toward heat sources reminded Johnson of the financial models he worked with, so he decided to use those models to search for principles common to all decentralized systems.

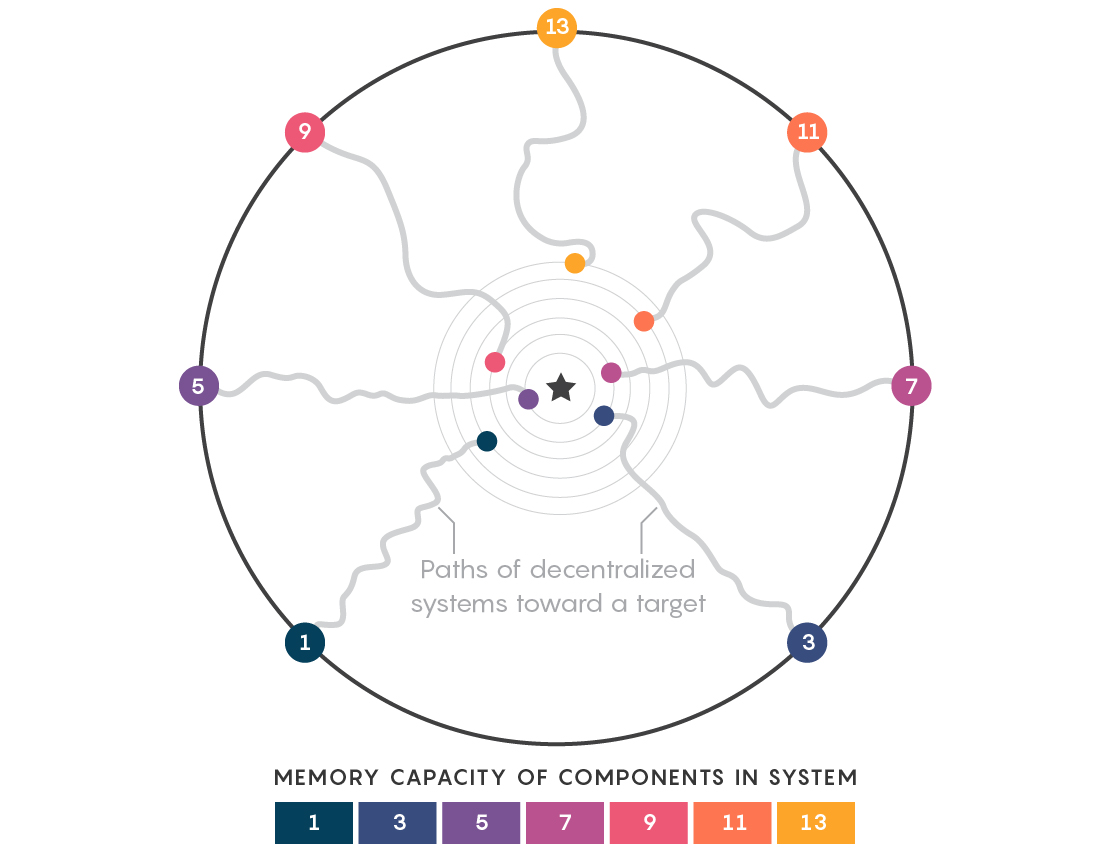

He and his team built a model that mimicked how the larvae behaved. Like the larva’s segments, the collection of agents in the model shared a common goal but had no way to communicate and coordinate their activities. Each agent chose repeatedly to move either left or right, based on whether past decisions had led the entire system to head closer to or farther away from a specified target. To guide its decision, each agent used a strategy drawn from a subset of possibilities assigned to it. If a given strategy performed well, the agent continued to use it; otherwise, it turned to a different one in its arsenal.
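For readers who want to see the mechanics, the setup described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the model from the paper: the one-dimensional track, the majority vote that moves the system one unit per round, the scoring rule, and every name and default value (`simulate`, `n_agents`, `memory`, `target`, and so on) are hypothetical choices made for clarity.

```python
import random

def simulate(n_agents=11, memory=3, n_strategies=2, target=20,
             max_steps=500, seed=0):
    """Toy leaderless system: agents vote left/right with no communication.

    Assumptions (not from the paper): a 1-D track, a majority vote that
    moves the system one unit per round, and +1/-1 strategy scoring.
    """
    rng = random.Random(seed)
    n_histories = 2 ** memory  # distinct closer/farther sequences of length `memory`

    # Each agent holds a few strategies; a strategy is a lookup table that
    # maps every possible recent history to a move (-1 = left, +1 = right).
    agents = []
    for _ in range(n_agents):
        strategies = [[rng.choice((-1, 1)) for _ in range(n_histories)]
                      for _ in range(n_strategies)]
        agents.append({"strategies": strategies, "scores": [0] * n_strategies})

    history = rng.randrange(n_histories)  # recent outcomes packed into bits
    position = 0
    path = [position]

    for _ in range(max_steps):
        if position == target:
            break

        # Each agent plays whichever of its strategies has scored best so far.
        votes = []
        for agent in agents:
            best = agent["scores"].index(max(agent["scores"]))
            votes.append(agent["strategies"][best][history])

        step = 1 if sum(votes) > 0 else -1  # majority decides (ties go left)
        old_distance = abs(target - position)
        position += step
        closer = abs(target - position) < old_distance

        # Reward every strategy that voted for the direction leading closer.
        good_move = step if closer else -step
        for agent in agents:
            for i, strategy in enumerate(agent["strategies"]):
                agent["scores"][i] += 1 if strategy[history] == good_move else -1

        # Slide the memory window: drop the oldest outcome, append the newest.
        history = ((history << 1) | int(closer)) % n_histories
        path.append(position)

    return path

if __name__ == "__main__":
    for m in (1, 3, 5):
        print("memory", m, "rounds played:", len(simulate(memory=m)) - 1)
```

Calling `simulate` with different `memory` values gives a feel for how the route to the target changes, though a sketch this crude should not be expected to reproduce the paper's numbers.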

The researchers observed that when the agents could remember only one or two outcomes, fewer strategies were possible, so more agents responded in the same way. But because the agents’ actions were then too correlated, the collective movement in the model took it along a zigzagging route that involved many more steps than necessary to reach the target. Conversely, when the agents remembered seven or more past outcomes, they became too uncorrelated: They tended to stick with the same strategy for more rounds, treating a short string of recent negative outcomes as an exception rather than a trend. The model became less agile and more “stubborn,” according to Johnson.
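A quick count shows why short memories crowd agents together. The sketch below assumes, as is common in models of this kind but not spelled out here, that a strategy is a lookup table assigning "left" or "right" to every possible sequence of recent closer-or-farther outcomes; the function name `count_strategies` is a hypothetical label.

```python
def count_strategies(memory: int) -> int:
    """Number of distinct lookup-table strategies for a given memory length.

    Assumes binary outcomes (closer/farther) and binary moves (left/right).
    """
    n_histories = 2 ** memory   # possible sequences of the last `memory` outcomes
    return 2 ** n_histories     # one left/right choice per possible sequence

if __name__ == "__main__":
    for m in (1, 2, 5, 7):
        print(f"memory {m}: {count_strategies(m)} possible strategies")
```

Under that assumption, a memory of one outcome allows only 4 strategies and a memory of two allows 16, so a sizable group of agents is bound to overlap. At seven outcomes the count is 2 to the 128th power, and overlap between agents becomes vanishingly unlikely.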

The trajectories were most efficient when the length of the agents’ memory was somewhere in the middle, at about five past events. This number grew slightly as the number of agents increased, but no matter how many agents the model used, there was always a sweet spot: an upper limit on how good their memory could get before the system started to perform poorly.

“It’s counterintuitive,” said Pedro Manrique, a postdoctoral associate at the University of Miami and a co-author of the Science Advances paper. “You would think that improving the sophistication level of the parts, in this case the memory, would improve and improve and improve the performance of the organism as a whole.”

A Second Wave

Kao sees a striking connection between Johnson’s and Manrique’s findings and his own work on the behavior of crowds. Over the past few years, he and others have found that medium-size groups of animals or humans are optimal for decision-making. That conclusion runs contrary to the standard beliefs about the “wisdom of crowds,” Kao said, “where the larger the group, the better the collective performance.” Success lies in achieving the right balance between coordination and independence among the system’s components.

“It’s like a second wave of this kind of research,” Kao said. “The first wave was naive enthusiasm for these collective systems. Now, it feels like … we’re questioning a lot of the assumptions we made initially and finding more complex behaviors.”

Further research is needed on how the sophistication of components, their interconnectivity, and other parameters affect the overall robustness and limitations of a network. Johnson and others plan to study how the availability of information affects phenomena as diverse as the formation of opinions among voters, the behavior of better robots, and potential mechanisms of recovery from neurological diseases. They also hope the work could help explain why natural evolution has made organisms a mix of centralized and decentralized systems (these kinds of results, Johnson said, could help to justify “why we aren’t just fantastic larvae”).

Ultimately, complexity in nature isn’t simply a story of its emergence from entirely uncorrelated groups of dumb parts—and probing that story could one day yield more universal principles about cooperation, coordination, and collective information processing.

Lead image: This swooping murmuration of starlings, photographed in Spain in 2018, moves as though the hundreds of birds were being centrally directed, but each one makes its own choices. Research has found that adding intelligence to the individual agents in decentralized systems doesn’t always make their collective behaviors more complex. Credit: ©Joaquin