So far this year, Quanta Magazine has chronicled three major advances in Ramsey theory, the study of how to avoid creating mathematical patterns. The first result put a new cap on how big a set of integers can be without containing three evenly spaced numbers, like {2, 4, 6} or {21, 31, 41}. The second and third similarly put new bounds on the size of networks without clusters of points that are either all connected, or all isolated from each other.

The proofs address what happens as the numbers involved grow infinitely large. Paradoxically, this can sometimes be easier than dealing with pesky real-world quantities.

For example, consider two questions about a fraction with a really big denominator. You might ask what the decimal expansion of, say, 1/42503312127361 is. Or you could ask if this number will get closer to zero as the denominator grows. The first question is a specific question about a real-world quantity, and it’s harder to calculate than the second, which asks how the quantity 1/n will “asymptotically” change as n grows. (It gets closer and closer to 0.)

“This is a problem plaguing all of Ramsey theory,” said William Gasarch, a computer scientist at the University of Maryland. “Ramsey theory is known for having asymptotically very nice results.” But analyzing numbers that are smaller than infinity requires an entirely different mathematical toolbox.

Gasarch has studied questions in Ramsey theory involving finite numbers that are too big for the problem to be solved by brute force. In one project, he took on the finite version of the first of this year’s breakthroughs—a February paper by Zander Kelley, a graduate student at the University of Illinois, Urbana-Champaign, and Raghu Meka of the University of California, Los Angeles. Kelley and Meka found a new upper bound on how many integers between 1 and N you can put into a set while avoiding three-term progressions, or patterns of evenly spaced numbers.

Though Kelley and Meka’s result applies even if N is relatively small, it doesn’t give a particularly useful bound in that case. For very small values of N, you’re better off sticking to very simple methods. If N is, say, 5, just look at all the possible sets of numbers between 1 and N, and pick out the biggest progression-free one: {1, 2, 4, 5}.
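The check-everything approach for small N can be sketched in a few lines of Python. This is only an illustration of the brute-force idea, not code from any of the researchers; the function names are made up for this example.

```python
from itertools import combinations

def has_three_term_progression(numbers):
    """Return True if some a < b < c in `numbers` are evenly spaced."""
    members = set(numbers)
    for a, b in combinations(sorted(members), 2):
        # If a and b are the first two terms, the third is b + (b - a).
        if 2 * b - a in members:
            return True
    return False

def largest_progression_free_set(n):
    """Try every subset of {1, ..., n}, largest first, and
    return the first one with no three-term progression."""
    universe = range(1, n + 1)
    for size in range(n, -1, -1):
        for candidate in combinations(universe, size):
            if not has_three_term_progression(candidate):
                return set(candidate)

print(sorted(largest_progression_free_set(5)))  # [1, 2, 4, 5]
```

Because the loop visits every subset in the worst case, the running time roughly doubles each time N grows by one.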

But the number of different possible answers grows very quickly and makes it too difficult to employ such a simple strategy. There are more than 1 million sets consisting of numbers between 1 and 20. There are over 10^60 using numbers between 1 and 200. Finding the best progression-free set for these cases takes a hefty dose of computing power, even with efficiency-improving strategies. “You need to be able to squeeze a lot of performance out of things,” said James Glenn, a computer scientist at Yale University. In 2008, Gasarch, Glenn, and Clyde Kruskal of the University of Maryland wrote a program to find the biggest progression-free sets up to an N of 187. (Previous work had gotten the answers up to 150, as well as for 157.) Despite a roster of tricks, their program took months to finish, Glenn said.
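The counts quoted here come from the fact that each number between 1 and N is either in a set or out of it, giving 2^N possible sets (counting the empty one). A quick check, with a helper name invented for this sketch:

```python
def count_subsets(n):
    """Number of subsets of {1, ..., n}: each number is either in or out."""
    return 2 ** n

print(count_subsets(20))              # 1048576, just over 1 million
print(count_subsets(200) > 10 ** 60)  # True
```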

To lessen their computational load, the team used simple tests that prevented their program from pursuing dead-end searches and split their sets into smaller parts that they analyzed separately.
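The article does not spell out the team's actual tests, so the following is only a generic sketch of what cutting off dead-end searches can look like. It decides, number by number, whether to include each value, and it abandons a branch as soon as the numbers still available could not beat the best set found so far. All names are hypothetical, and the splitting-into-parts idea is not shown.

```python
def max_progression_free(n):
    """Largest subset of {1, ..., n} with no three evenly spaced numbers,
    found by a backtracking search with a simple size bound."""
    best = []

    def creates_progression(chosen_set, x):
        # x is larger than everything chosen so far, so it could only be
        # the last term of a progression a, b, x with a = 2b - x.
        return any(2 * b - x in chosen_set for b in chosen_set)

    def search(x, chosen, chosen_set):
        nonlocal best
        # Dead-end test: even taking every remaining number
        # cannot beat the current record.
        if len(chosen) + (n - x + 1) <= len(best):
            return
        if x > n:
            best = list(chosen)  # strictly better than the old record
            return
        if not creates_progression(chosen_set, x):
            chosen.append(x)
            chosen_set.add(x)
            search(x + 1, chosen, chosen_set)  # branch: include x
            chosen.pop()
            chosen_set.remove(x)
        search(x + 1, chosen, chosen_set)      # branch: skip x

    search(1, [], set())
    return best

print(max_progression_free(5))  # [1, 2, 4, 5]
```

Even with this kind of pruning the search remains exponential; the bound merely trims branches that cannot possibly win.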

Gasarch, Glenn, and Kruskal also tried several other strategies. One promising idea leaned on randomness. A simple way to come up with a progression-free set is to put 1 in your set, then always add the next number that doesn’t create an arithmetic progression. Follow this procedure until you hit the number 10, and you’ll get the set {1, 2, 4, 5, 10}. But it turns out this isn’t the best strategy in general. “What if we don’t start at 1?” Gasarch said. “If you start at a random place, you actually do better.” Researchers have no idea why randomness is so useful, he added.
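The add-the-next-number procedure is easy to write down. In the sketch below, `start=1` reproduces the procedure described above. The random-start variant is only one possible reading of Gasarch's remark: it is an assumption of this example that the numbers are visited upward from the starting point and then wrapped around to 1, and the function names are invented here.

```python
import random

def creates_progression(chosen, x):
    """Return True if adding x to `chosen` would complete
    three evenly spaced numbers."""
    for a in chosen:
        # x at one end, a in the middle: the other end is 2a - x.
        if 2 * a - x in chosen:
            return True
        # x in the middle, a at one end: the other end is 2x - a.
        if 2 * x - a in chosen:
            return True
    return False

def greedy_progression_free(limit, start=1):
    """Visit start, start+1, ..., limit, then 1, ..., start-1, keeping
    every number that does not create a progression."""
    order = list(range(start, limit + 1)) + list(range(1, start))
    chosen = set()
    for x in order:
        if not creates_progression(chosen, x):
            chosen.add(x)
    return sorted(chosen)

print(greedy_progression_free(10))  # [1, 2, 4, 5, 10]

# Assumed random-start variant; the output varies from run to run.
print(greedy_progression_free(10, start=random.randint(1, 10)))
```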

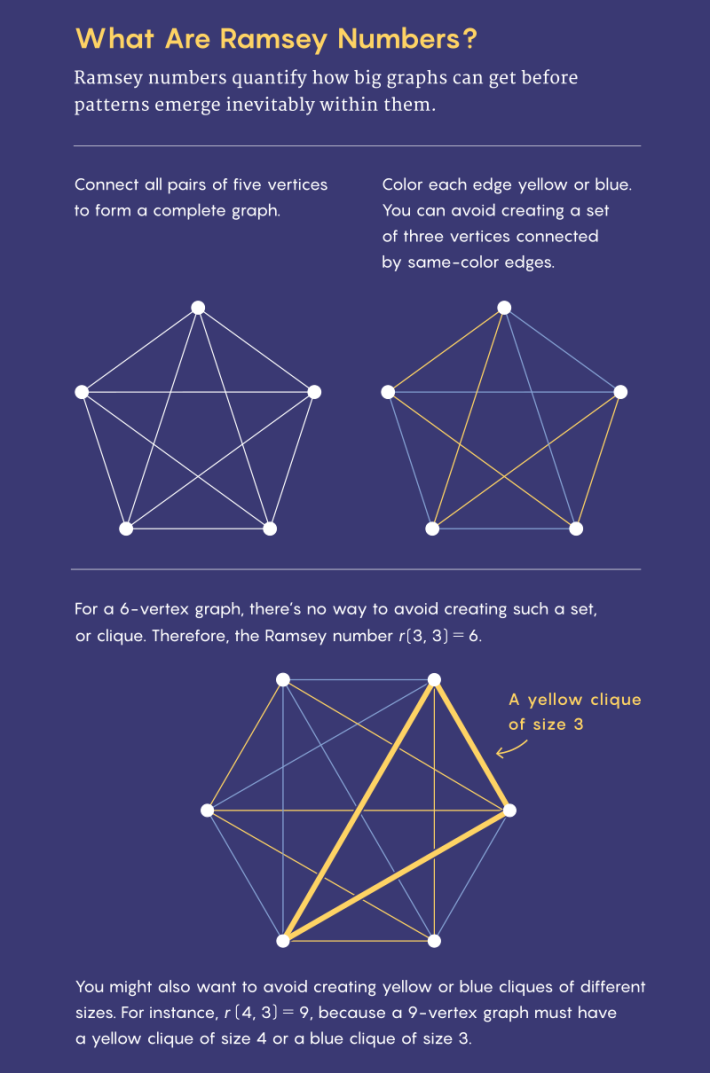

Calculating the finite versions of the two other new Ramsey theory results is even more vexing than determining the size of progression-free sets. Those results concern mathematical networks (called graphs) made up of nodes connected by lines called edges. The Ramsey number r(s, t) is the smallest number of nodes a graph must have before it becomes impossible to avoid including either a group of s connected nodes or t disconnected ones. The Ramsey number is such a headache to compute that even r(5, 5) is unknown—it’s somewhere between 43 and 48.
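For the very smallest cases, the definition of r(s, t) can be checked directly by trying every graph. The sketch below does exactly that, with vertices numbered from 0 and edges stored as pairs (u, v) with u < v; the function names are this example's own. It recovers the well-known value r(3, 3) = 6, but the same loop is hopeless for r(5, 5), since the number of graphs on n nodes is 2 raised to the number of pairs of nodes.

```python
from itertools import combinations

def has_clique_or_independent_set(n, edges, s, t):
    """True if the graph has s mutually connected nodes
    or t mutually disconnected nodes."""
    for group in combinations(range(n), s):
        if all(pair in edges for pair in combinations(group, 2)):
            return True
    for group in combinations(range(n), t):
        if not any(pair in edges for pair in combinations(group, 2)):
            return True
    return False

def every_graph_is_forced(n, s, t):
    """Check all graphs on n nodes; each bit of `mask` switches one edge on."""
    pairs = list(combinations(range(n), 2))
    for mask in range(1 << len(pairs)):
        edges = {p for i, p in enumerate(pairs) if (mask >> i) & 1}
        if not has_clique_or_independent_set(n, edges, s, t):
            return False  # found a graph that avoids both patterns
    return True

def ramsey_number(s, t):
    """Smallest n at which avoiding both patterns becomes impossible."""
    n = 1
    while not every_graph_is_forced(n, s, t):
        n += 1
    return n

print(ramsey_number(3, 3))  # 6
```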

In 1981, Brendan McKay, now a computer scientist at Australian National University, wrote a software program called nauty, which was intended to make calculating Ramsey numbers simpler. Nauty ensures that researchers don’t waste time checking two graphs that are just flipped or rotated versions of one another. “If somebody’s in the area and is not using nauty, the game is over. You must use it,” said Stanisław Radziszowski, a mathematician at the Rochester Institute of Technology. Still, the amount of computation involved is almost incomprehensible. In 2013, Radziszowski and Jan Goedgebeur proved that r(3, 10) is at most 42. “It took, I think, almost 50 CPU years,” said Goedgebeur, a computer scientist at KU Leuven University in Belgium.
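To see why skipping relabeled copies matters, here is a toy illustration. It does not use nauty or its algorithm; it simply tries every relabeling of the nodes and keeps the smallest edge list as a fingerprint, which is only feasible for a handful of nodes. The function names are invented for this sketch.

```python
from itertools import combinations, permutations

def canonical_form(n, edges):
    """Smallest sorted edge list over all relabelings of the n nodes.
    Two graphs get the same result exactly when one is a relabeled
    copy of the other."""
    best = None
    for perm in permutations(range(n)):
        relabeled = tuple(sorted(
            tuple(sorted((perm[u], perm[v]))) for u, v in edges
        ))
        if best is None or relabeled < best:
            best = relabeled
    return best

def count_distinct_graphs(n):
    """Count graphs on n nodes, treating relabeled copies as the same graph."""
    pairs = list(combinations(range(n), 2))
    seen = set()
    for mask in range(1 << len(pairs)):
        edges = [p for i, p in enumerate(pairs) if (mask >> i) & 1]
        seen.add(canonical_form(n, edges))
    return len(seen)

print(2 ** 6, count_distinct_graphs(4))  # 64 11
```

On four nodes, 64 labeled graphs collapse to 11 genuinely different ones, and the savings grow rapidly with the number of nodes.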

If you can’t compute an exact Ramsey number, you can try narrowing down its value with examples. If you found a 45-node graph without five nodes that were all connected and without five nodes that were all disconnected, that would prove that r(5, 5) is bigger than 45. Mathematicians studying Ramsey numbers used to think that finding those examples, called Ramsey graphs, would be simple, Radziszowski said. But it wasn’t so. “There was this expectation that nice, cool mathematical constructions will give the best possible constructions, and we just need more people to work on it,” he said. “My feeling is more and more that it’s chaotic.”
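The same witness argument can be run on a case small enough to check. The sketch below uses the Paley graph on 17 nodes, a standard construction that the article does not mention: two nodes are joined when their difference is a nonzero square modulo 17. Verifying that it has no four mutually connected nodes and no four mutually disconnected ones shows that r(4, 4) is bigger than 17. The function names are this example's own.

```python
from itertools import combinations

def paley_graph_edges(p):
    """Edges (u, v), u < v, joining nodes whose difference is a nonzero
    square mod p. Assumes p is a prime with p % 4 == 1."""
    squares = {(x * x) % p for x in range(1, p)}
    return {(u, v) for u, v in combinations(range(p), 2)
            if (v - u) % p in squares}

def is_ramsey_graph(n, edges, s, t):
    """True if the graph has no s mutually connected nodes
    and no t mutually disconnected nodes."""
    for group in combinations(range(n), s):
        if all(pair in edges for pair in combinations(group, 2)):
            return False
    for group in combinations(range(n), t):
        if not any(pair in edges for pair in combinations(group, 2)):
            return False
    return True

# A valid 17-node witness proves r(4, 4) > 17.
print(is_ramsey_graph(17, paley_graph_edges(17), 4, 4))  # True
```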

Randomness is both an obstacle to understanding and a useful tool. Geoffrey Exoo, a computer scientist at Indiana State University, has spent years refining random methods to generate Ramsey graphs. In a 2015 paper announcing dozens of new, record-beating Ramsey graphs, Exoo and Milos Tatarevic generated random graphs and then gradually tweaked them by deleting or adding edges that reduced the number of unwanted clusters until they found a Ramsey graph. Exoo’s techniques are as much an art as anything, though, Radziszowski said. They sometimes require him to combine multiple methods, or to use judgment about what kind of graphs to start with. “Many, many people try it, and they cannot do it,” Radziszowski said.
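A bare-bones version of the generate-and-tweak idea might look like the following. This is not Exoo and Tatarevic's method, whose details the article does not give. It is a plain local search, under the assumption that a random edge is flipped and the flip is kept whenever the count of unwanted clusters does not go up, with a fresh random graph after too many steps. The names and parameters are hypothetical.

```python
import random
from itertools import combinations

def count_bad_groups(n, edges, s, t):
    """Count groups of s mutually connected nodes
    plus groups of t mutually disconnected nodes."""
    bad = 0
    for group in combinations(range(n), s):
        if all(pair in edges for pair in combinations(group, 2)):
            bad += 1
    for group in combinations(range(n), t):
        if not any(pair in edges for pair in combinations(group, 2)):
            bad += 1
    return bad

def search_ramsey_graph(n, s, t, rng, max_restarts=2000, steps_per_restart=50):
    """Random restarts plus edge flips; returns an edge set or None."""
    pairs = list(combinations(range(n), 2))
    for _ in range(max_restarts):
        edges = {p for p in pairs if rng.random() < 0.5}  # random start
        score = count_bad_groups(n, edges, s, t)
        for _ in range(steps_per_restart):
            if score == 0:
                return edges
            pair = rng.choice(pairs)
            edges ^= {pair}  # add the edge if absent, delete it if present
            new_score = count_bad_groups(n, edges, s, t)
            if new_score <= score:
                score = new_score  # keep flips that do not make things worse
            else:
                edges ^= {pair}    # undo a harmful flip
        if score == 0:
            return edges
    return None

graph = search_ramsey_graph(5, 3, 3, random.Random(0))
print(sorted(graph))  # a 5-node graph showing r(3, 3) > 5
```

On five nodes this succeeds almost immediately. The record-setting searches are far larger, which is where the judgment Radziszowski describes comes in.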

The techniques developed to generate Ramsey graphs could be more broadly useful someday, said Goedgebeur, who has worked on producing other kinds of graphs, such as graphs that represent chemical compounds. “It is not unlikely that these techniques can also be transferred and adjusted to help generate other classes of graphs more efficiently (and vice versa),” he wrote in an email.

To Radziszowski, however, the reason for studying the small Ramsey numbers is much simpler. “Because it’s open, because nobody knows what the answer is,” he said. “The trivial cases we do by hand; a little larger, you need a computer, and a little larger, even the computer is not good enough. And so the challenge emerges.”

This article was originally published on the Quanta Abstractions blog.

Lead image: Kristina Armitage/Quanta Magazine