The 1980s at the MIT Computer Science and Artificial Intelligence Laboratory seemed to outsiders like a golden age, but inside, David Chapman could already see that winter was coming. As a member of the lab, Chapman was the first researcher to apply the mathematics of computational complexity theory to robot planning and to show mathematically that there could be no feasible, general method of enabling AIs to plan for all contingencies. He concluded that while human-level AI might be possible in principle, none of the available approaches had much hope of achieving it.

In 1990, Chapman wrote a widely circulated research proposal suggesting that researchers take a fresh approach and attempt a different kind of challenge: teaching a robot how to dance. Dancing, wrote Chapman, was an important model because “there’s no goal to be achieved. You can’t win or lose. It’s not a problem to be solved…. Dancing is paradigmatically a process of interaction.” Dancing robots would require a sharp change in practical priorities for AI researchers, whose techniques were built around tasks, like chess, with a rigid structure and unambiguous end goals. The difficulty of creating dancing robots would also require an even deeper change in our assumptions about what characterizes intelligence.

Chapman now writes about the practical implications of philosophy and cognitive science. In a recent conversation with Nautilus, he spoke about the importance of imitation and apprenticeship, the limits of formal rationality, and why robots aren’t making your breakfast.

What makes a dancing robot interesting?

Human learning is always social, embodied, and occurs in specific practical situations. Mostly, you don’t learn to dance by reading a book or by doing experiments in a laboratory. You learn it by dancing with people who are more skilled than you.

Imitation and apprenticeship are the main ways people learn. We tend to overlook that because classroom instruction has become newly important in the past century, and so more salient.

I aimed to shift emphasis from learning toward development. “Learning” implies completion: once you have learned something, you are done. “Development” is an ongoing, open-ended process. There is no final exam in dancing, after which you stop learning.

That was quite a shift from how AI researchers traditionally approached learning, wasn’t it?

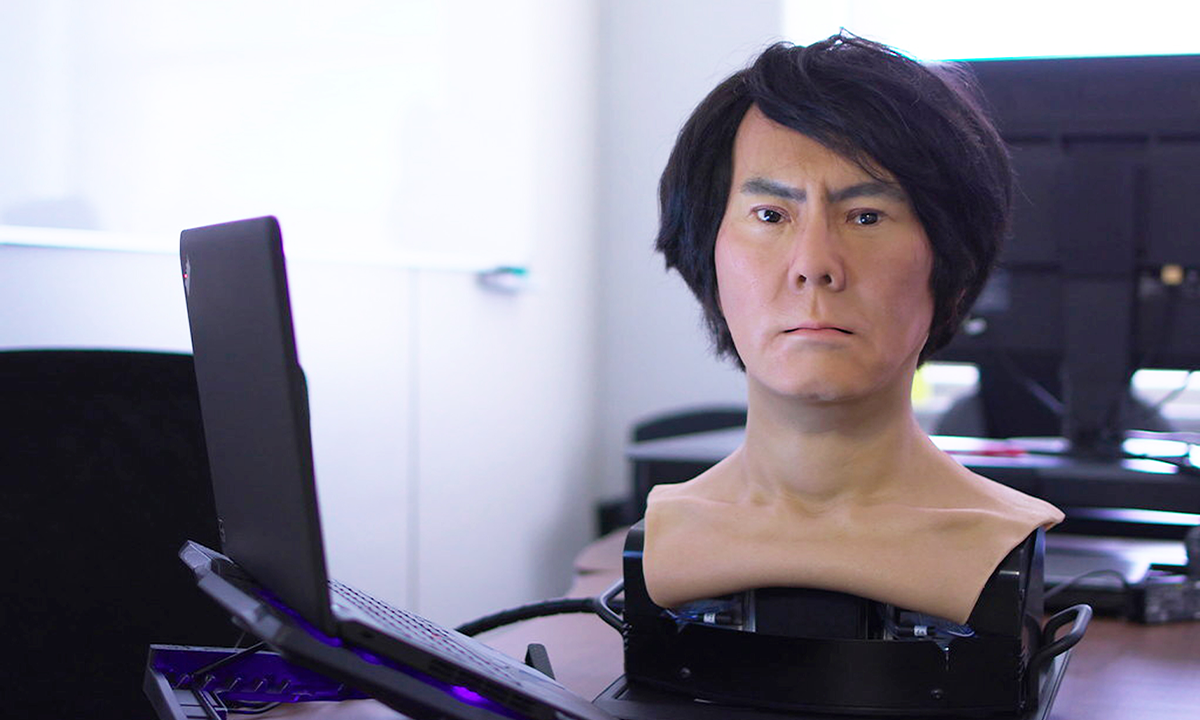

Yes. In its first few decades, artificial intelligence research concentrated on tasks we consider particular signs of intelligence because they are difficult for people: chess, for example. It turned out that chess is easy for fast-enough computers. Early work neglected tasks that are easy for people: making breakfast, for instance. Such easy tasks turned out to be difficult for computers controlling robots.

Early AI learning research also looked at formal, chess-like problems, where we could ignore bodies and the social and practical contexts. Recent research has made impressive progress on practical, real-world problems, like visual recognition. But it still makes no use of the social and bodily resources that are critical to human learning.

What can Heidegger teach us about intelligence and learning?

Formal rationality, as used in science, engineering, and mathematics, has propelled dramatic improvements in our lives over the past few centuries. It’s natural to take it as the essence of intelligence, and then to suppose that it underlies all human activity. For decades, analytic philosophers, cognitive psychologists, and AI researchers accepted without question that you act by first constructing a rational plan using logic, and then executing the plan. In the mid-1980s, though, it became apparent that this is usually impossible, for technical reasons.

The philosopher Hubert Dreyfus anticipated this technical impasse by more than a decade in his book What Computers Can’t Do. He drew on Heidegger’s analysis of routine practical activities, such as making breakfast. Such embodied skills do not seem to involve formal rationality. Furthermore, our ability to engage in formal reasoning relies on our ability to engage in practical, informal, and embodied activities—not vice versa. Cognitive science had it exactly backward! Heidegger suggested most of life is like breakfast, and very unlike chess.

My colleague Phil Agre and I developed new, interactive computational approaches to practical action that did not involve formal reasoning, and demonstrated that they could be much more effective than the traditional logical paradigm. Our systems had to be programmed manually, however, which seemed infeasible for tasks more difficult than playing a video game. AI systems that develop skills without being programmed explicitly would have to be the next step.

Heidegger didn’t have much to say about learning, but his insight that human activity is always social was an important clue. Phil and I took inspiration from several schools of anthropology, sociology, and social and developmental psychology (some of which had, in turn, been inspired partly by Heidegger). We began working toward a computational theory of learning by apprenticeship. “Robots That Dance” sketched parts of that. Shortly thereafter, we realized that turning these ideas into working programs was not yet feasible.

Building a physically embodied robot adds a lot of challenges—such as keeping the robot from falling over—that seem tangential to learning and intelligence. Why not start instead with a “dancing robot” as an animation on a screen?

One difficulty for the rationalist approach to AI is that we can never make an entirely accurate model of the real world. It’s just too messy. A spoonful of blueberry jam has no particular shape. It’s sticky and squishy and somewhat runny. It’s not uniform; partially squashed berries don’t behave the same way as the more liquid bits. At the atomic level, it conforms to the laws of physics, but trying to make breakfast by reasoning at that level is not practical.

Our bodies are the same. Muscles are bags of jelly with stretchy strings in them. Bones are irregular shapes attached with elastic tendons, so joints exhibit complex patterns of “give” as they approach their limits.

Using physical simulation, you can make an animated human figure dance on the screen. It might look impressively realistic to the eye. However, these methods do not work well enough for robots to do most ordinary human tasks. Dancing and making breakfast are still well beyond the state of the art.

Physical simulation doesn’t work well because robot bodies, like human ones, are imperfect. Most current designs try to make robots conform to simple physical models, by making them as rigid and strong and precisely machined as possible. Even so, they have some flexibility and limitations and irregularity, which make them difficult to control. Also, they have to be enormously heavy and powerful, which makes them dangerous and inefficient.

In “Robots That Dance,” I suggested abandoning this approach, and instead using machine learning methods to find ways of controlling a lighter, weaker, more flexible robot. Like a child, the system would gradually develop physical skill through experience. We didn’t have enough computer power to do that then, but some researchers have found success with this approach recently.

This seems to be a recurring theme in your work: We want the world to be rigid and absolute, whereas in fact it’s complex and non-uniform.

Yes. My recent work on “meaningness” suggests working with the interplay of ambiguity and pattern to enhance understanding and action. It’s “practical philosophy” for personal effectiveness, drawing on work I did in AI, and the academic fields I mentioned earlier. It has a learning dimension, too. Research on adult development shows that people may progress through pre-rational, rational, and meta-rational ways of understanding. The middle stage is overly rigid. It imagines that the world can be made to conform to systems. That can become heavy-handed, inefficient, and brittle.

If the barrier to perfectly accurate models is fundamental, rather than merely technological, does that demand a completely different approach to AI?

The dominant approach of the 1970s and 1980s did definitively fail, for that reason. “Deep learning,” which has had some spectacular recent successes, is more flexible. It builds models that are statistical and implicit, rather than absolute and logical. However, this requires enormous quantities of data, whereas people often learn from a single example. It will be fascinating to discover the scope and limitations of the deep learning approach.

Uri Bram is the author of Thinking Statistically, an informal primer on the big ideas from statistics. Follow him on Twitter @UriBram.

Lead photograph courtesy of Wikicommons.