Our relationship to technology and the benefits we reap from it depend on how much we make it our own.

This realization has motivated me to contextualize the drumbeat we hear about the perils of technology, particularly social media: increased isolation, difficulty empathizing, and impaired conversational skills. Rather than regarding technology as an external force or temptation that we have to struggle against, I propose thinking about the alliances that we form with technology. This alliance begins when we acquire or access something, perhaps a new device, service, or data, and evolves as the technology challenges us and we challenge it. We bring the technology into social situations it wasn’t designed for. We draw on it to negotiate the limitations that we see in ourselves. In exploring new applications for it, we find new perspectives on ourselves and our social worlds.

The commonality across the three stories presented here is individuals’ adaptation of technology to meet their own objectives. The stories’ significance is personal and interpersonal. For readers who work in technology development, perhaps these examples will inspire designs that reflect how people see themselves over time, or how they construct the significant relational and emotional themes in their lives. Too often tech designs focus on discrete tasks rather than taking into account the intricate, evolving self that each user brings to those tasks.

These stories invite us to reflect on our lives and our devices—that is, to engage them as supportive allies in our quest for connectedness and well-being.

Connected Lights

Elana is not a gadget person. She has minimalist sensibilities and wouldn’t normally spruce up her home with networked appliances, but allowed her boyfriend, David, to install smart lights. David lived 400 miles away from Elana—a separation required by their jobs. The distance was a major source of tension in their relationship. He often got upset about it and refused to talk by phone. She, in turn, worried about him and their relationship.

So when she came home one day to find her apartment lit up in violet and blue, she was reassured. David had access to her connected lights, and this was a message from him. She felt loved despite the tensions.

To enable this remote control of lights, David had linked his phone to Elana’s lights during a previous visit when they’d set up the lights. They each wanted to be able to control the lights in each place while there or away. When David wanted to adjust Elana’s lights from afar, he signed into her account and selected colors for specific lights. He knew approximately when she’d be arriving home and could change the lights from any location. This involved some challenges, particularly disambiguating the accounts after adjusting lights in one another’s homes. Sometimes Elana couldn’t reestablish control over her lights from within the app and had to either text David to turn them off or physically unplug the lights when she wanted to sleep. Nonetheless, the light exchanges became an important part of their long-distance relationship.

How could turning on lights come to mean so much? In part it is because we have a long history with light. We associate it with the warmth of fire. As Lisa Heschong writes in Thermal Delight in Architecture, “Are the colors reds and browns? Then maybe it will be warm like a room lit by the red-gold light of a fire.”1 We associate fire with interpersonal as well as physical warmth. Light has always brought people together.

And we have long used light as a signal. Signaling theory tells us that organisms have evolved so that everything from the colors of a butterfly’s wings to a human’s casual gestures can convey essential information. Take the male goldfinch’s plumage, which transforms to a vibrant yellow in mating season. The bright colors, which reflect carotenoids and immune functioning, are an “honest signal” of health to potential mates.2 From Microsoft Research comes a contemporary version: makeup that changes color to signal changes in air quality. Eyelids that turn from silver to black, or lips that go from pink to white, may warn passersby that this is not the place to take a run, or may provoke someone to confront a smoker. Collectively, these skin signals could function as a protest against pollution and the depletion of the environment.3

Signaling theory is often concerned with deceptive signals: animal markings such as “eye spots” that confuse predators, or, for a human example, the comb-over. But Elana and David were using lights, which have nothing intrinsic to do with love, to convey a truth. And these messages, unlike a text, don’t divert their attention to a phone; they are immersive.

The medium need not be limited to light. Many familiar objects have been explored as channels for intimate communication—clothing that “hugs” over a distance, teacups that glow when picked up at the same time, or a mattress heated in the spot of a faraway significant other.4,5,6,7 Recent work on “ghosting” took this a step further, synchronizing the lights and sounds in two homes.8 Body-to-body connections have also been prototyped; for example, through actuators that move an individual’s arms in gestures according to the mental state of a long-distance romantic partner, as measured by an EEG.9 These examples involve passive sharing of sensed behaviors and biometrics, but it may be more powerful to actively create experiences as a form of gift giving. There will doubtlessly be more immediate and compelling ways to play music for others over a distance or immerse someone else in what you are seeing. I suspect connected devices will also support more tangible forms of expression, allowing us to make a friend a cup of tea or even dinner from far away.

The Mood Phone

Tobias, an anxious family man with a demanding job, had struggled to communicate with his wife for years, and the distance between them seemed to be growing. They had different lives and swapped rather than shared what they had previously created together: their children and home. At precisely timed schedules, they exchanged responsibilities and locations. In our first conversation, Tobias focused on his own feelings of resentment.

I met Tobias because he volunteered to try the Mood Phone, an app that my colleagues and I designed to help with stress.10 I talked with him every week throughout the month-long study. One element of the app, a Mood Map, appeared on the phone every hour. It asked him to indicate how he was feeling at that moment (that is, how energetic and how positive he felt) by dragging a dot to a spot on a two-by-two matrix. Based on his current mood, the app launched other scales and therapeutic exercises, including breathing visualizations and cognitive reappraisal prompts.

Tobias noticed a pattern in the data: His mood dropped every night when he came home from work and typically remained low the whole evening. The transition home was rough; as soon as he walked in the door, his wife rushed out to the gym, the dog jumped on him, and the kids demanded his attention and dinner. The daily pattern contributed to his resentment.

Over time, his reflection shifted from resentment to curiosity. He became interested in how his wife was feeling. Tobias wanted a way to ease into more empathic conversations, but worried that directly asking her “How are you feeling?” would come across as a confrontation. He imagined her firing back, “Why are you asking?”

If his wife also had the mood tracking app, and if their phones could exchange information about their moods, he thought, maybe they could use their shared data to open a conversation more naturally. Their phones weren’t able to sync in this way, but his curiosity alone helped him open some of these conversations.

To have a lot of value, our devices really need our input. Devices just display a best guess based on data that is often sparse, sensitive, and non-specific. The endgame is not having a device that is smart enough to tell us how we are feeling. Our emotions are different from things like temperature or blood glucose level, which can be measured independently of how we experience and describe them. As explained by Lisa Feldman Barrett, a professor of psychology at Northeastern University, emotions take form as we interpret events and our physiological states.11 The richer the repertoire of emotional concepts we have to draw on, the more precisely we can name our feelings. This articulation shapes our experience of the world: The more precisely we can label a challenge, the more easily we can respond. Feeling “bad” differs from articulating “righteous indignation,” Feldman Barrett points out; the latter is more likely to propel one into action.12 “Emotional granularity” creates more options for understanding and reacting to challenges. This ability to finely articulate emotions will likely also help us understand and relate to others.

An app isn’t a conversation substitute. But it can help open the conversations that we want to have. Tobias tracked his moods, noticed a pattern, and wondered about his wife’s moods. This prompted him to initiate different kinds of conversations with her and express interest in how she was feeling. Their dialogue improved. He wasn’t alone in finding this kind of benefit. The participants who described the most benefit from this mobile intervention were those who brought it into their conversations—with family, spouses, and in some cases colleagues. With emotional tracking data, some may find value in sharing with a spouse or another close tie, while others may benefit from relating to peers, perhaps those with a common concern or demographic. When there is an opportunity for this kind of sharing, connection will come from trying to understand the nuances in the other person’s experiences.

The Social Solar System

In tracking another person, we sometimes learn about ourselves. Reflecting on data about her mother’s social isolation, Natasha saw a need to change her own life.

Sandwiched between the needs of her 85-year-old mother, her children, and their children, Natasha felt depleted. She was mostly worried about her mother, Mallory, who she knew must be lonely. Mallory enjoyed spending time with others and seemed to want more contact, especially with Natasha and her family, but she was never the one to make it happen. Her husband had been the social coordinator in their relationship, and her friends were people they had socialized with as a couple. Mallory moved across the country to live near Natasha after her husband died, becoming more cut off from her few friends and extremely reliant on Natasha. Unless Natasha arranged it, her mother saw no one. This passivity was not just a burden for Natasha; it also concerned her. Although she had some worries about her mom’s physical health, more than anything she wanted her mom to be actively engaged with other people. Natasha’s concerns are consistent with growing evidence that loneliness is a serious matter, reducing life satisfaction and increasing the risk of cardiovascular disease, dementia, and other illnesses.13 And it’s not just being around people that would help someone like Mallory. To feel connected, she would need to reach out to others and participate.

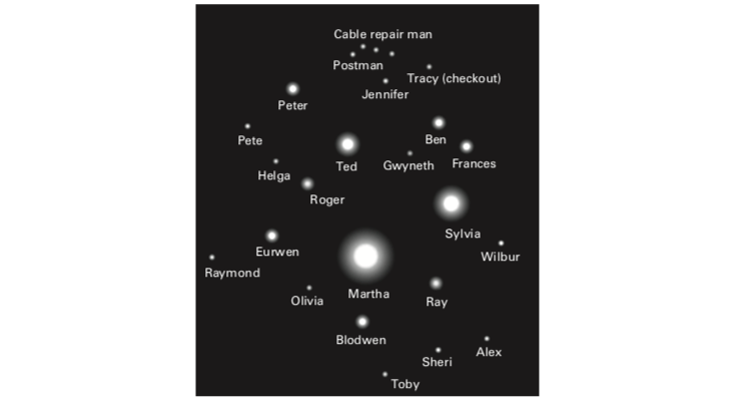

A project that my colleagues and I had been working on fit the bill. We had designed a system that tracked as much of an individual’s social activity as it could, including face-to-face interactions, phone calls, and emails. It then presented that data as a solar system, with people shown as planets circling the sun of the central figure.14 The distance of each planet from the sun represented how much contact each family member had been having with the older adult represented as the central figure. The hardest part of this technically was gathering the data. But the visual representation of that data as a solar system turned out to be what mattered, in some unexpected ways.

The solar system metaphor allowed Natasha to monitor and make sense of her mother’s social situation, moment by moment, over the months that they used this system. Perhaps more valuable was that it gave her a vocabulary and external reference point to talk with her mother about loneliness. It showed her mother as a circle at some distance from others, except Natasha. Natasha discussed this objective data, sometimes using the celestial metaphor, as a way of sharing her concerns. And she drew on the data in our interviews to sympathize with her mom’s isolation and even her reluctance to invest in new friendships. It must be, she said, “like being on an island, when everyone else you’ve known and loved has died.” She struggled to imagine how hard this was.

Unexpectedly, Natasha also used this solar display as a sort of mirror rather than just a way to check in on her mother. She saw that week after week, she remained at the center of her mother’s social life. Hers was the only circle close to her mom’s. Her central position in the display of her mother’s world reflected her dutiful caregiving and hinted at all that she had been neglecting. Up until then, it was as if she had been so close to the center that she was blinded. By stepping back and seeing her situation objectively, she could start to examine the rest of her life. She resolved to draw in other family members as caregivers for her mom so that she could allocate more of her time to her other relationships and interests.

Data by itself does not tell the story. Natasha had to see that data expressed in terms that made visually clear the extent to which she was at the center of her mother’s universe. The metaphorical display let Natasha back up just enough to see that she needed to invite other “planets”—her siblings and children—to bring their orbits closer to her mother and step in as caregivers. This shift in conversation and caregiving dynamics even sparked a change in her mother’s passivity: Mallory started initiating plans with her family and even volunteering.

For data to make a difference in our lives, we have to make it our own. But for many of us, this involves seeing the data and ourselves as more than numbers. The image of planets alone in space, circling an isolated object, worked for Natasha and Mallory where mere data would not have.

Margaret E. Morris is a clinical psychologist, researcher, and creator of technologies to support well-being. A senior research scientist at Intel from 2002 to 2016, she has conducted user experience research at Amazon and is an affiliate faculty member in the Department of Human-Centered Design and Engineering at the University of Washington.

Excerpted from Left to Our Own Devices: Outsmarting Smart Technology to Reclaim Our Relationships, Health, and Focus by Margaret E. Morris; © 2018 MIT Press.

References

1. Heschong, L. Thermal Delight in Architecture MIT Press, Cambridge, MA (1979).

2. Sibley, D. The Annual Plumage Cycle of a Male American Goldfinch. Sibley Guides (2012).

3. Hsin-Liu Kao, C., Roseway, A., Nguyen, B., & Dickey, M. Earthtones: Chemical sensing powders to detect and display environmental hazards through color variation. Proceedings of the 2017 CHI Conference Extended Abstracts on Human Factors in Computing Systems New York: ACM, 872–883 (2017).

4. The HugShirt. CUTECIRCUIT https://cutecircuit.com (2014).

5. Chung, H., Lee, C.J. & Selker, T. Lover’s cups: Drinking interfaces as new communication channels. CHI ’06 Extended Abstracts on Human Factors in Computing Systems New York: ACM, 375–380 (2006).

6. Goodman, E. & Misilim, M. The sensing bed. Proceedings of UbiComp 2003 Springer, London (2003).

7. Bell, G., Brooke, T., Churchill, E., & Paulos, E. Intimate ubiquitous computing. Proceedings of Ubicomp 2003 New York: ACM, 3–6 (2003).

8. Clark, M. & Dutta, P. The haunted house: Networking smart homes to enable casual long-distance social interactions. Proceedings of the 2015 International Workshop on Internet of Things toward Applications New York: ACM (2015).

9. Hassib, M., Pfeiffer, M., Schneegass, S., Rohs, M., & Alt, F. Emotion actuator: Embodied emotional feedback through electroencephalography and electrical muscle stimulation. Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems New York: ACM, 6133–6146 (2017).

10. Morris, M.E., et al. Mobile therapy: Case study evaluations of a cell phone application for emotional self-awareness. Journal of Medical Internet Research 12, e10 (2010).

11. Feldman Barrett, L. How Emotions Are Made: The Secret Life of the Brain Houghton Mifflin Harcourt, Boston (2017).

12. Feldman Barrett, L. Are You in Despair? That’s Good. The New York Times (2016).

13. Hawkley, L.C. & Cacioppo, J.T. Loneliness matters: A theoretical and empirical review of consequences and mechanisms. Annals of Behavioral Medicine: A Publication of the Society of Behavioral Medicine 40, 218–227 (2010).

14. Morris, M.E. Social networks as health feedback displays. IEEE Internet Computing 9, 29–37 (2005).