About a year ago, I was a member of a Facebook group about a relatively obscure part of computer science called “procedural generation.” People would mostly write their own programs that created interesting visuals. Then, in early 2022, a program called Disco Diffusion was released, and all hell broke loose. It allowed anybody to create breathtaking images just by typing in a description, called a “prompt.” Soon the internet was awash with artificial intelligence-generated art, with a new AI image-generation program seeming to be released every few weeks. From DALL-E 2 to Midjourney, easy-interface programs were quickly accessible to anyone with an internet connection.

People were so excited about these programs because the output was gorgeous and anybody could use them. As a cognitive scientist long interested in creating visualization models with AI that unpack how the mind processes information, I’ve been playing with these programs, and I can attest to the democratizing effect. I’ve formed hundreds of images, almost entirely for the pleasure of simply looking at them. Once, I made a series of paintings based on Pac-Man that I was pretty proud of. I even sold most of them. Then I asked Midjourney to create an image of a “demonic Pac-Man.” In less than a minute, it produced four images better than probably anything I will ever create myself.

The easy-to-use image programs, and the attractive results, have upended the art world. Professional artists are worried about losing work to anybody sitting at a computer who can manage a few prompts. Already, artists working for gaming companies are reporting that smaller shops have stopped hiring as many people for their art needs. Illustrators are watching in anger as publications bypass them for AI-generated images. I sympathize with artists. It must be depressing and frightening, after spending decades building skills, to face a machine that can do aspects of your job at a fraction of the cost. At the same time, the democratizing forces of these technologies can make a richer art environment for all of us.

This line of reasoning is based on the economic concept of creative destruction. This idea holds that economic models and ways of doing business have to be destroyed to allow for better, more efficient, and productive models. Photography is a striking example. As camera technology improved, thousands of jobs were lost in areas of film development, film and camera manufacturing, and even professional photography. Now that cell phone cameras are so good, people are buying fewer devices that only take pictures. The images aren’t just cheaper; we also have a lot more of them. Childhood now is so much better documented than it was for our grandparents. The photos and videos are windows into the worlds of our past; children of today will have hours of video. I certainly wish I had more photos of me and my family in the 1970s.

AI generators can enrich the art world in other ways. For example, most published novels have no illustrations, except perhaps one on the cover, even though illustrations often become adored parts of books. Exactly why isn’t clear. Some say that novels lost their illustrations to differentiate themselves from children’s literature and pulp entertainment. Another possibility is economic: Illustrations are an extra cost. One estimate is that fully illustrating a book can cost up to $10,000. Many books lose money, and the added value of illustrations might not boost sales enough to justify the cost. Art generated by inexpensive image tools could allow more books to be lavishly illustrated in the future. A single novel might have several editions, each with a different art style to its illustrations. Maybe the art will inspire people to read and buy more books. Art generators can allow people to have more original art on their walls, rather than printed reproductions of other works. Looking ahead to further technological improvements, maybe we’ll have more movies. Perhaps they’ll be custom-made for whoever’s watching them.

It’s true that AI creates a picture in a substantially different way from the way humans do. Diffusion models—such as Disco Diffusion, Stable Diffusion, and Midjourney—start with random noise and, over time, change the noise to look more and more like what it “thinks” an image should look like, given the prompt. Humans, on the other hand, usually start with a high-level idea of what the elements of the picture will be, what perspective it will take, and so on, and then execute the details. But does this difference matter to the audience?
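For readers who like to see the idea in code, here is a toy Python sketch of the start-from-noise, refine-step-by-step loop described above. It is only an illustration under strong assumptions: the names `toy_denoise`, `distance`, `rate`, and the fixed `target` list are invented for this example, and a real diffusion model uses a trained neural network to guess what to change at each step rather than being handed the finished picture in advance.

```python
import random


def toy_denoise(target, steps=50, rate=0.2, seed=0):
    """Start from random noise and nudge it toward `target` a little each step.

    `target` stands in for what the model "thinks" the prompt should look like.
    This is a hypothetical simplification, not how any real tool is implemented.
    """
    rng = random.Random(seed)
    # Begin with pure noise: one random brightness value per "pixel".
    image = [rng.uniform(0.0, 1.0) for _ in target]
    history = [list(image)]
    for _ in range(steps):
        # Move each pixel a fraction of the way toward the target value.
        image = [px + rate * (t - px) for px, t in zip(image, target)]
        history.append(list(image))
    return history


def distance(a, b):
    """Average absolute difference between two equally sized images."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


# A five-pixel gradient plays the role of the finished picture.
target = [0.0, 0.25, 0.5, 0.75, 1.0]
history = toy_denoise(target)

# The noise drifts closer to the target as the steps go by.
print(distance(history[0], target), distance(history[-1], target))
```

The point of the sketch is the shape of the process, not its details: nothing in the loop plans a composition or a perspective, and the picture simply emerges as the noise is reduced.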

When we look at how cognitive scientists understand how images affect viewers, we can appreciate that images generated by artificial intelligence don’t fundamentally change our relationship with art. Audiences can indeed have an artistic experience in the presence of things that aren’t art, such as beautiful sunsets and true stories. There seems to be little doubt that when you find a sunset beautiful you are using much the same psychological machinery as you do when you find a painting of a sunset beautiful. Viewed through this lens, we can also have artistic experiences when we look at AI-generated works, whether these works are considered art or not.

The debates about whether these outputs count as art are endless because what makes something qualify as a work of art is multifaceted, and people arguing one way or the other tend to focus on different facets of what art is. Similar arguments about art status arose with photography and movies, and continue today with video games. It’s noteworthy that automated art generators, which have been around for decades, only came to the fore of the debate when their output became indistinguishable from that of human artists. Recently, an artist spent days making a painting, only to be accused of having used AI to create it. It’s worth noting that some artists are also using art generators to create and enhance their visions.

But some argue that AI-generated work is missing the communication between the artist and the audience. The part of the artistic experience that involves the audience interpreting the mind of the artist is missing (or, at the very least, is very different) when viewing AI works. Sometimes, when we look at art, we like to try to interpret the message the artist is sending us, or we reflect on their view of the world, what the art says about what the artist has experienced. The way AI draws inspiration from extant art is different from the way humans do. Humans are influenced by other artists while bringing their own creativity to their work, which to some degree reflects their life experiences. For current AI art generators, their only experience, if you want to call it “experience” at all, consists of only images made by humans. They have not lived their own lives, out in the world. AI art tools cannot bring their real-life experience to their art because they don’t have any.

How important is this aspect of art to the audience? It depends on the audience. Filmmaker Guillermo del Toro thinks it’s so important that he stated in a Decider interview, “I consume and love art made by humans. I am completely moved by that. And I am not interested in illustrations made by machines and the extrapolation of information.” But for some people this isn’t important at all. When I go to an art gallery, I don’t want to know anything about the artist or how they made their work. Often, I feel that knowing about artists, their lives and cultures, how they created the art and why, and so on, distracts me from the art itself. I want to appreciate the art for what it is.

You can look at a painting and try to infer what the artist was trying to say, even if your conclusions are way off base. That’s part of what the audience brings to a work of art. One of the earliest AI art programs, AARON, was created by professional painter Harold Cohen, who explicitly programmed his artistic sensibilities into the code, such as notions of the anatomy of people and the structure of plants, rules of composition, and color theory. AARON created paintings of people, among other things, that were beautiful, and, crucially, appeared sometimes to have real feeling behind them. Of course AARON had no programming for human psychology. Nevertheless, when you look at its paintings, it’s very easy to read psychological relationships between the people depicted.

Whether the audience’s inferences are correct is of debatable importance, and this is true even when consuming the work of human artists. Art critics generate multiple, contradictory interpretations of the artist’s intent. The documentary Room 237 describes several fascinating, completely incompatible interpretations of Stanley Kubrick’s film The Shining. They can’t all be right, but these interpretations are an important part of appreciation, regardless of their accuracy. AI does not need to understand human relationships to create works that we can appreciate in terms of human relationships: The viewer does that work.

In a time when we can’t tell whether images (or text, or music) were made by a human or not, we will still read human psychology and motivation into them. We can’t help ourselves. In the long history of art, it’s what we’ve always done. Art can speak to us and generate meanings that the artist is oblivious to, whether that artist is a human, an AI, or an elephant with a paintbrush. With image-creation tools, anybody can turn a short description into original images that are meaningful to them, at a price they can afford. I can say from experience that doing so is a surprising and fascinating activity.

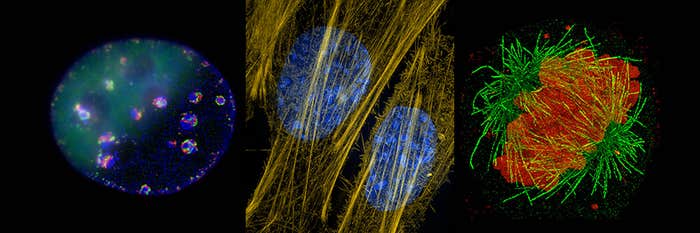

I frequently look at the images I’ve generated and saved, and really enjoy them. For many it’s the same enjoyment we get from looking at images from human artists. AI image programs allow us to get out of our daily minds and surprise us with our hidden capabilities to create new worlds. The creation of the kind of image you want usually involves multiple iterations, changing the prompt in response to what the tool gives you. It makes the everyday user an active participant in the image-generating process, becoming not an artist, perhaps, but an art director of their own, personal collection.

Jim Davies is a professor at the Department of Cognitive Science at Carleton University. He is the author of Riveted, which further explores issues raised in this essay. He is co-host of the award-winning podcast Minding the Brain. His latest book is Being the Person Your Dog Thinks You Are: The Science of a Better You.

Lead image: “Hairstrangle-Grunt-Wilder” by Midjourney, with prompt by Jim Davies