One question for Animesh Garg, a computer scientist at the University of Toronto, director of the People, AI, and Robotics group, and a senior research scientist at Nvidia whose research vision is to enable robots to seamlessly interact and collaborate with humans in novel environments.

Is Tesla’s new humanoid robot “Optimus” an AI gamechanger?

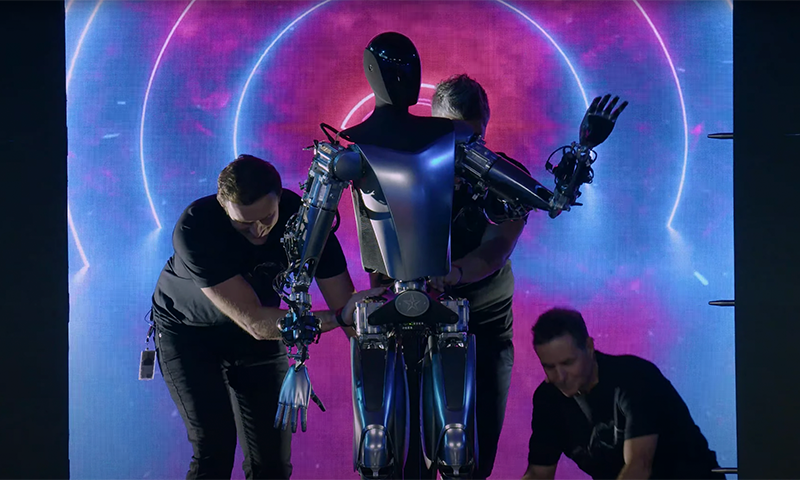

Overall, the current design of the robot, which Tesla unveiled on September 29, is a very good first step. Interest in building such systems is welcome, because the involvement of Tesla and Elon Musk brings attention, talent, and resources to the problem, setting in motion a flywheel of progress.

The goal of building humanoids is fascinating and divisive. A lot of academics, investors, and industry leaders would balk at the idea, arguing that simpler, non-anthropomorphic solutions, along with changes to the environment, are more practical. But this is akin to redoing the infrastructure to make autonomous cars work, instead of adapting autonomous cars to our current infrastructure.

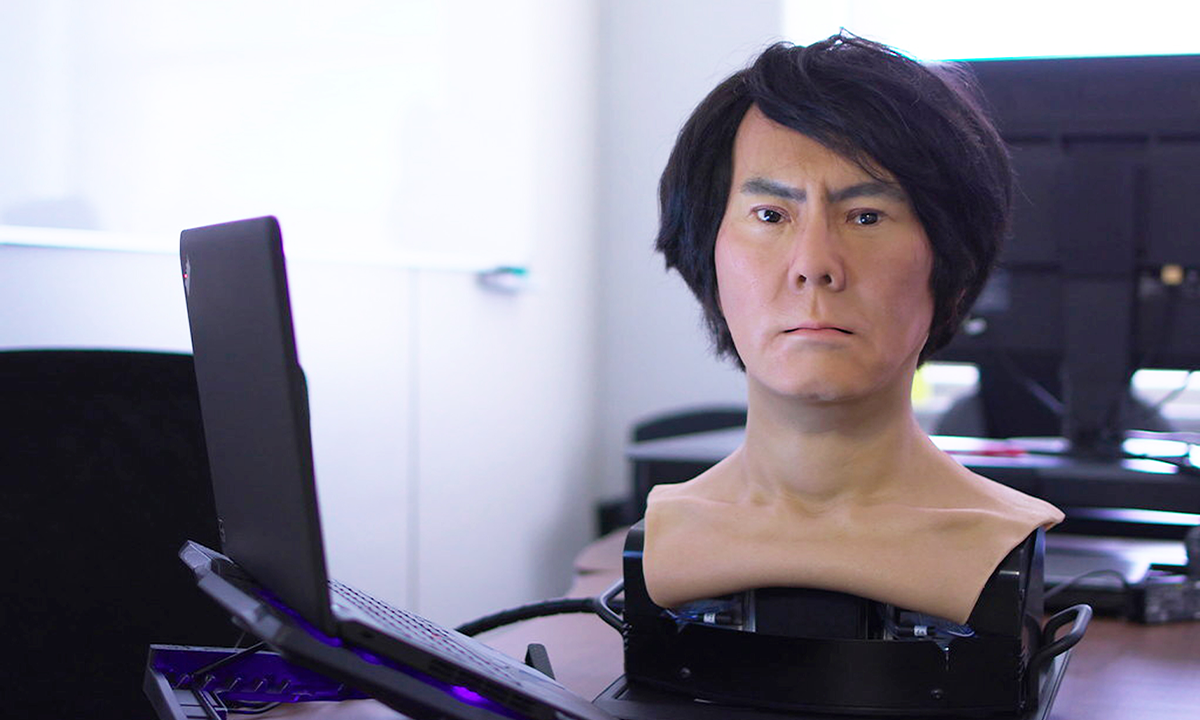

The problem of smart robot design lies as much in building more capable robot hardware (sensors, actuators, and power systems) as in building smarter brains. Tesla’s Optimus effort is, in a sense, no different from Asimo, Atlas, T-HR3, or Digit. All of them are backed by expert roboticists. Some come from car companies like Honda and Toyota, which have applied their manufacturing background and years of design experience to building humanoid robots. What’s different, however, is that the Tesla robotics team has done a commendable job of iterating quickly on a design built from the ground up: from a good experimental study of actuator requirements, to designing new actuators, to creating the integrated system. The result should allow meaningful power while conserving energy, unlike the hydraulic actuators used by Atlas, from Boston Dynamics.

The hand also seems exciting: a metallic cable-driven system with four fingers and a thumb. In the brief demo, Tesla showed that it had a reasonably high load capacity (holding watering cans, lifting bars of aluminum in the factory). However, because of the cable-driven design, the system will have a slower response time, and learning-based control will be harder. There’s also no backdrivability in autonomous mode, which will make general-purpose autonomous hand manipulation slightly challenging.

Tesla would benefit a lot from collaborating with the community. Designing the whole stack of hardware, simulation, and data infrastructure requires Tesla to reinvent the wheel on many fronts. The company could release limited encrypted designs, which would enable a community to integrate Tesla Bot as an experimental platform and build around it. Yet the challenges of learning-based control and behavior chart a long road, with a long tail of problems. Autonomous driving offered a mirage of being “just around the corner,” and we are still chasing the dream over a decade later.

Overall, we should learn a lesson in humility about the challenges of building deployable autonomy in the real world, especially since making the Tesla Bot work could be considered a harder problem: along with perception and agent modeling, it also needs carefully controlled interactions with the environment. Autonomous vehicle stacks use rendering and procedural scene creation with no physics at all, since there is no contact-based interaction, while robotics is built for controlled contact in addition to many of the challenges of autonomous vehicles.

The flip side, though, is that robots, in the short term, have use cases in places where they do not necessarily need to interact with humans, and the implications of failure are less severe. The community needs to find a revenue-positive pathway to support this development. And this could come from behind-the-scenes use cases for robot manipulation in warehouses, retail stores, food prep, and manufacturing.

This effort should be lauded with cautious optimism by the community, for the compass points in the right direction, and Elon brings with him the heft of Tesla engineers as we trek through the AI/Robotics jungle.

Adapted with permission from Animesh Garg’s original remarks here.

Lead image: Tesla / YouTube