Vladimir Voevodsky had no sooner sat himself down at the sparkling table, set for a dinner party at the illustrious Institute for Advanced Study in Princeton, New Jersey, than he overturned his empty wine glass, flipping bowl over stem and standing the glass on its rim—a signal to waiters that he would not be imbibing. He is not always so abstemious, but Voevodsky, that fall of 2013, was in the midst of some serious work.

Founded in 1930, the Institute has been called “the earthly temple of mathematical and theoretical physics,” and is a hub for all manner of rigorous intellectual inquiry. Einstein’s old house is around the corner. In the parking lot a car sports a nerdy bumper sticker reading, “Don’t Believe Everything You Think”—which might very well be aimed directly at Voevodsky. Because during the course of some professional soul-searching over the last decade or so, he’d come to the realization that a mathematician’s work is 5 percent creative insight and 95 percent self-verification. And this was only reinforced by a recent discovery around the time of the dinner party: He’d made a big mistake.

Born in Moscow in 1966, Voevodsky developed an interest in mathematics largely on his own—initially because he wanted to understand physics, later because he unexpectedly fell in love with abstract algebra. Disliking the formalities of academia and neglecting to attend classes, he was “rusticated,” as he puts it, from Moscow State University. After the fall of Communism in 1989, it didn’t matter so much that he never technically completed his undergraduate mathematics degree. He established his credentials working and publishing with his betters, namely the mathematicians Yuri Shabat and Misha Kapranov. He and Kapranov shared a passion for developing the mathematics of new higher dimensional objects and categories, and they published an important result in 1990.

On the weight of Kapranov’s recommendation, Voevodsky found himself accepted for graduate studies at Harvard, without even having applied. His 1992 doctoral thesis marked the beginning of a line of ideas in algebraic geometry that 10 years later earned him the Fields Medal, the so-called Nobel of mathematics. And, the year before, in 2001, by the young age of 36, he’d become a full professor at the Institute, the most esteemed of intellectual sanctuaries (after being a long-term member since 1998).

But along the way he met a bump in the road. In 1998, the American mathematician Carlos Simpson published a paper indicating there might be a mistake in Voevodsky and Kapranov’s 1990 result. For years Voevodsky sifted through the details without making much progress. He remained convinced the result was right. Then, in the autumn of 2013, as the leaves changed color and summer gave way to autumn, he made a breakthrough. Of sorts. He confirmed the error. The important result was no longer quite so important.

“It is plainly wrong. The main theorem is incorrect,” he says. “It’s not that there is some gap in the proof. It’s that the main theorem is plainly wrong.” The mistake, he explains, was in failing to question the obvious. “We had proved that an assertion was indeed true in all of the difficult cases, but it turned out to be false in the simple case. We never bothered to check.” In confirming the error, he added an addendum to the original citation in his official publications list—“Warning: The main theorem of this paper was shown by Carlos Simpson to be false.”

But even in clearing up the matter and making amends, the broader implications left him unsettled. The error was confirmed a quarter century after it was committed. And this was the second time an error had been found in his published work. There’d been another error in a paper that was widely read and discussed and repeatedly applied in an ever-evolving field. Luckily, it was a smaller error, easily routed. Voevodsky corrected it by constructing a different route to all the same interesting and important theorems.1

For most people, the computer is only a tool, like a vacuum cleaner. For me, it’s like a colleague.

Not a bad margin, two errors in about 60 papers. Nonetheless, he found it troubling. It clearly was not an accident, as Voevodsky himself is quick to note, in a talk about the predicament he’s given at least a dozen times in the intervening years—he is determined to communicate his concern. It was a complicated paper. And readers of such papers essentially get lazy. “A technical argument by a trusted author, which is hard to check and looks similar to arguments known to be correct, is hardly ever checked in detail.”

What were the implications for mathematics on the grand scale?

“I got very worried,” he says. Worried, and scared. Since around the same time that he confirmed the error, he’d also been working on something new and exciting—something he called “2-theories,” similar to the higher-dimensional mathematics he and Kapranov had been exploring. It was technically complex and innovative. But his passion for pursuing it evaporated because there was no way of knowing that he might not make a mistake. And, as he’d just learned, no one was likely to be checking up with any diligence. These worries killed his creativity. “I stopped doing curiosity driven research. Because the areas where my curiosity led me, areas of value and interest and beauty, I didn’t have the tools to explore.”

Not with any certainty, anyway. According to Voevodsky’s own insight-to-verification ratio, for every hour he spent on an idea he’d need to spend 19 hours ensuring it was correct. Or more. “The more original the insight,” he says, “the more one has to pay for it later in self-verification.” Too steep a price.

Voevodsky decided that the only way he could proceed would be to employ a “proof assistant”—a computer program that would prevent him from wandering too far down errant paths. He had already been investigating the prospects of a computer-assisted formalization of mathematics, since 2003. The error, he says, “was just like a little present for me, to make my argument stronger.”

The experience of working with a proof assistant, as he describes it, is a bit like playing a video game. He’s jousting with the computer. He instructs it to try this, try that—he can even instruct it to try something known to be wrong, just to see what happens. “It’s very interactive,” he says. “It can be fun and exciting, and kind of addicting.” When he makes a mistake, all the assertions he’s typed in, lines and lines and lines, vanish before his very eyes (though he saves the work in a file called “leftovers”).

This way, once Voevodsky has proved something, he knows for certain it’s true. He doesn’t wonder if there are any mistakes lurking within arguments, and he doesn’t worry about how to convince his colleagues. The proof assistant provides a stamp of authenticity.

Voevodsky is considered a pioneer in this arena, but the notion of proof assistants has been around for a while. The first, “Automath,” dates to 1968. Over time a vast ecosystem of proof assistants evolved, with a myriad of setups whereby the computer is used as a tool that mimics how traditional mathematics is done. The computer is essentially taught how humans do mathematics, programmed with axioms and asked to deduce proofs using the laws of logic.

Another approach is to deploy computers in pursuit of discovering altogether new mathematics, new approaches and procedures. For instance, Rutgers mathematician Doron Zeilberger has cited his co-author Shalosh B. Ekhad, the name he gave to his various computers along the way, with numerous papers he’s published since the 1980s. “For most people,” Zeilberger says, “the computer is only a tool, like a vacuum cleaner. For me, it’s like a colleague.” He hopes the effect will be nothing short of revolutionary. “Traditional mathematics is based on the notion of rigorous formal proof, and I think this is going to be obsolete,” he says. “I think since computers are so powerful, they open up new vistas, and the old agenda of proving everything so rigorously is not as exciting as it was before.”

At the actual moment of discovery, you actually stop thinking. Like an artist, you connect to something that lies far beyond logic, thinking, and computation.

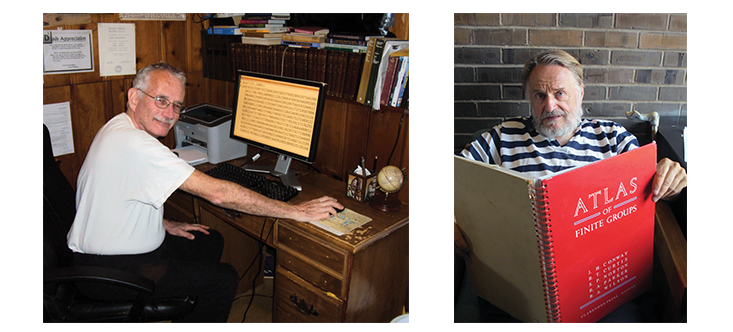

And then there are the purists, the old guard. “I’m not interested in a proof by computer,” says Princeton mathematician John Horton Conway. “I prefer to think.” He notes the irony that his official title is the John von Neumann Professor in Applied and Computational Mathematics, sponsored by IBM—“I sit and compute all day, but I don’t use a computer to do it.”

Conway is best known for discovering the Conway groups in mathematical symmetry, the aptly named surreal numbers, and the cellular automata Game of Life. He has a penchant for recreational mathematics and he was keen on the four color map theorem, but when in 1976 it became the first theorem proved by computer—at the behest of mathematicians Kenneth Appel and Wolfgang Haken and hailed in The New York Times as “a major intellectual feat”—he wasn’t as interested in the result as he might have been otherwise. He was fond, too, of tinkering with the Kepler conjecture about the maximum density for packing spheres in three-dimensional space, but when Thomas Hales solved it in 1998 using linear programming, Conway was left dissatisfied. For Conway, computers kill the buzz. Though he isn’t one to judge. He doesn’t mind if others use computers. “It just isn’t me.”

Historically, the purists have not always regarded the issue with such equanimity. The computer proof of the four-color conjecture caused controversy and most mathematicians refused to accept it: Computers + math = verboten. And as the Berkeley professor Edward Frenkel told The New York Times upon Appel’s death in 2013, “Like a landmark Supreme Court case, the proof ’s legacy is still felt and hotly debated.”

Broadly speaking, the argument against the use of computers is a lament for the loss of the human element: intuition and understanding. Acknowledging something as true because the computer says so is not the same as knowing why it is true. One might reckon it’s analogous to relying on an Internet mash-up of reviews about the mysteries of Venice, rather than going there and splurging on the water taxi and experiencing the magic for oneself. But then again, the same conundrum arises in building upon previous results rather than working from scratch.

“Computers are good—and generally much better than humans—at what they are designed to do,” says Frenkel, author of Love and Math. “And that is computation … But we must realize that mathematical research is not about numbers or calculations. It is about finding connections between things that are seemingly disconnected, it’s about seeing the invisible. That can not be achieved by computation alone. As a professional mathematician, I can tell you that mathematical discoveries come to us as insights. At the actual moment of discovery, you actually stop thinking. Like an artist, you connect to something that lies far beyond logic, thinking, and computation. And that’s the beauty of math.”

Voevodsky himself is careful to distinguish the various ways computers should or shouldn’t be put to use. “Lots of people don’t understand the difference between using computers for calculation, for computer-generated proofs, and for computer-assisted proofs,” he says. “The computer-generated proofs are the proofs which teach us very little. And there is a correct perception that if we go toward computer-generated proofs then we lose all the good that there is in mathematics—mathematics as a spiritual discipline, mathematics as something which helps to form a pure mind.”

The broader culture and practice of how errors occur is an even larger roadblock threatening the foundation and future of mathematics.

But very gradually, he says, the use of computers in mathematics is coming to be accepted as inevitable. And with respect to calculation and computation, especially among up-and-coming generations, it is already quite pervasive, if under-acknowledged. Frenkel agrees, saying that the use of computers to verify proofs is both an expected and welcome trend. As the towering mathematical enterprise reaches new heights, with more intricately and esoterically turreted peaks, each practitioner drilling down into minutiae that could never be understood by colleagues even in the same field, computers will be necessary for advancing the enterprise, and authenticating the integrity of the structure—a structure so complex in detail and so massive in scale that adjudication by mere human referee will no longer be feasible.

Voevodsky goes even further, arguing that the role of proof assistants—and the use of computers in general—extends beyond the isolated practical instances of avoiding errors. Because the broader culture and practice of how errors occur is an even larger roadblock threatening the foundation and future of mathematics. Error can be symptomatic of a certain disregard for what is really involved in proving something properly. Error, he says, can motivate “the direct dishonesty of claiming to have proved something without actually having done it. That’s a big force in mathematics today.”

“Nobody intentionally does something less well than one could,” he reckons. But if a mathematician must choose between publishing sooner without working through all the nuances of a proof, or publishing later and allowing a competitor to get ahead, the temptation is to glide over the details and sacrifice the quality of the proof and publish now. “And then if you read somebody else’s paper and there are even fewer details, you think, ‘Well if that’s what’s normal today then I’ll just be normal and next time put even fewer details.’ [Then] your student looks at your proofs and learns that this is how things are done, and then the student goes around and thinks completely honestly that he is doing the right thing.” It’s a gradual slippage—a “dissolution of standards.” Proof assistants nudge mathematicians in the other direction.

Besides, errors are rarely, if ever, enlightening. Even Conway, who likes to show off the odd error here and there in allowing others to witness his imperfections, protests that he has never encountered an error that was in any shape or form advantageous. And Voevodsky dismisses the notion that taking an errant mathematical turn might be like getting lost in New York City and stumbling upon a magnificent hidden garden. “Well, first of all you’d have to be in Manhattan, or you might find something not so desirable.”

So these days, Voevodsky goes back and forth between his proof assistant, rigorously laying down definitions and theorems line by line by line, and his more traditional efforts with pencil and paper, drawing diagrams and sketching out ideas. “Working with a proof assistant reminded me what a real proof is,” he says. “It grounded me back into being able to provide more detailed proofs when I write mathematics by hand.” Whenever he is tempted to take a shortcut—to say something like, The proof of this is similar to the proof of that or This is straightforward … or This is obvious …—he is forced to ask himself, Would I be able to get away with such shaky reasoning in the company of my trusty proof assistant?

“Obviously not.”

Siobhan Roberts is a Toronto-based writer. Her new book, Genius At Play: The Curious Mind of John Horton Conway, will be published this July by Bloomsbury.