John Ruskin called it the pathetic fallacy: to see rainstorms as passionate, drizzles as sad, and melting streams as innocent. After all, the intuition went, nature has no human passions.

Imagine Ruskin’s surprise, then, were he to learn that the mathematics of perception, knowledge, and experience lie at the heart of modern theories of the natural world. Quite contrary to his stern intuition, quantitative relationships appear to tie hard, material laws to soft qualities of mind and belief.

The story of that discovery begins with the physicist Ludwig Boltzmann in the late 19th century, some years after Ruskin coined his phrase. It was then that science first strove, not for knowledge, but for its opposite: for a theory of how we might ignore the messy details of, say, a steam engine or chemical reaction, but still predict and explain how it worked.

Boltzmann provided a unifying framework for how to do this nearly singlehandedly before his death by suicide in 1906. What he saw, if dimly, is that thermodynamics is a story not about the physical world, but about what happens when our knowledge of it fails. Quite literally: A student of thermodynamics today can translate the physical setup of a steam engine or chemical reaction into a statement about inference in the face of ignorance. Once she solves that (often simpler) problem, she can translate back into statements about thermometers and pressure gauges.

The ignorance that Boltzmann relied upon was maximal: Whatever could happen, must happen, and no hidden order could remain. Even in the simple world of pistons and gases, however, that assumption can fail. Push a piston extremely slowly, and Boltzmann’s method works well. But slam it inward, and the rules change. Vortices and whirlpools appear, streams and counter-streams, the piston stutters and may even stall. Jam the piston and much of your effort will be for nothing. Your work will be dissipated in the useless creation and destruction of superfluous patterns.

How that law works itself out, how waste occurs in the real world beyond the ideal, was unavailable to Boltzmann. The thermodynamics of the 19th century needed to wait for equilibrium to return, for all of these evanescent and improbable structures to fade away. Our ignorance in the equilibrium case is absolute: We know that there is nothing more to know. In a world out of equilibrium, we know there is something more to know, but we do not know it.

Non-equilibrium thermodynamics was unexplored territory for many years. Only in 1951, 45 years after Boltzmann’s death, were we able to describe how small adjustments that kick a system ever so slightly out of equilibrium vanish in time, through something called the fluctuation-dissipation theorem. Compared to quantum mechanics or relativity, neither of which was a subject until the time of Boltzmann’s death, but which threw up surprise after surprise for decades, thermodynamics appeared to operate on glacial scales.

By the opening of the 21st century, however, a science based on a theory of inference and prediction had entered a renaissance, driven in part by rapid progress in machine learning and artificial intelligence. My crash course in the new thermodynamics came from a lecture by Susanne Still of the University of Hawaii in 2011. It was there that Still announced, with her collaborators, a new relationship between the dissipation in a system (the amount of work we do on it that is wasted and lost) and what we know about that system.1

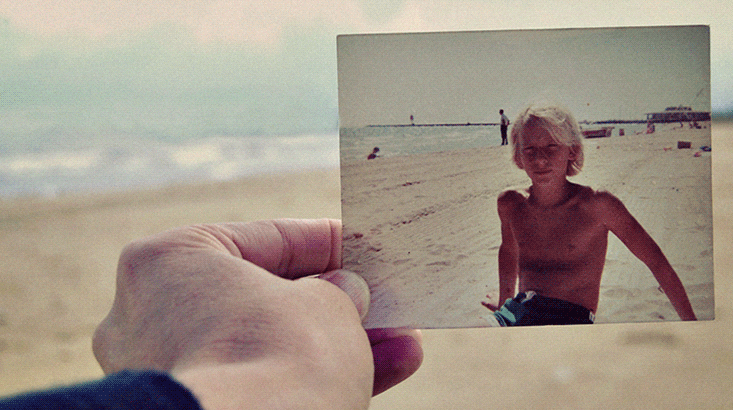

Still and her collaborators showed how dissipation is bounded from below by the unnecessary information a system retains about the world: information irrelevant to the system’s future. With an admirable poetry of mind, they referred to this as nostalgia, the memories of the past that are useless for the future. What they showed is that the nostalgia of a system sets a lower bound on the amount of work you will lose in acting on it.
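
To make the quantity concrete, here is a minimal sketch rather than anything taken from reference 1 itself. It assumes the form of the bound usually quoted from that paper: nostalgia is the information a system's state holds about the present signal minus the information it holds about the next signal, and the average wasted work is at least k_B T times that difference when information is measured in nats. The two-state signal, the transition probabilities, and every variable name below are hypothetical choices made only for illustration.

```python
import numpy as np


def mutual_information(joint):
    """Mutual information, in nats, of a 2-D joint probability table."""
    joint = np.asarray(joint, dtype=float)
    row = joint.sum(axis=1, keepdims=True)   # marginal over columns
    col = joint.sum(axis=0, keepdims=True)   # marginal over rows
    mask = joint > 0                         # skip zero cells (0 * log 0 = 0)
    return float(np.sum(joint[mask] * np.log(joint[mask] / (row @ col)[mask])))


# Hypothetical toy world: a two-valued signal x and a two-valued system state s.
p_x = np.array([0.5, 0.5])                   # distribution of the signal now
p_next_given_x = np.array([[0.8, 0.2],       # rows: signal now, cols: signal next
                           [0.2, 0.8]])
p_s_given_x = np.array([[0.9, 0.1],          # rows: signal now, cols: system state
                        [0.1, 0.9]])

joint_x_s = p_x[:, None] * p_s_given_x       # p(signal now, state)
joint_next_s = p_next_given_x.T @ joint_x_s  # p(signal next, state)

memory = mutual_information(joint_x_s)       # what the system knows about now
predictive = mutual_information(joint_next_s)  # what of that bears on the future
nostalgia = memory - predictive              # remembered, but useless for prediction

print(f"memory     = {memory:.3f} nats")
print(f"predictive = {predictive:.3f} nats")
print(f"nostalgia  = {nostalgia:.3f} nats (assumed bound: wasted work >= k_B T * nostalgia)")
```

Because the system in this toy learns about the next signal only through the present one, its predictive information can never exceed its memory, so the nostalgia comes out non-negative. A system that tracked the signal less faithfully, or a signal that was easier to predict, would shrink the gap and with it the assumed floor on wasted work.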

Quite literally, work: Whirlpools set up when you jam down a piston are not (yet) lost—with careful tracing, one can jitter the piston back to recover their energy. It is only over time, as they break apart and become unknowable, as they become increasingly unpredictable to you, that the work that went into their creation is lost.

Here is the melancholy of a forgotten memory, a childhood room packed into boxes, the irrecoverable details of an afternoon drizzle, appearing quite literally in physical law. Those memories trace a past that no longer matters. Brought to our attention, they tell us a story of loss, and threaten to consume our present, if we’re not careful. In physics, too, nostalgia carries a penalty.

We can invert this result: Dissipation is connected to how hard it is to recover what a system used to look like. In order to rewind, one needs to retrodict, to predict backward—and complete retrodiction is impossible when nostalgia means that many different pasts are compatible with the same future. The exact mathematical relations between nostalgia, irreversibility, and dissipation are elaborate, specific, and always a bit of a surprise when they fall out of the equations.

One of the more remarkable contributions to the new thermodynamics in recent years came from Jeremy England of the Massachusetts Institute of Technology, in a paper published the year after the one by Still’s group.2 Focusing on the irreversibility of a system, rather than its nostalgia, England put together an account of the biological world. He described the ways in which evolution might drive organisms not only to make use of the free energy in their environments, but to do so in a maximally dissipative fashion.

England’s work seems to explain why, over 3 billion years, our ecosystem turned into a giant green solar panel, feeding towers of herbivores and predators as part of a natural process that smears out the energy of the sun. We exist, in this interpretation, because we dissipate as reliably as possible the massive source of work at the center of our solar system.

While Still’s work connects nostalgia to dissipation and loss, England’s work seems to say that life itself is brought into being by the demands of dissipation. Beings like us exist precisely because we create our worlds—physical, chemical, biological, mental, social—and tear them down faster than the alternatives. Nostalgia may be bittersweet, but it may also underwrite our existence.

Simon DeDeo is a professor in the School of Informatics and Computing at Indiana University and external faculty at the Santa Fe Institute.

Acknowledgements

I thank Matteo Smerlak for extensive conversations, and Susanne Still, Jeremy England, and Jascha Sohl-Dickstein for lectures held at the Santa Fe Institute and Arizona State University’s BEYOND Center. This work was written in part during a stay at the Perimeter Institute for Theoretical Physics, supported by the Government of Canada through Industry Canada and by the Province of Ontario through the Ministry of Economic Development & Innovation.

References

1. Still, S., Sivak, D.A., Bell, A.J., & Crooks, G.E. The thermodynamics of prediction. Physical Review Letters 109, 120604 (2012).

2. England, J.L. Statistical physics of self-replication. The Journal of Chemical Physics 139, 121923 (2013).