When the Eastern Black Swallowtail caterpillar is disturbed by a would-be predator—a bird or insect—it stirs into motion, briefly darting out a pair of fleshy, smelly horns. To humans, these horns might appear yellow—a color known to attract birds and many insects—but from a predator’s-eye-view, they appear a livid, almost neon violet, a color of warning and poison for some birds and insects.

“It’s like a jump scare,” says Daniel Hanley, an assistant professor of biology at George Mason University. “Startle them enough, and all you need is a second to get away.”

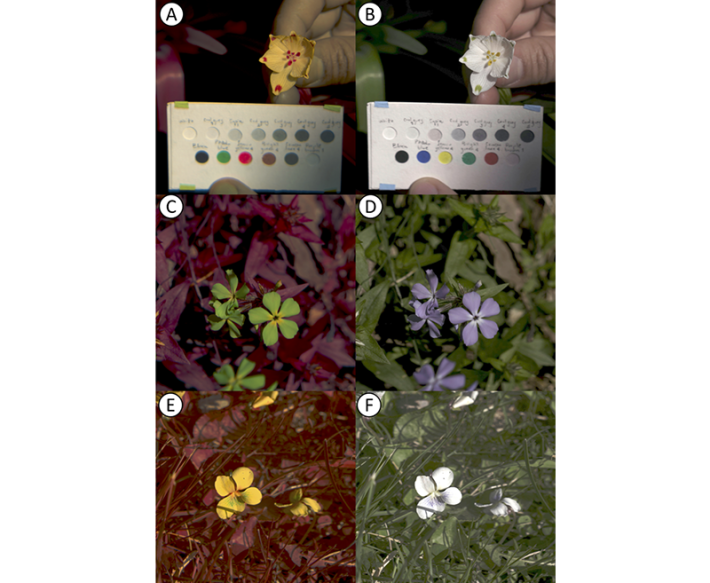

Hanley is part of a team that has developed a new technique to depict on video how the natural world looks to non-human species. The method is meant to capture how animals use color in unique—and often fleeting—behaviors like the caterpillar’s anti-predator display.

Most animals, including birds and insects, possess their own ways of seeing, shaped by the light receptors in their eyes. Human retinas, for example, are sensitive to three bands of light wavelengths (blue, green, and red), which enables us to see approximately 1 million different hues in our environment. By contrast, many mammals, including dogs, cats, and cows, sense only two bands. But birds, fish, amphibians, and some insects and reptiles typically can sense four, including ultraviolet light. Their worlds are drenched in a kaleidoscope of color: they can often see 100 times as many shades as humans do.

Hanley’s team, which includes not just biologists but multiple mathematicians, a physicist, an engineer, and a filmmaker, claims that their method can translate the colors and gradations of light perceived by hundreds of animals to a range of frequencies that human eyes can comprehend, with an accuracy of roughly 90 percent. That is, they can simulate the way a scene in a natural environment might look to a particular species of animal, including which shifting shapes and objects might stand out most. The team uses commercially available cameras to record video in four color channels (blue, green, red, and ultraviolet) and then applies open-source software to translate the picture according to the mix of light receptor sensitivities a given animal may have.
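For readers who think in code, the translation step described above can be pictured as a weighted sum: each of an animal's photoreceptors responds to some mix of the four recorded channels. The sketch below is only an illustration of that idea, not the team's software. The function name, the receptor names, and every weight in `HYPOTHETICAL_BEE_WEIGHTS` are assumptions made up for this example, not measured sensitivities.

```python
# Illustrative only: the weights below are invented, not measured.
# Each row says how strongly one hypothetical photoreceptor responds to
# the camera's (ultraviolet, blue, green, red) channels.
HYPOTHETICAL_BEE_WEIGHTS = {
    "uv_receptor":    (0.90, 0.10, 0.00, 0.00),
    "blue_receptor":  (0.05, 0.80, 0.15, 0.00),
    "green_receptor": (0.00, 0.10, 0.80, 0.10),
}


def receptor_responses(pixel, weights):
    """Estimate photoreceptor responses for one camera pixel.

    pixel   -- (uv, blue, green, red) intensities, each between 0 and 1
    weights -- mapping of receptor name to four per-channel weights
    """
    return {
        name: sum(w * c for w, c in zip(row, pixel))
        for name, row in weights.items()
    }


# A hypothetical pixel with strong ultraviolet and red, weak blue and green.
pixel = (0.6, 0.1, 0.2, 0.7)
print(receptor_responses(pixel, HYPOTHETICAL_BEE_WEIGHTS))
```

Swapping in a different table of weights would stand in for a different species, which is the sense in which one recording can be "translated" for many animals.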

Previous methods of simulating animal vision required a laborious process that involved the use of a spectrometer, a bulky piece of lab equipment that provides a wavelength-by-wavelength estimation of reflected light. Researchers would take a series of photographs of the same object, through four filters, and overlay the resulting photographs, but only stationary objects could be captured, not moving ones. “You simply cannot swap out a filter while an animal is moving and then have comparable photographs at another wavelength range,” says Hanley.

There are some caveats, of course. First, the animal-view images are presented in “false colors”—a kind of human-eye interpretation of what animals see, given that our range of vision doesn’t run to ultraviolet or polarized light. “So this is just an abstraction, to help us understand what they might be experiencing,” Hanley says. Second, on a philosophical level, the pictures do not really capture what the animals are seeing, only what their photoreceptors are detecting.

“We're giving you the outputs of what we think is happening at the photoreceptor,” explains Hanley. “But what the animal does with that information in its brain is different.”
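The "false colors" caveat can be made concrete with a small sketch. A screen has only red, green, and blue to work with, so any receptor signal outside human vision, such as ultraviolet, has to be assigned to one of those display colors. The assignment below (ultraviolet shown as blue, blue as green, green as red) is one possible convention chosen for illustration. The names `false_color` and `HYPOTHETICAL_MAPPING` and the sample response values are assumptions, not taken from the researchers' software.

```python
# Illustrative only: one possible false-color convention.
# Keys are display channels; values are the hypothetical receptor shown there.
HYPOTHETICAL_MAPPING = {
    "red": "green_receptor",
    "green": "blue_receptor",
    "blue": "uv_receptor",
}


def false_color(responses, mapping):
    """Turn receptor responses (0 to 1) into an (r, g, b) triple (0 to 255)."""
    def to_byte(value):
        # Clamp to the valid range before scaling to 8 bits.
        return round(255 * min(1.0, max(0.0, value)))

    return tuple(
        to_byte(responses[mapping[channel]])
        for channel in ("red", "green", "blue")
    )


# Made-up receptor responses for a surface that reflects a lot of ultraviolet.
responses = {"uv_receptor": 0.55, "blue_receptor": 0.14, "green_receptor": 0.24}
print(false_color(responses, HYPOTHETICAL_MAPPING))
```

The result is a color a human can look at, but it stands in for the receptor signal rather than reproducing what the animal experiences, which is the point Hanley makes.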

The cameras they use can’t capture especially swift behaviors from an animal-eye view, such as a cobra strike; nor are they well suited to some lower-light environments, such as the floor of a dense rainforest. They also miss certain dimensions of specific animals’ vision, such as the magnetic fields detected by some birds, or visual acuity, which can vary wildly among avian species. While birds of prey have oversized eyes that help them see and corner tiny creatures scurrying at a distance, songbirds are “what we would deem borderline blind,” says Hanley. “Everything would be blurry for them.”

Nonetheless, the ability to simulate an approximation of the colors an animal would see holds a great deal of promise for researchers, Hanley and his colleagues say. It could help scientists capture and analyze many animal behaviors, such as the ways in which they use color or light to draw attention, ward off attention, locate food, or communicate with fellow creatures, from potential predators to possible mates.

The researchers also think their technology could be used for conservation purposes. It could, for example, help reduce avian window strikes, through analysis of the positioning of ultraviolet light stickers—clear to us, and black to birds—against glass.

The short videos the scientists create could help them see things they might have otherwise missed, and illuminate some dark corners of the natural world.

Lead image from Vasas, V., et al. Recording animal-view videos of the natural world using a novel camera system and software package. PLOS Biology (2024).

*An earlier version of this article stated that the Eastern Black Swallowtail caterpillar wraps itself in a leaf when preparing to become a butterfly. It does not. We have corrected the error.
