Michael Levin, a developmental biologist at Tufts University, has a knack for taking an unassuming organism and showing it’s capable of the darnedest things. He and his team once extracted skin cells from a frog embryo and cultivated them on their own. With no other cell types around, they were not “bullied,” as he put it, into forming skin tissue. Instead, they reassembled into a new organism of sorts, a “xenobot,” a coinage based on the Latin name of the frog species, Xenopus laevis. It zipped around like a paramecium in pond water. Sometimes it swept up loose skin cells and piled them until they formed their own xenobot—a type of self-replication. For Levin, it demonstrated how all living things have latent abilities. Having evolved to do one thing, they might do something completely different under the right circumstances.

Not long ago I met Levin at a workshop on science, technology, and Buddhism in Kathmandu. He hates flying but said this event was worth it. Even without the backdrop of the Himalayas, his scientific talk was one of the most captivating I’ve ever heard. Every slide introduced some bizarre new experiment. Butterflies retain memories from when they were caterpillars, even though their brains turned to mush in the chrysalis. Cut off the head and tail of a planarian, or flatworm, and it can grow two new heads; if you amputate again, the worm will regrow both heads. Levin argues the worm stores the new shape in its body as an electrical pattern. In fact, he thinks electrical signaling is pervasive in nature; it is not limited to neurons. Recently, Levin and colleagues found that some diseases might be cured by retraining the gene and protein networks as one might train a neural network. But when I sat down to talk to the audacious biologist on the hotel patio, I mostly wanted to hear about slime mold.

How did you come to cut up slime mold?

I was doing a home-school unit with my oldest son, and we wanted to understand what the slime mold Physarum does. It’s growing in a Petri dish of agar. It behaves by changing its shape. It crawls this way or that way. Also, it’s unicellular; the whole thing is one cell. No matter how big it is—it could be meters across—it’s one cell.

You’ve got a little treat, which is an oat flake, and it starts to grow toward the oat flake. Then you can take a razor blade and cut off the leading edge. After, you have two individuals: You have the big one, and you get the small one. Now, the small one has a choice to make. It can go get the food first, or it can go rejoin the rest of the Physarum and then go get the food. If it gets the food, it doesn’t have to share the food with this giant mass behind it. The nutrient density of that reward is huge.

With any kind of behaving organism, there is a calculus of payoff: If I do this, I win this much, and if I do that, I win that much. You have to make decisions. It’s always a trade-off of what are you going to do. People study this with game theory and things like the Prisoner’s Dilemma. You and I are competing and potentially cooperating over some resources. The number of players is constant. But the Physarum scenario is a kind of Prisoner’s Dilemma game where the number of players isn’t constant. Not only can you cooperate or defect, but you could also split and merge. How do you think about making decisions when the borders of your self are not fixed?

So what happened?

It preferred to first rejoin the rest.

The smaller individual that’s been created by the razor cut is faced with the choice, “I can take this food and keep it to myself” or “I cease to exist.” Why would there be any alternative for that creature? Why would it want to reassimilate into the collective?

Because existing as a separate individual may not be the highest payoff. Once you rejoin the rest of the group, you lose the ability to be an independent individual, but what you gain is greater stability as part of a whole, because you’re less likely to dry out, for example.

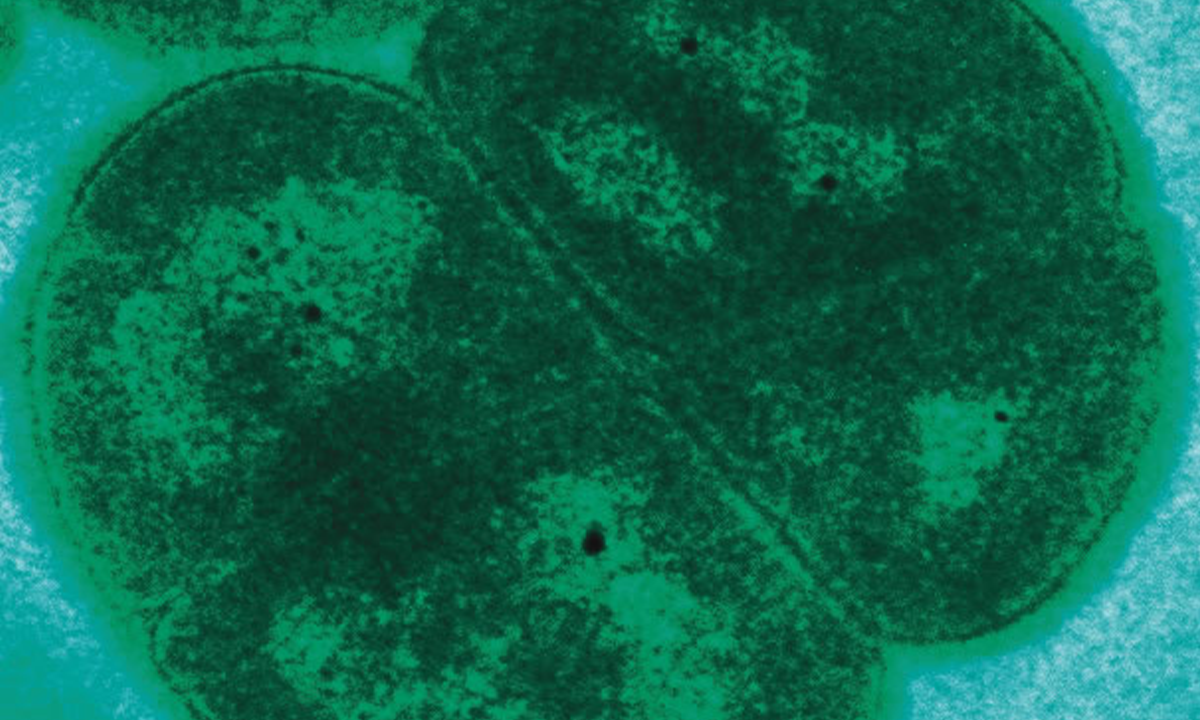

Are the cells of our body continually measuring the payoff of cooperating vs. defecting, too?

Yes, but if you are a cell that’s connected strongly to its neighbors, you are not able to have these kinds of computations. “Well, what if I go off on my own? I could just leave this tissue. I could go somewhere else where life is better. I could set up my own little tumor.” You can’t have those thoughts because you are so tightly wired into the rest of the network. You can’t say, “Well, I’m going to ….” There is no I; there’s we. You can only have those thoughts, “What am I going to get?” when you’re not part of the group.

But as soon as there’s carcinogen exposure or maybe an oncogene that gets expressed, the electrical connection starts to weaken. It’s a feedback loop, because the more you have those thoughts, the more you’re like, “Well, maybe let me just turn that connection down a little bit. Now I’m really coming into my own. Now I’m out of here. I’m metastasizing.”

So a carcinogen would work in this case by disrupting the bioelectrical connections.

Exactly. What we’ve done in the frog system, and we’re now moving into human cells, is to show that [electrical weakening] happens, and that you can prevent it, and prevent or normalize tumors, by artificially forcing the electrical connection. We can shoot up a frog with strong human oncogenes and then show that, even though those are blazingly strongly expressed, there’s no tumor because you’ve intervened. You’ve forced the cells to be in electrical communication despite what the oncogene is trying to get them to do.

One slide you skipped over in your talk was, “Why don’t robots get cancer?” I’m intrigued!

The reason cancer’s not a problem in today’s robotics is because we build with dumb parts. The robot may or may not be intelligent to some degree, but at the next level down, all the parts are passive; they don’t have any goals of their own. So, there’s never a chance that they’re going to defect. Your keyboard’s never going to wander off and try to have its own life as a keyboard. Whereas in biology, it’s a multi-scale architecture where every level has goals, and there’s pros and cons to this. The pros are you get these amazing things we’re talking about now. The con is that sometimes you get defections as a failure mode, and you get cancer.

To go back to the slime mold, how does it decide?

We know it has memory. It can learn from experience. We know it does make all kinds of decisions if you give it various options of things it can do. The whole thing is a hydraulic computer. We injected little fluorescent beads into the thing. There are flows through the cytoplasm. And the cool thing is that if you have a fork like a “Y,” you see that it just shuts off one branch, and the stream only goes the other way. It has selective control over each branch point—it’s a synapse, basically.

Almost like a little transistor.

Exactly right. Once you have that, you can make logic gates, and you can make anything. Now you can do computations.

Another thing is it has these vibrations. It’s constantly tugging on the surface. We have this wild paper where we put one glass disc here, three glass discs back here. There’s no chemicals, there’s no food, no gradients. It’ll cogitate for about four hours, just kind of sitting here doing nothing. And then boom!—it grows out toward the three.

What it’s doing is sensing strain in the medium. It’s pulling, and it feels the vibrations that come back. It’s ridiculously sensitive because each disc is like 10 milligrams. For whatever bizarre reason, it prefers the heavier mass. During those four hours it collects the data, decides where it’s going, and then, boom!

If it’s sensitive to vibrations, does it react to music?

We grew the Physarum on a plate, and the plate was sitting on a speaker, and my student was driving the speaker with her iPhone. And we could see that for certain types of music, it would grow quite differently than for others. Some of them, it grew very nicely. Some of them, it just didn’t grow at all. It just really hated it; it just hunkered down.

What music in particular?

The thing is, she was 19 years old, so I didn’t recognize any of the artists that she was telling me about. But that’s a real easy home science project. Somebody could totally do that with their kids.

What else have you discovered about Physarum psychology?

If you place a small piece of food nearby and then a much larger piece of food far away, it tends to go for the larger piece and bypasses the small one, which I thought was really weird, because why wouldn’t you grab it along the way? But it just sort of fixated on the big one and went for that. Maybe it thought it would come back later for the smaller.

Because it’s so slow and small, it can’t catch its prey; it grows probably a centimeter every couple of hours. My postdoc and I had this idea: What if it has a way of slowing other things down to its level? She put some planaria [flatworms] on there, and she saw that the planaria basically stopped moving; they got anesthetized. They slowed down to the point where the Physarum could eat them. So we made an extract out of it and put it on frog embryos, and the frog embryos slowed down their development. They didn’t stop; they just really slowed down. So it probably is a real slowdown as opposed to some kind of anesthetic.

A big theme of your work has been that organisms have latent abilities—that the behavior we see in nature is contextual and that, by altering the circumstances, you coax them to do totally different things. What are some examples?

We are lulled into thinking that frog eggs always make frogs, and acorns always make oak trees. But the reality is that once you start messing around with their bioelectrical software, we can make tadpoles that look like other species of frogs. We can make planaria that look like other species of flatworms across 150 million years of evolutionary distance—no genetic change needed, same exact hardware. The same hardware can have multiple different software modes.

You can look at frog skin cells and say, “All they know how to do is how to be this protective layer around the outside. What else would they know how to do?” But it turns out that if you just remove the other cells that are forcing them to do that, you find out what they really want to do—which is to make a xenobot and have this really exciting life zipping around and doing kinematic self-replication. They have all these capacities that you don’t normally see. There’s so much there that we haven’t even begun to scratch the surface of.

Some object to speaking of what the cells “know” or “want” to do. Do you think that a concern about being anthropomorphic or anthropocentric has hindered research in this?

Incredibly so. I love to make up the words for this stuff because I think they need to exist—“teleophobia.” People go screaming when you say, “Well, it wants to do this.” People are very binary because they’re still carrying this pre-scientific holdover. Back in before-science times, you could be smart like humans and angels, or you could be dumb like everything else. That was fair enough for our first pass in 1700, but now we can do better. You don’t need to be at either of these endpoints. You could be somewhere in the middle. When I say this thing “wants to do XYZ,” I’m not saying it can write poetry about its dreams. It doesn’t necessarily have that kind of second-order metacognition; it doesn’t know what it wants. But it still wants.

Do you think there are agents, maybe we’ve encountered them already, that we’re not recognizing or couldn’t recognize as such?

All over the place. We’re made of them. Charles Abramson and I wrote this primer for figuring out how intelligent any system is. Could it habituate? Could it be sensitized? Can it do associative learning? Can it do fear conditioning? I’m sure once we start looking, we’re going to find degrees of agency all over the place.

Given that we could conceive of, say, AI systems or tornadoes as agents, how should we respond? Does our response have ethical implications?

I absolutely think it has massive ethical implications. Everybody who doesn’t read a lot of sci-fi is so behind on this. There are so many things out there that can suffer that have nothing to do with a human brain. I do think that you could formulate something like the new Golden Rule: “Be nice to goal-seeking systems in some sort of proportion to their capacity to do it.” I don’t think we want to get to the point where we are afraid to roll bowling balls down an inclined plane because we don’t want to hurt their feelings. People who worry about various kinds of AI today, never mind tomorrow’s, aren’t crazy at all. Humanity has a long history of moral lapses toward creatures that don’t look enough like them.

George Musser is an award-winning science writer and the author of the forthcoming book Putting Ourselves Back in the Equation, as well as Spooky Action at a Distance and The Complete Idiot’s Guide to String Theory. Follow him at @gmusser@mastodon.social and @gmusser.bsky.social.

Lead photo: Michael Levin on a hike in the Kathmandu Valley. Photo by George Musser.