“Literature is the opposite of data,” wrote novelist Stephen Marche in the Los Angeles Review of Books in October 2012. He cited his favorite line from Shakespeare’s Macbeth: “Light thickens, and the crow makes wing to the rooky wood.” Marche went on to ask, “What is the difference between a crow and a rook? Nothing. What does it mean that light thickens? Who knows?” Although the words work, they make no sense as pure data, according to Marche.

There are many who would disagree with him. With the rise of digital technologies, the paramount role of human intuition and interpretation in humanistic knowledge is being challenged as never before, and the scientific method is tiptoeing into the English department. Some humanists are eagerly adopting these new tools, while others find them problematic. The rapid ascent of the digital humanities is spurring pitched debate over what it means for the profession, and whether the attempt to quantify something as elusive as human intuition is simply misguided.

Today, huge amounts of the world’s literature have been digitized and are accessible to scholars with the click of a mouse. Simple embellishments on keyword search can yield fascinating insights on this data. Take, for example, Google’s N-gram server, which debuted with a splash in 2011. The server allows you to track the frequency of words or word combinations (“bigrams,” “trigrams,” or “N-grams”) in the Google Books database over time. You can see, for instance, how words change meaning. Until 1965, “black” was just a color, occurring about as often as “red” and quite a bit less often than “white.” But between 1965 and 1970, the word “black” suddenly took on a new meaning, and its frequency leaped across the gap between “red” and “white.” The N-gram frequency graph is seductive; looking at it, you feel as if history has been captured on an x-y plot.
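The counting behind such a graph can be sketched in a few lines of Python. This is a minimal illustration, not Google's implementation: the function names, the whitespace tokenizer, and the two-year miniature corpus are all assumptions made up for the example.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return every run of n consecutive tokens as a tuple."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def relative_frequency(texts_by_year, phrase):
    """For each year, the share of all n-grams that match the phrase."""
    target = tuple(phrase.lower().split())
    n = len(target)
    result = {}
    for year, texts in sorted(texts_by_year.items()):
        counts = Counter()
        for text in texts:
            # Naive tokenizer (an assumption): lowercase, split on whitespace.
            counts.update(ngrams(text.lower().split(), n))
        total = sum(counts.values())
        result[year] = counts[target] / total if total else 0.0
    return result

# Hypothetical miniature corpus, invented for illustration only.
corpus = {
    1960: ["the black cat sat on the red mat"],
    1970: ["black power and black pride", "the white house"],
}
freq = relative_frequency(corpus, "black")
print(freq)
```

Dividing by the total number of n-grams in each year matters: a raw count would rise simply because more books were published, while a share shows whether the word itself became more common.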

For the digital humanities, N-grams are like a “gateway drug,” says Matthew Jockers, an English professor at the University of Nebraska. The really potent stuff is topic modeling. A keyword search can turn up a mention of a “bond” in a book, but it can’t tell you whether the bond in question is a financial instrument, a chemical structure, or a means of restraining prisoners. All these meanings are mixed up in the ambiguity of human language, which comes naturally to us but is an impenetrable code to the computer.

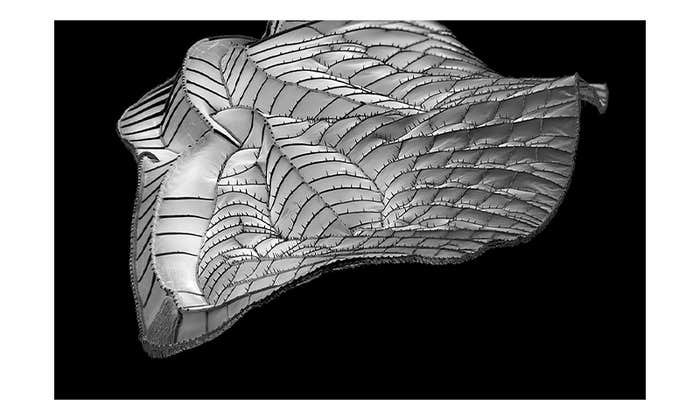

Topic modeling looks beyond the words to the context in which they are used. It can infer what topics are discussed in each book, revealing patterns in a body of literature that no human scholar could ever spot. Topic-modeling algorithms allow us to view literature as if through a telescope, scanning vast swaths of text and searching for constellations of meaning—“distant reading,” a term coined by Franco Moretti of Stanford University. Already, the approach has been applied to a wide range of subjects, such as the Irish view of American slavery in the 19th century, the role of women and black people in early American society, and even the attitudes of teenagers who post on a social messaging service.

Topic modeling overcomes a fundamental limitation of N-grams: You don’t know the context in which the word appeared. Which documents used “black” to mean a color, and which ones used it to refer to a race? N-grams cannot tell you. Thus it’s difficult to interpret what a sudden change in frequency of a word or a phrase might mean, unless you already know. A topic-modeling algorithm infers, for each word in a document, what topic that word refers to. Automatically, without human intervention, it makes the call as to whether “black” refers to a race or a color. In theory, at least, it reaches beyond the word to capture the meaning.

When applied to bodies of text, topic modeling produces “bags” of words that belong together, such as “negro slave dat plantation dis overseer mulatto…” or “species global climate co2 water….” The digital humanities researcher would then infer that these two bags refer to American slavery and climate change, respectively. Each bag corresponds to a topic.
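To make the idea of word-by-word topic assignment concrete, here is a toy sketch of one standard way to fit a topic model, a collapsed Gibbs sampler for latent Dirichlet allocation. It is not the software used by any of the researchers quoted here. The function name, the four tiny documents, and the settings `alpha`, `beta`, and `iterations` are illustrative assumptions, and results on a corpus this small vary with the random seed.

```python
import random
from collections import Counter

def fit_topics(docs, num_topics, iterations=200, alpha=0.1, beta=0.01, seed=0):
    """Toy collapsed Gibbs sampler: assign a topic to every word in every document."""
    rng = random.Random(seed)
    vocab_size = len({w for doc in docs for w in doc})
    doc_topic = [[0] * num_topics for _ in docs]         # tokens per topic in each doc
    topic_word = [Counter() for _ in range(num_topics)]  # word counts per topic
    topic_total = [0] * num_topics                       # tokens per topic overall
    assignments = []
    # Start by giving every token a random topic.
    for d, doc in enumerate(docs):
        zs = []
        for w in doc:
            z = rng.randrange(num_topics)
            zs.append(z)
            doc_topic[d][z] += 1
            topic_word[z][w] += 1
            topic_total[z] += 1
        assignments.append(zs)
    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                z = assignments[d][i]
                # Take this token out of the counts before resampling it.
                doc_topic[d][z] -= 1
                topic_word[z][w] -= 1
                topic_total[z] -= 1
                # A topic is likelier if it is common in this document
                # and if this word is common in that topic.
                weights = [
                    (doc_topic[d][k] + alpha)
                    * (topic_word[k][w] + beta)
                    / (topic_total[k] + beta * vocab_size)
                    for k in range(num_topics)
                ]
                z = rng.choices(range(num_topics), weights=weights)[0]
                assignments[d][i] = z
                doc_topic[d][z] += 1
                topic_word[z][w] += 1
                topic_total[z] += 1
    return topic_word, assignments

# Hypothetical miniature corpus, invented for illustration only.
docs = [
    "slave plantation overseer slave plantation".split(),
    "climate water species climate global".split(),
    "plantation overseer slave overseer".split(),
    "global climate species water water".split(),
]
topic_word, assignments = fit_topics(docs, num_topics=2)
for k, counts in enumerate(topic_word):
    bag = [w for w, c in counts.most_common(4) if c > 0]
    print(k, " ".join(bag))
```

The algorithm never sees a label such as “slavery” or “climate change.” It only learns which words tend to share documents, and the researcher supplies the interpretation afterward.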

For digital humanists, this approach opens up a world of possibilities. “We’ve often written about the way that Irish people sympathized with the plight of slaves in America in the 1800s,” Jockers says. “Previously, we would have been sitting in my office trading books, saying, ‘Here’s an Irish book addressing slavery, isn’t that interesting.’ ” Now, he says, he can tell you which 250 books deal with slavery. Topic modeling also lets you mine for new themes and topics. Jockers uncovered the steady rise of the “afternoon tea” ritual in the 1800s by applying topic modeling to 19th-century English novels, an exercise that turned up topic groups like “afternoon luncheon morning drawing-room course to-day visitors tea…” On occasion, the computer has even outperformed its human user. When MIT graduate student Karthik Dinakar used topic modeling to study social media posts by teenagers, the computer correctly decoded a post saying “She’s forcing me to give up the goods” as being about sexual intercourse—a bit of American slang that Dinakar, who grew up in India, missed.

Topic-modeling algorithms can also turn up unexpected connections between themes. For example, Sharon Block, a historian at the University of California at Irvine, found that the word “woman” and the word “Negroe” each appeared chiefly in just one topic in the archive of the Pennsylvania Gazette, an 18th-century newspaper: “servant/slave.” Block’s finding demonstrates the marginalization of both blacks and women very concretely: To this predominantly mercantile newspaper (think the Wall Street Journal, minus two centuries), blacks and women existed only as property.

By building a bridge between the humanities and computer science, the digital humanities is changing each discipline. For computer scientists, trained in a binary world of yes/no, true/false, the bridge leads to a vague new world, with many shades of grey—a disorienting, but exciting, place. Humanists, by contrast, “have known there is no right answer for hundreds of years,” and they are comfortable with that, says David Blei, a computer scientist at Princeton and the co-inventor of latent Dirichlet allocation, the primary topic-modeling algorithm currently being used in the digital humanities. “Instead, they’re looking for perspective.”

On the other side of the bridge, topic modeling introduces quantitative arguments into the humanities, a pretty big deal in an area that many people chose to study because it was not quantitative. When Block topic-modeled half a million abstracts from historical journals to track the evolution of women’s history, readers of her paper couldn’t get past the graphs. “One reviewer said that the article was clearly written by a computer scientist who doesn’t understand our field,” she says. The journal that accepted her paper tried to limit her to, at most, three tables or graphs. “I asked them, did you read it? There’s no article without them,” she says.

Also relatively new to the humanities is something that has long been an indispensable element of the scientific method: falsifiability. Jockers believes the day is coming when humanists will test—and sometimes falsify—their hypotheses statistically. He has already done so himself. In his own book Macroanalysis, Jockers had argued, using a topic model, that writers focusing on political or religious themes were more likely than other writers to use a pseudonym. Jockers and David Mimno, a computer scientist at Cornell University, put this hypothesis to a statistical test, running the topic model many times to see if the discrepancy could be attributed to a chance variation. While many of Jockers’ other hypotheses held up, this one did not—it turned out that two outlying texts had skewed the results. “The smell of a smoking gun quickly dissipated,” Jockers wrote.
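What a statistical check of this kind looks like can be sketched with a permutation test. To be clear, this is a simpler, swapped-in technique and not the procedure Jockers and Mimno ran, which involved fitting the topic model many times. The function names and all of the numbers below are hypothetical, chosen only to show how two outlying texts can create an apparent gap between groups.

```python
import random

def mean(xs):
    return sum(xs) / len(xs)

def permutation_p_value(group_a, group_b, trials=10000, seed=0):
    """Fraction of random relabelings whose mean gap is at least the observed gap."""
    rng = random.Random(seed)
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    hits = 0
    for _ in range(trials):
        # Shuffle the labels: if authorship type does not matter,
        # any split of the pooled books is as plausible as the real one.
        rng.shuffle(pooled)
        a, b = pooled[:len(group_a)], pooled[len(group_a):]
        if abs(mean(a) - mean(b)) >= observed - 1e-12:
            hits += 1
    return hits / trials

# Hypothetical data: share of each book devoted to a "political" topic.
pseudonymous = [0.02, 0.03, 0.01, 0.02, 0.60, 0.55]  # last two are outliers
other = [0.02, 0.03, 0.02, 0.01, 0.03, 0.02, 0.02, 0.01]

p_all = permutation_p_value(pseudonymous, other)
p_without_outliers = permutation_p_value(pseudonymous[:4], other)
print(p_all, p_without_outliers)
```

A small value suggests the gap between groups is unlikely to be chance alone; a large one suggests it could be. Comparing the result with and without the two extreme books shows how much of an apparent pattern rests on a handful of texts.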

Perhaps surprisingly, Marche, the digital humanities skeptic, singles out this aspect of topic modeling for praise. “To have actual falsifiable questions in humanities… that’s magnificent,” he says. You can almost hear the “but” coming. “The spirit is wonderful, but they aren’t ready to deal with the hard questions,” he says. “What is a falsifiable question you can ask about Keats’ ‘Ode to a Nightingale’?”

This is perhaps the most frequently heard refrain in the criticisms of digital humanities: Where’s the beef? Where are the great insights?

Supporters argue that the digital humanities have produced new insights, but that the constellations of meaning they generate are not the kinds of insights humanists are used to. For example, when Ted Underwood, an English professor at the University of Illinois, topic-modeled 4,275 books written between 1700 and 1900, he noticed that changes in literature happen more gradually than we give them credit for.

For the first hundred years of that period, for example, the proportion of “old” Anglo-Saxon words in use declined. But over the century that followed, literature trifurcated. In poetry, the use of “old” words increased markedly. In fiction, “old” words also became more popular, but less dramatically. In nonfiction, however, the frequency of “old” words remained unchanged from the previous century. The data reflected a complex set of historical processes—the emergence of fiction and poetry that self-consciously broke from classical themes and instead treated the experiences of common people. Such a change had often been attributed to the romantic school, but the data showed it playing out over a much longer period of time and continuing long after the romantics were supposedly passé. “Our vocabulary is all schools, movements, periods, cultural turns,” Underwood says. “If you have a trend that lasts a century or more, it’s really hard to grapple with.”

Digital humanities technologies can help us see gradual changes, whether in literature or elsewhere. Humans have difficulty comprehending change that happens on the time scale of a human life, or longer. If Underwood’s hypothesis is correct, we need computers to help fill in our blind spot. Topic modeling does not overturn or replace our previous ways of seeing; it enhances them. “It is not a substitute for human reading, but a prosthetic extension of our capacity,” says Johanna Drucker, a professor of information studies at UCLA.

Of course, it takes practice to get used to prosthetics. The traditional humanities taught us to read critically, to see that meanings are often hidden beneath the surface. Now, a new challenge has appeared: how to commingle the critical reading we are good at with the distant reading the computer is good at. We have started to become comfortable reading books through Kindles, iPads, and other devices, observes Matthew K. Gold, a professor of digital humanities at the Graduate Center of the City University of New York. “Are we willing to have them help us read, and help us perform the critical interpretation?” Drucker thinks that we will be. Eventually, she predicts, the digital humanities “will be part of general literacy.”

So, can a computer fathom Shakespeare’s “rooky wood”? Does the meaning of literature reside only in the words, or is it created in the human brain by the act of reading the words? Isaac Asimov’s words from I, Robot come to mind: “People say ‘It’s as plain as the nose on your face.’ But how much of the nose on your face can you see, unless someone holds a mirror up to you?”

Dana Mackenzie is a freelance mathematics and science writer based in Santa Cruz, California. His most recent book is The Universe in Zero Words: The Story of Mathematics as Told Through Equations, published in 2012 by Princeton University Press.